Engineering Insights

In-depth analysis of AI systems, cloud architecture, distributed systems, and engineering leadership.

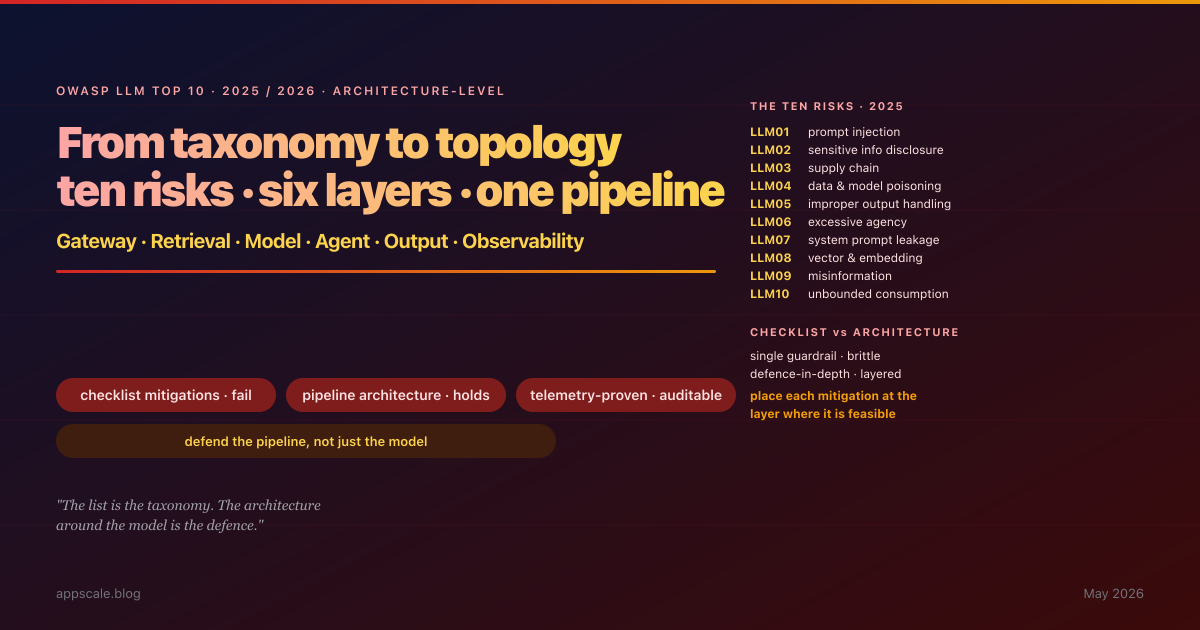

OWASP LLM Top 10 (2025/2026): Architecture-Level Mitigations Mapped to Each Risk

The OWASP Top 10 for LLM Applications gave the industry a shared vocabulary for LLM risk in 2023 and a sharper, incident-evidenced revision in 2025. What it never gave — and was never intended to give — is the architecture that actually contains those risks in production. This article walks the 2025 list, and for each of the ten risks specifies the architectural mitigation that contains it, the topological layer where the mitigation lives (gateway, retrieval, model serving, agent loop, output handling, observability), the telemetry that proves it is working, and the common failure modes when teams treat the list as a checklist rather than a pipeline-architecture specification. New entries in the 2025 revision — System Prompt Leakage (LLM07), Vector and Embedding Weaknesses (LLM08), Unbounded Consumption (LLM10) — are addressed with the same per-risk architectural placement. The article closes with eight anti-patterns and a five-stage maturity ladder from "we read the list" to a continuous adversarial-testing capability that exercises the pipeline daily.

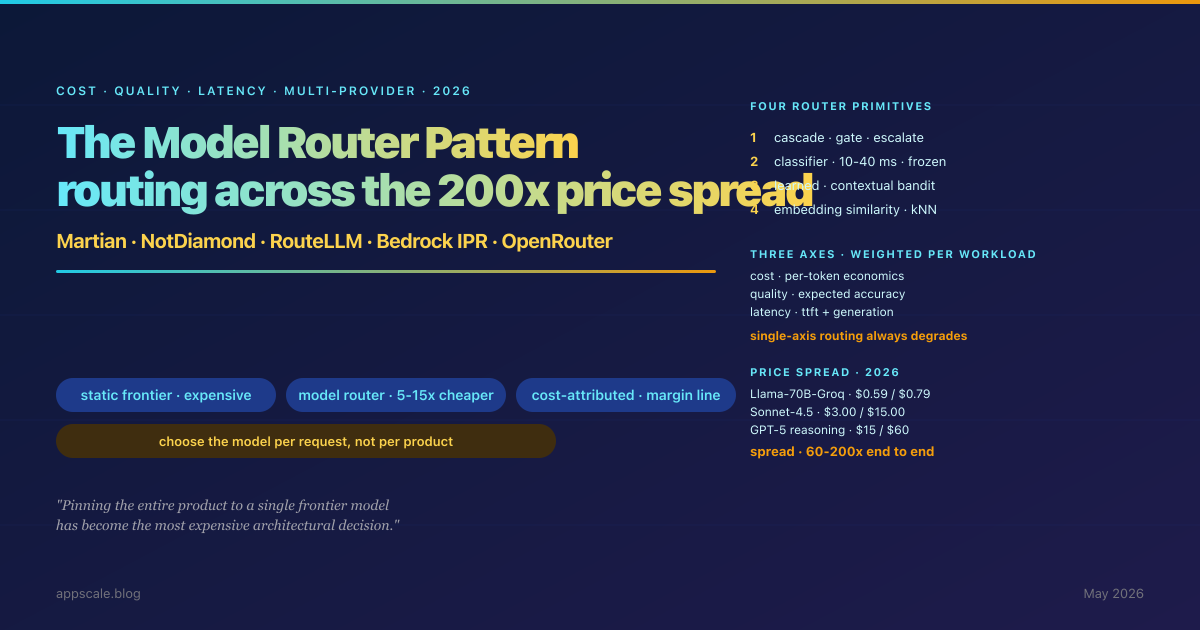

The Model Router Pattern: Cost-, Quality-, and Latency-Aware Routing Across LLM Providers (2026)

The static model choice has become the most expensive architectural decision in a 2026 LLM system. The provider market now spans a 60-200x price spread end to end, the quality gap on the dominant production workload is below the noise floor of A/B telemetry, and the latency profile of frontier models has widened — not narrowed. The Model Router Pattern is the architectural answer: a routing layer that, for every inbound request, chooses the cheapest model on the provider mix whose expected quality and latency clear the workload-specific gate, with a deterministic fallback when the routing decision turns out wrong. This article specifies the three routing axes (cost, quality, latency) and why single-axis routing always degrades, the four router primitives (cascade, classifier, learned, embedding-similarity) and when each is right, the 2026 landscape (Martian, NotDiamond, RouteLLM, Bedrock Intelligent Prompt Routing, OpenRouter Auto, Portkey, LiteLLM), the four-component calibration loop, the cost math with worked per-million-token economics, eight anti-patterns, and the five-stage maturity ladder from "we picked GPT" to a router that contributes a measurable line item to gross margin.
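The core routing rule in the blurb above (pick the cheapest model whose expected quality and latency clear the gate, otherwise fall back deterministically) can be sketched in a few lines. This is a minimal illustration, not the article's implementation: `ModelProfile`, `route`, the model names, and all prices, quality scores, and latencies are hypothetical. The fallback here covers only the case where no candidate clears the gate, which is a simplification of falling back after a wrong routing decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical per-model estimates; real values would come from calibration."""
    name: str
    cost_per_mtok: float      # USD per million tokens (illustrative)
    expected_quality: float   # 0..1 score on the workload's eval set (illustrative)
    p95_latency_ms: float     # observed p95 latency (illustrative)


def route(models, min_quality, max_latency_ms, fallback):
    """Return the cheapest model that clears both gates, else the fixed fallback."""
    eligible = [
        m for m in models
        if m.expected_quality >= min_quality and m.p95_latency_ms <= max_latency_ms
    ]
    if not eligible:
        return fallback  # deterministic: always the same model
    return min(eligible, key=lambda m: m.cost_per_mtok)


small = ModelProfile("small", 0.2, 0.80, 300)
mid = ModelProfile("mid", 1.0, 0.90, 600)
frontier = ModelProfile("frontier", 10.0, 0.97, 1500)
models = [small, mid, frontier]

choice = route(models, min_quality=0.85, max_latency_ms=1000, fallback=frontier)
print(choice.name)
```

With these made-up numbers, the 0.85 quality gate excludes the small model and the 1000 ms latency gate excludes the frontier model, so the mid-tier model is selected. Dropping either gate changes the answer, which is the blurb's point about single-axis routing.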

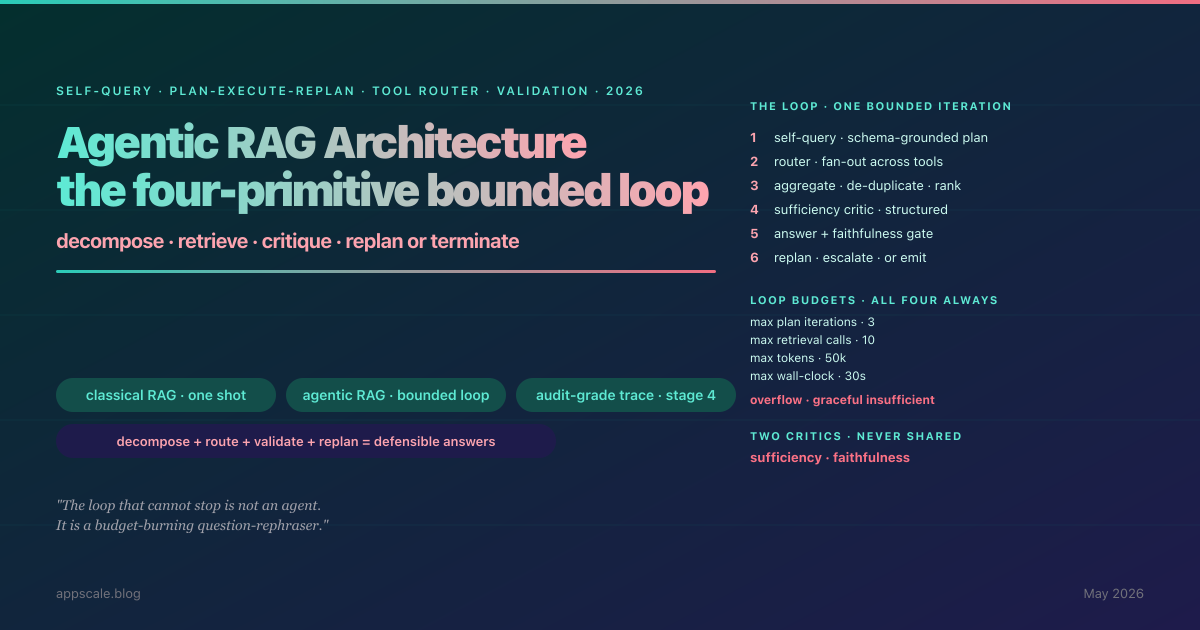

Agentic RAG Architecture: Self-Query, Plan-Execute-Replan, Tool-Augmented Retrieval, and the Validation Loop (2026)

The RAG pipeline that won 2024 — one question, one dense-vector lookup, one LLM call grounded on the top-k — is not the system that ships to production in 2026. The replacement is not bigger embeddings or a better re-ranker; it is the retrieval loop. Agentic RAG composes four architectural primitives: self-query decomposition that turns a multi-part question into a structured plan, plan-execute-replan with explicit iteration budgets that bound the loop, tool-augmented retrieval with a schema-driven router that chooses between dense indexes / SQL / graph / web search, and a validation loop with a sufficiency critic (gate to terminate-or-replan) and a faithfulness critic (deterministic gate before emission). Together they produce a bounded, observable, auditable retrieval agent. This article is the architecture-first playbook: what each primitive does, how they compose, the four failure modes specific to agentic RAG, eight anti-patterns that account for most production incidents, and the five-stage maturity ladder from classical-RAG-with-LLM-wrapper to full audit-grade deployment.

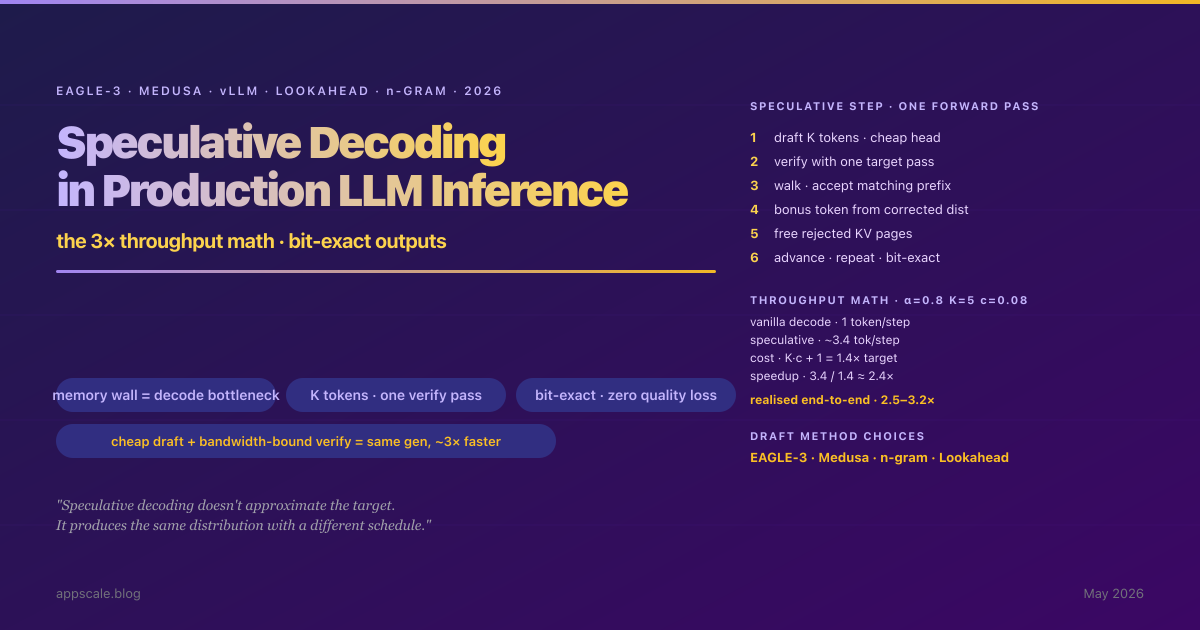

Speculative Decoding in Production LLM Inference: EAGLE-3, Medusa, vLLM, and the 3× Throughput Math (2026)

The single largest under-used lever in production LLM inference in 2026 is speculative decoding. A correctly tuned vLLM deployment with EAGLE-3 or Medusa heads delivers 2.5–3.2× throughput on the same hardware for the same model with bit-exact outputs. The arithmetic: with α=0.8 acceptance, K=5 speculation length, and draft/target cost ratio c=0.08, the speedup formula (1 − α^(K+1)) / ((1 − α) × (K × c + 1)) lands around 2.6× and rises to 3× as α climbs. Most production deployments have not adopted it, not because the technique is exotic but because the operational subtleties (draft-model selection, acceptance-rate decay on long contexts, batch interaction effects, and the cases where naive speculation actively loses) are not well understood. This article is the production playbook: what speculative decoding actually does to the autoregressive loop, the EAGLE / Medusa / Lookahead / n-gram family, the vLLM integration surface, the four workload shapes where speculation wins or loses, the long-context failure mode that catches teams off-guard, eight anti-patterns, and a five-stage maturity ladder.
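The speedup formula quoted above is easy to evaluate directly. The sketch below is only a calculator for that expression; the function name `speculative_speedup` is hypothetical, and the model assumes a constant per-token acceptance rate α, which real workloads only approximate.

```python
def speculative_speedup(alpha: float, k: int, c: float) -> float:
    """Evaluate (1 - alpha^(K+1)) / ((1 - alpha) * (K*c + 1)).

    alpha: per-token acceptance rate of the draft (0 <= alpha < 1)
    k:     speculation length (draft tokens proposed per step)
    c:     draft/target cost ratio for one forward pass
    """
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must be in [0, 1)")
    # Expected tokens produced per verification step.
    expected_tokens = (1 - alpha ** (k + 1)) / (1 - alpha)
    # Cost of one step in units of a target forward pass: K drafts plus one verify.
    cost_per_step = k * c + 1
    return expected_tokens / cost_per_step


print(round(speculative_speedup(0.8, 5, 0.08), 2))  # 2.64
print(round(speculative_speedup(0.9, 5, 0.08), 2))  # 3.35
```

The second call shows the sensitivity to acceptance rate: with K and c held fixed, moving α from 0.8 to 0.9 takes the modelled speedup from about 2.6× to above 3×.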

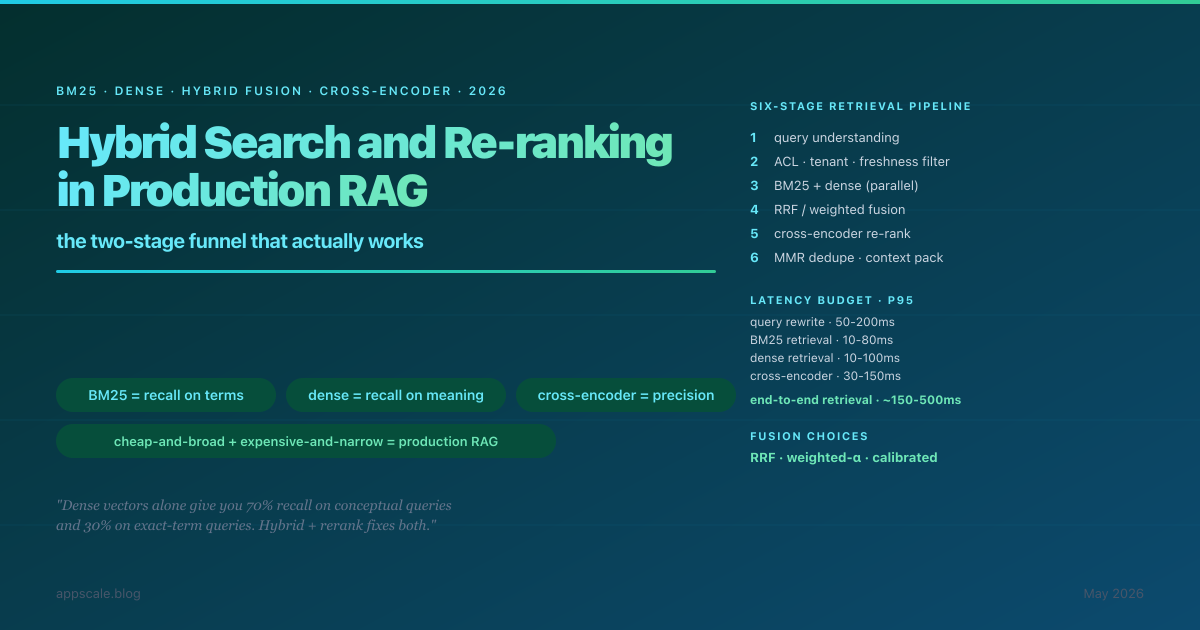

Hybrid Search and Re-ranking in Production RAG: BM25, Dense Vectors, Cross-encoders, and Everything In Between (2026)

The single biggest reason production RAG systems return confident wrong answers is not the LLM, the prompt, or the chunking — it is the retriever returning the wrong documents into the top-k. Dense-vector-only retrieval gives 70% recall on conceptual queries and 30% on exact-term queries — and a better embedding model does not fix it because the failure mode is structural. The architecture the field has converged on in 2026: sparse retriever (BM25 or SPLADE) + dense retriever (bi-encoder embeddings) running in parallel, fused via RRF or weighted-α, cross-encoder re-ranker over the top-50 candidates, MMR diversification, ACL/freshness pre-filter, query understanding in front. This article is the deep-dive on what each primitive is doing, why each fails, the latency budget, eight anti-patterns, and the five-stage maturity ladder from single-retriever to calibrated-fusion-with-online-feedback.
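For the fusion step mentioned above, reciprocal rank fusion (RRF) can be sketched as follows. This is an illustrative version, not the article's code: the function name and document IDs are hypothetical, and `k=60` is the conventional smoothing constant, assumed here rather than taken from the source.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of doc IDs: score(d) = sum over lists of 1 / (k + rank)."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


bm25_top = ["a", "b", "c"]    # hypothetical sparse-retriever output
dense_top = ["c", "a", "d"]   # hypothetical dense-retriever output
fused = reciprocal_rank_fusion([bm25_top, dense_top])
print(fused)  # ['a', 'c', 'b', 'd']
```

RRF uses only ranks, so it needs no score normalisation between BM25 and cosine similarity. Documents that appear in both lists ("a" and "c") rise above those found by a single retriever, and the fused list is what a cross-encoder re-ranker would then receive.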

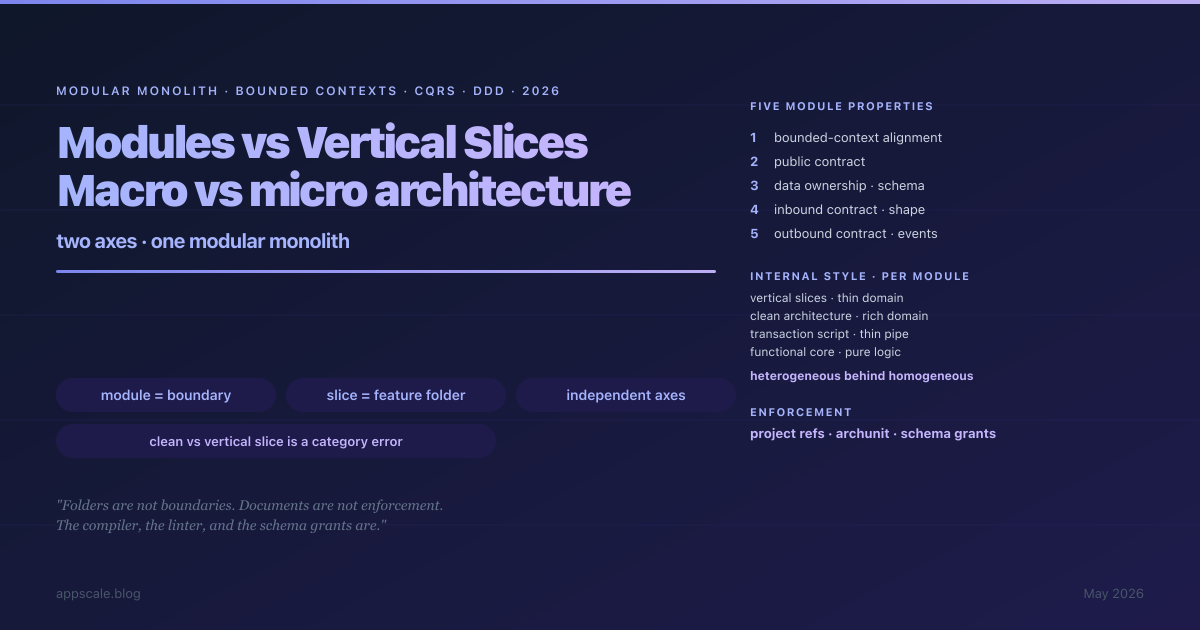

Modules vs Vertical Slices: Macro vs Micro Architecture in the Modular Monolith (2026)

The argument "Clean Architecture vs Vertical Slice Architecture" is a category error — the two operate on different axes. A module is a macro-architectural decision about bounded contexts, public contracts, data ownership and communication style. A vertical slice is a micro-architectural decision about feature folder organisation inside a module. The killer property of a real modular monolith is that the two axes are independent: heterogeneous internals (Clean Architecture in one module, vertical slices in another, transaction scripts in a third) live safely behind homogeneous module boundaries enforced by project references, ArchUnit rules, and schema grants. This article is the technical deep-dive: the five enforceable module properties, the four slice properties, the cross-module communication spectrum from in-process method calls to outbox-backed event buses, the per-module internal-style decision matrix, multi-layered boundary enforcement, eight anti-patterns, and the five-stage maturity ladder from layered monolith to deliberate modular-monolith target state.

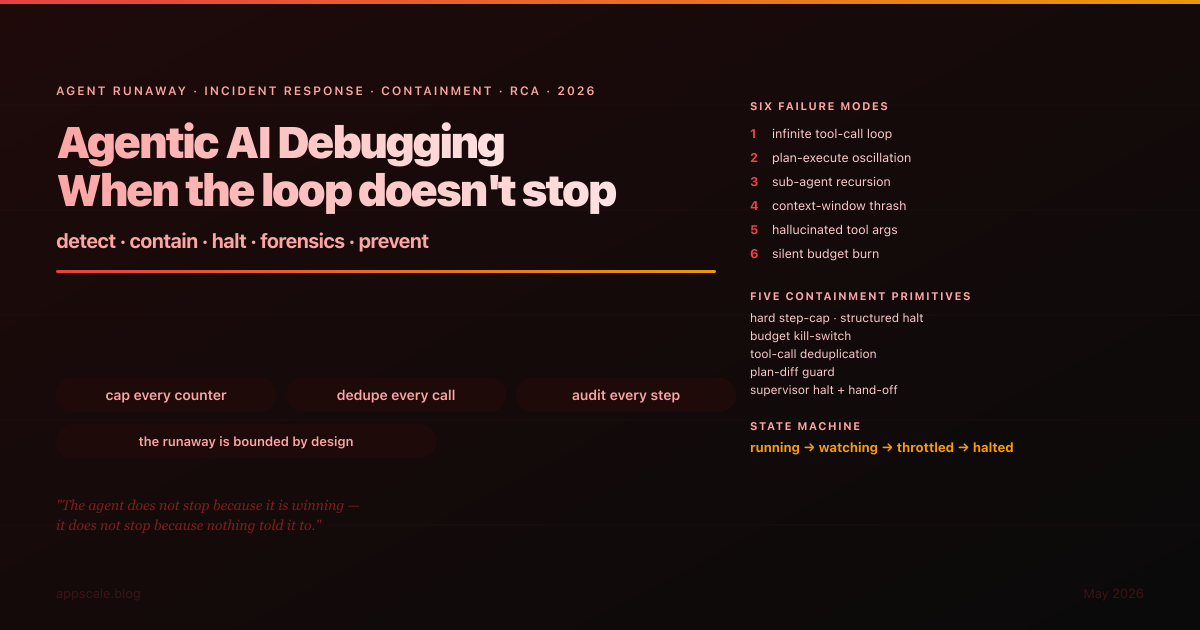

Agentic AI Debugging: When the Loop Doesn't Stop (2026)

The single most expensive failure mode of an agentic system is not the agent producing the wrong answer; it is the agent producing no answer while burning through tool calls, context, and provider budget in a tight loop the runtime did not detect. This article covers six failure modes (infinite tool-call loop, plan-execute oscillation, sub-agent recursion, context thrash, hallucinated arguments, silent budget burn), six detection signals (step cap, semantic similarity, cost slope, identical call, delegation depth, context utilisation), five containment primitives (hard step-cap, budget kill-switch, tool-call dedupe, plan-diff guard, supervisor halt), a state machine with running/watching/throttled/halted states, a seven-field RCA template, 8 anti-patterns, and a 5-stage maturity ladder. This is how runaway loops become bounded incidents.
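Two of the containment primitives listed above, the hard step-cap and tool-call dedupe, can be combined in a small guard that the agent runtime consults before each tool call. This is a minimal sketch under stated assumptions: the class name `LoopGuard`, the thresholds, and the returned status strings are all hypothetical, and arguments are assumed to be JSON-serialisable.

```python
import json


class LoopGuard:
    """Halts an agent run on a hard step cap or on repeated identical tool calls."""

    def __init__(self, max_steps=20, max_repeats=2):
        self.max_steps = max_steps      # hard cap on tool calls per run
        self.max_repeats = max_repeats  # allowed occurrences of one identical call
        self.steps = 0
        self.seen = {}

    def check(self, tool, args):
        """Return 'ok', or a halt reason the runtime should act on."""
        self.steps += 1
        if self.steps > self.max_steps:
            return "halt:step_cap"
        # Canonicalise arguments so key order does not hide a duplicate call.
        key = (tool, json.dumps(args, sort_keys=True))
        self.seen[key] = self.seen.get(key, 0) + 1
        if self.seen[key] > self.max_repeats:
            return "halt:identical_call"
        return "ok"


guard = LoopGuard(max_steps=5, max_repeats=2)
results = [guard.check("search", {"q": "status"}) for _ in range(3)]
print(results)  # ['ok', 'ok', 'halt:identical_call']
```

Exact-match dedupe catches only the simplest loop shape. The semantic-similarity and cost-slope signals named in the blurb exist for loops where the arguments drift slightly on each iteration.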

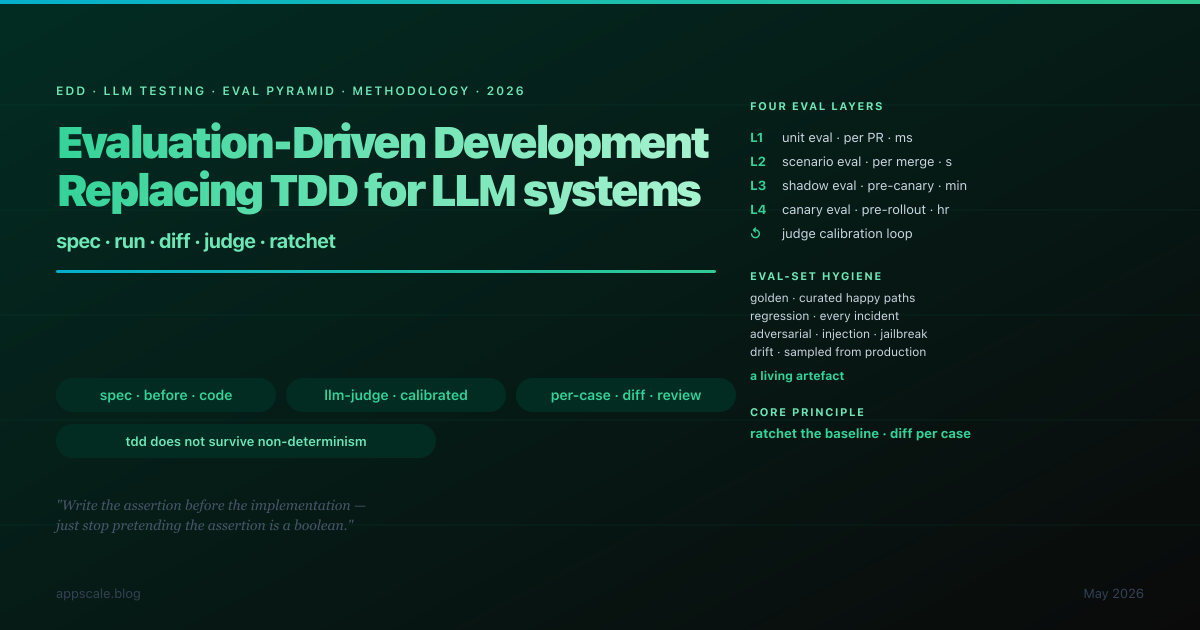

Evaluation-Driven Development: Replacing TDD for LLM Systems (2026)

Test-driven development does not survive the transition to LLM systems — the assertion cannot be strict-equality, the correct output is a distribution, the red-green-refactor loop has no green, and the assertion itself is fallible. Evaluation-driven development is the discipline that replaces TDD: the same shape of "write the assertion before the implementation, ratchet it as the implementation improves, gate every change on the verdict", but with eval sets instead of unit tests, distribution verdicts instead of boolean pass-fail, calibrated LLM judges instead of strict equality, and a ratcheted baseline instead of a fixed expected output. This article is the methodology, the eval-set hygiene (golden, regression, adversarial, drift), the four eval layers (unit, scenario, shadow, canary), the LLM-as-judge calibration practice, the CI integration, 8 anti-patterns, and the 5-stage maturity ladder.

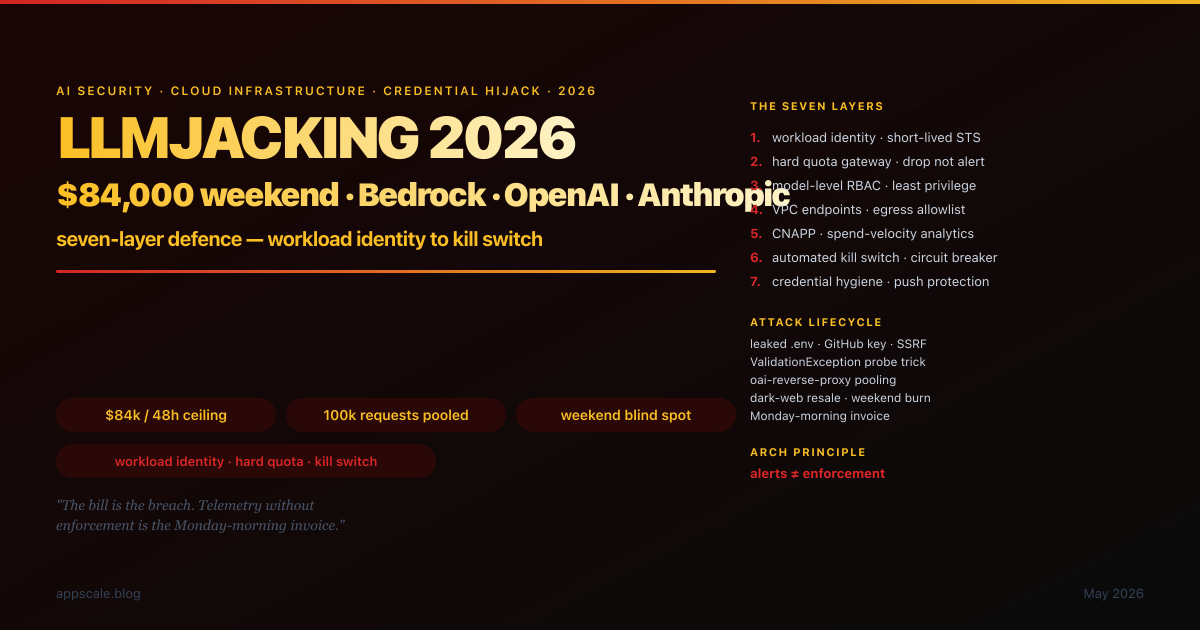

LLMjacking 2026: How Attackers Hijack Your Bedrock and OpenAI Quota — and the Seven-Layer Defence That Stops the $84,000 Weekend

A finance team walked into the office on a Monday morning in early 2025 and found an $84,000 invoice for the previous 48 hours. The application had not been defaced; no customer data had been exfiltrated; the dashboards were green. The bill was the breach. This is LLMjacking — the unauthorised hijack of cloud-hosted LLM resources for compute monetisation, the AI-security failure mode that does not look like a security incident until the invoice arrives. The seven-layer defence-in-depth stack is the architectural response: workload identity replacing static keys, hard quota at the gateway, model-level RBAC, network isolation, behavioural analytics, automated kill switch, and continuous credential hygiene. AWS-native reference architecture with Azure and GCP equivalents, attack-lifecycle map from initial access to weekend burn, eight anti-patterns retired, five-stage maturity ladder, and the Monday-morning 24h / 7d / 30d action checklist that materially reduces exposure by Friday.

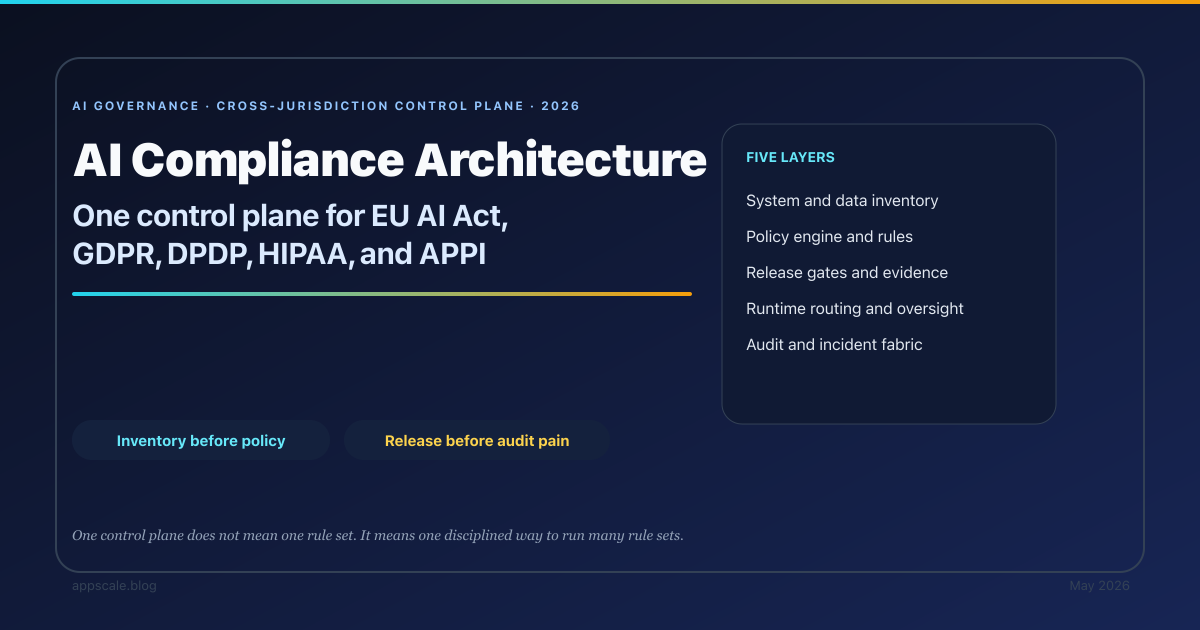

AI Compliance Architecture: One Control Plane for EU AI Act, GDPR, DPDP, HIPAA, and APPI (2026)

A reference control-plane architecture for AI systems that have to satisfy multiple regulatory regimes at once. Covers inventory, policy, release gates, runtime controls, and the evidence fabric that connects them.

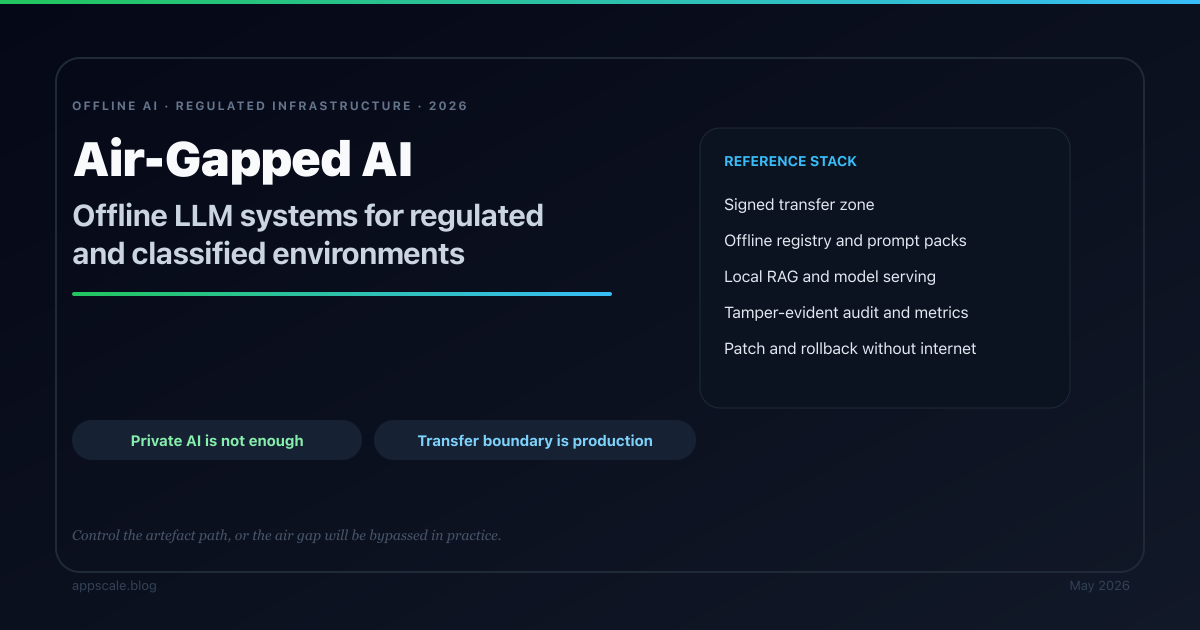

Air-Gapped AI Architecture: Offline LLM Systems for Regulated and Classified Environments (2026)

A reference architecture for offline LLM systems in air-gapped environments. Covers signed update flows, local registries, offline retrieval, observability, security controls, and the real cost profile of air-gapped AI.

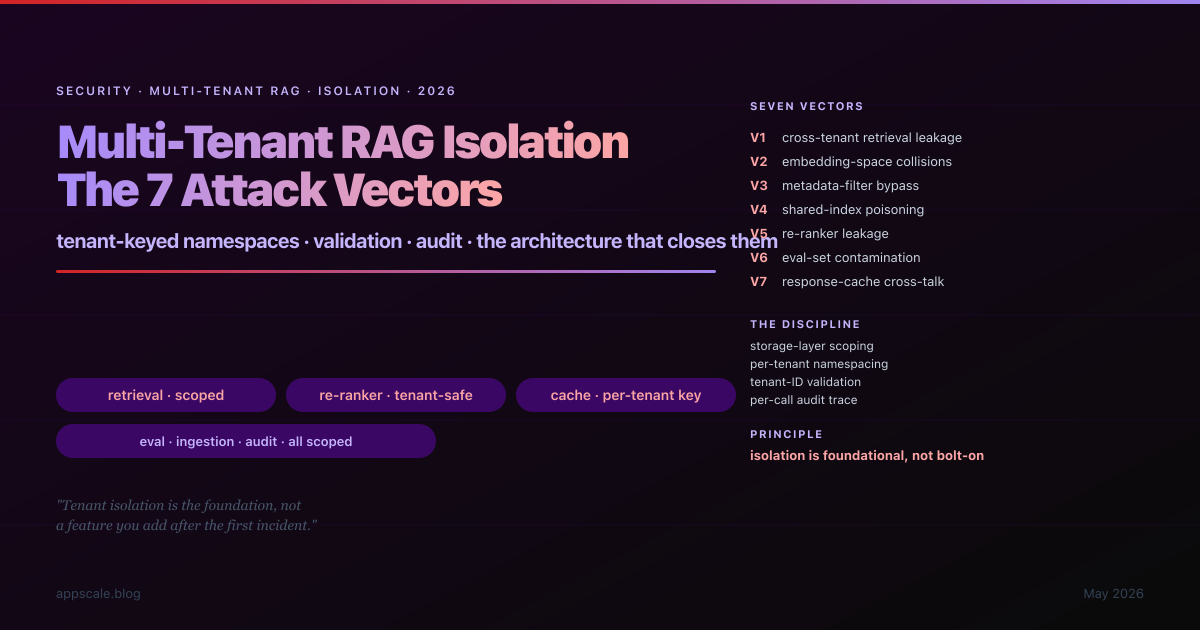

Multi-Tenant RAG Isolation: The 7 Attack Vectors and the Architecture That Closes Them (2026)

Multi-tenant RAG has a security model that does not exist in single-tenant RAG and is not covered by generic SaaS multi-tenant discipline. The 2024–2025 incident record now has enough cross-tenant RAG leakage cases to classify the failure modes, and the result is a seven-vector taxonomy: cross-tenant retrieval leakage, embedding-space collisions, metadata-filter bypass, shared-index poisoning, re-ranker leakage, eval-set contamination, response-cache cross-talk. This article is the seven vectors with their mechanism and architectural defence, the per-tenant namespace pattern that closes them at every data surface, the eight anti-patterns that produce the bad outcomes, and the maturity ladder from Stage 0 (single shared everything) to Stage 4 (continuously-validated isolation).

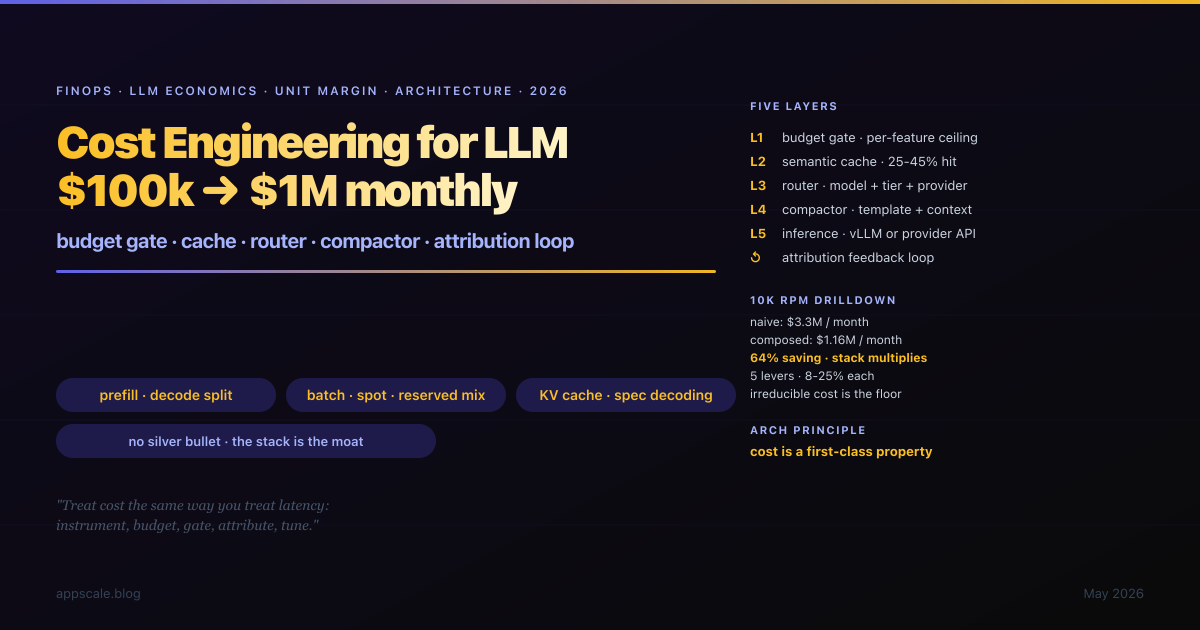

Cost Engineering for LLM Features: From $100k to $1M Monthly Spend (2026)

The $100k to $1M monthly LLM-spend transition is the architecturally serious crossing in the life of an LLM product. The teams that handle it well treat cost as a first-class architectural property — instrumented, budgeted, gated, attributed, and tuned — and they build the five-layer stack of budget gate, semantic cache, dynamic router, prompt compactor, and inference layer with an attribution feedback loop wrapped around it. This article is the architecture, the order to build it in, the 10k-RPM unit-economics drill-down that produces a 64% reduction through composed savings, the unglamorous levers (prefill/decode separation, KV-cache reuse, speculative decoding, batch endpoints, output-length discipline), the spot/reserved/on-demand procurement mix, 8 anti-patterns that produce the bad spend curve, and the 5-stage maturity ladder.

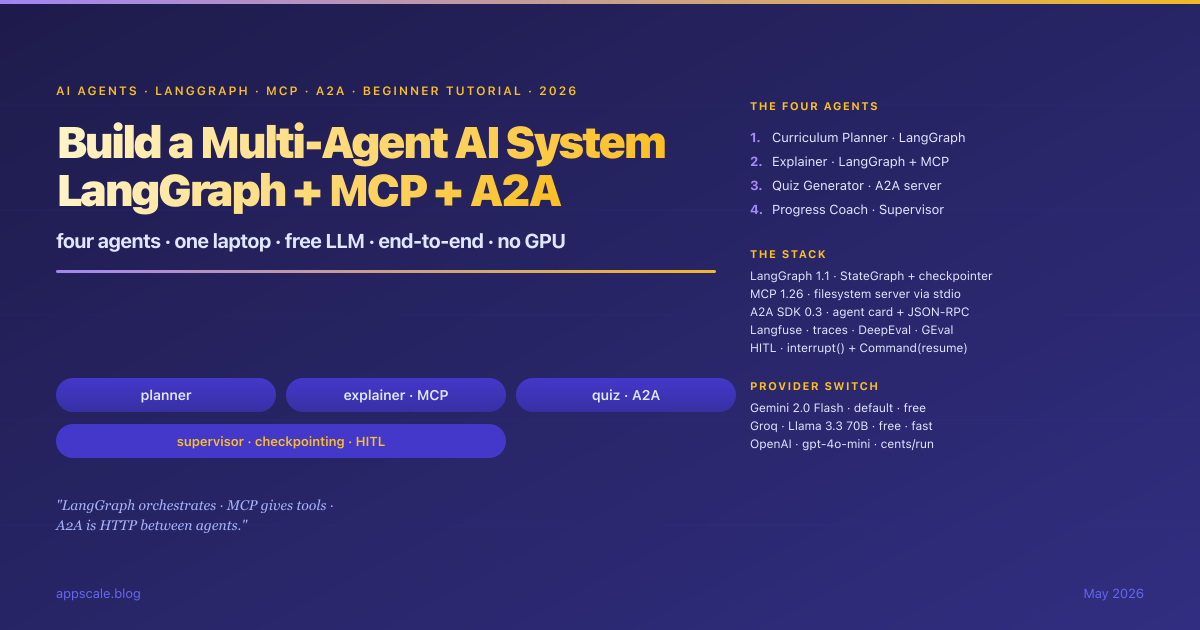

Build a Multi-Agent AI System with LangGraph + MCP + A2A: Beginner-Friendly End-to-End Tutorial (2026)

A full beginner-friendly walk-through of building a four-agent AI system on a laptop with no GPU and a free LLM. We use LangGraph for orchestration (state, nodes, edges, conditional edges, checkpointing, human-in-the-loop with interrupt), MCP for tool access (the official filesystem server via stdio), and A2A for cross-process agent calls (agent card at /.well-known/agent-card.json, JSON-RPC message lifecycle). The four agents form a Learning Accelerator — a Curriculum Planner, an Explainer that reads local notes via MCP, a Quiz Generator exposed as an A2A server, and a Progress Coach supervisor that orchestrates the rest with SQLite checkpointing. Provider switch covers Gemini 2.0 Flash (free, default), Groq (free, fast) and OpenAI (cents per run). Langfuse for traces, DeepEval for LLM-as-judge regression tests. Every file is shown in full inline; no companion repo needed.

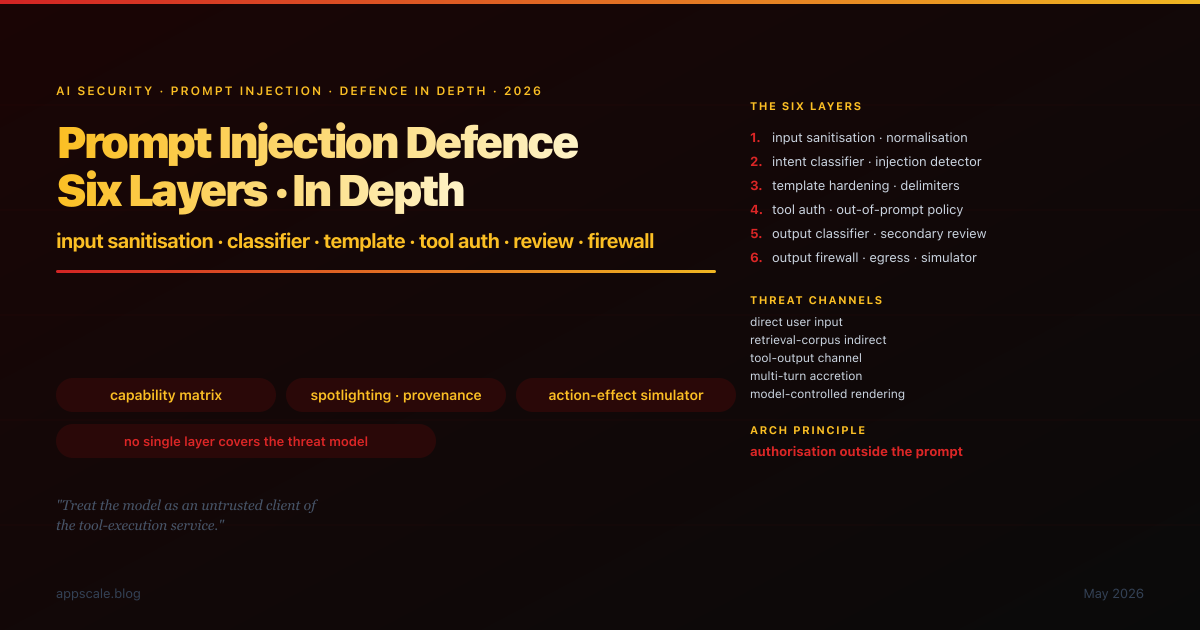

Prompt Injection Defence in Depth (2026): Six Layers from Input Sanitisation to Output Firewall

Prompt injection in 2026 is no longer a research curiosity; it is the day-one architectural assumption. The six-layer defence-in-depth stack is the engineering response: input sanitisation and normalisation, intent classifier and injection detector, prompt-template hardening with delimiters and role separation, tool-use authorisation policy outside the prompt, output classifier and secondary review LLM, output firewall for egress filtering and action-effect simulation. This article walks each layer with its threat model, engineering surface, and operational discipline; the build-order rationale; the composition with category-aware guardrails, agent circuit breakers, observability, and incident response. 8 anti-patterns retired, 5-stage maturity ladder, and the honest summary of where the field sits in early 2026.

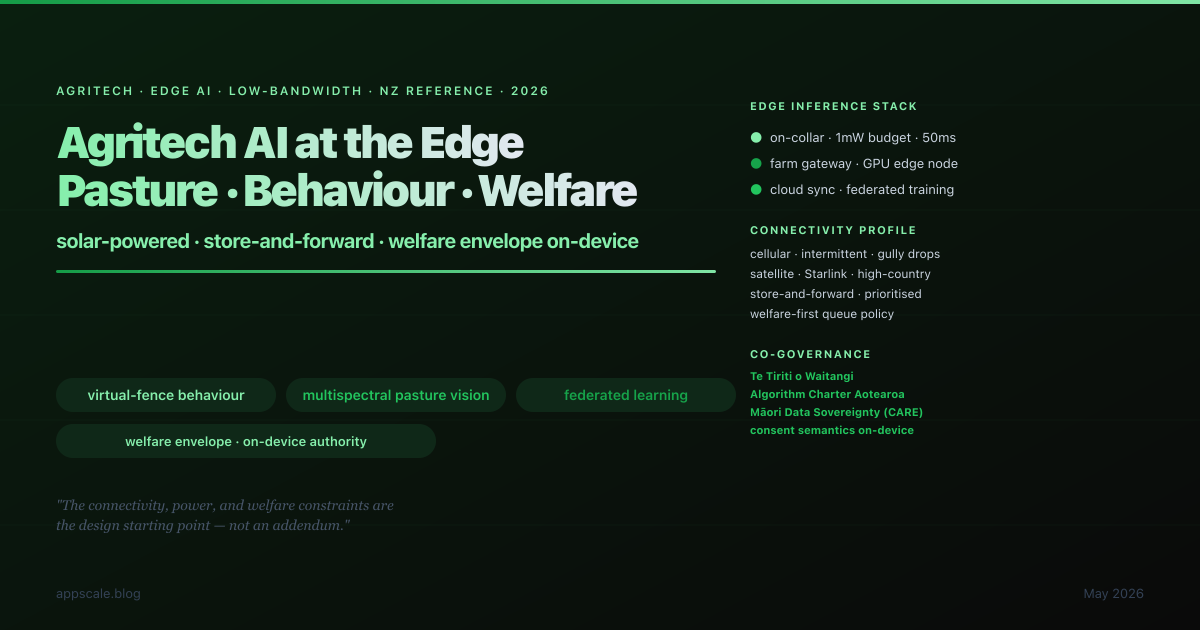

Agritech AI Architecture: Pasture Vision, Livestock Behaviour Models, and Low-Bandwidth Edge (NZ Reference, 2026)

New Zealand agritech in 2026 lands the AI architecture conversation hardest on the constraints mainstream cloud-AI tutorials assume away: solar-powered devices on the cow's collar, intermittent cellular and satellite connectivity, the welfare envelope that takes precedence over production, and the data co-governance arrangement under the Algorithm Charter and Te Tiriti o Waitangi. This article walks the engineering deliverables for an agritech AI architecture in 2026: edge-first inference with welfare envelope on-device, multispectral pasture-vision with fixed-tower-drone-satellite fusion, behaviour-model training with the labelling discipline as the value-creating activity, store-and-forward synchronisation with explicit conflict resolution, federated learning across farms, Te Tiriti and Algorithm Charter compliance engineered into the architecture. NZ-anchored to Halter, Fonterra, Gallagher, LIC, AgResearch and globally portable. 8 anti-patterns, 5-stage maturity ladder.

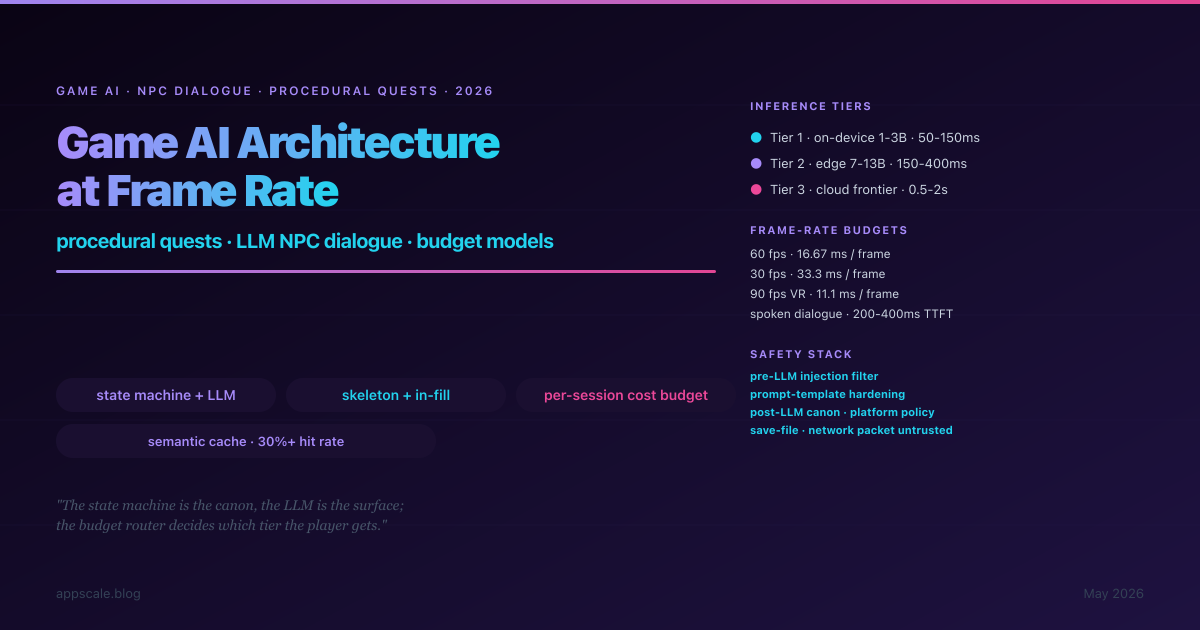

Game AI Architecture: Procedural Quest Systems and LLM-Driven NPC Dialogue (Budget Models, 2026)

Game AI in 2026 collides hardest with frame-rate budgets, session-cost economics, and the modding community's ability to break any system without adversarial assumptions. This article walks the engineering deliverables for an LLM-driven game AI architecture in 2026: tier-routed inference (on-device 1-3B small model, edge 7-13B mid-size, cloud frontier) with budget-aware routing; state-machine-augmented dialogue with LLM-generated surface variation; procedural quest skeletons with LLM in-fill within writer-defined templates; multi-layer content-safety and prompt-injection defence; per-session cost budget as engineering discipline; semantic cache as first-class architectural element. PL-anchored to the Warsaw/Krakow game-dev cluster (CD Projekt Red, Techland, 11 bit, People Can Fly, Bloober Team) and globally portable. 8 anti-patterns, 5-stage maturity ladder.

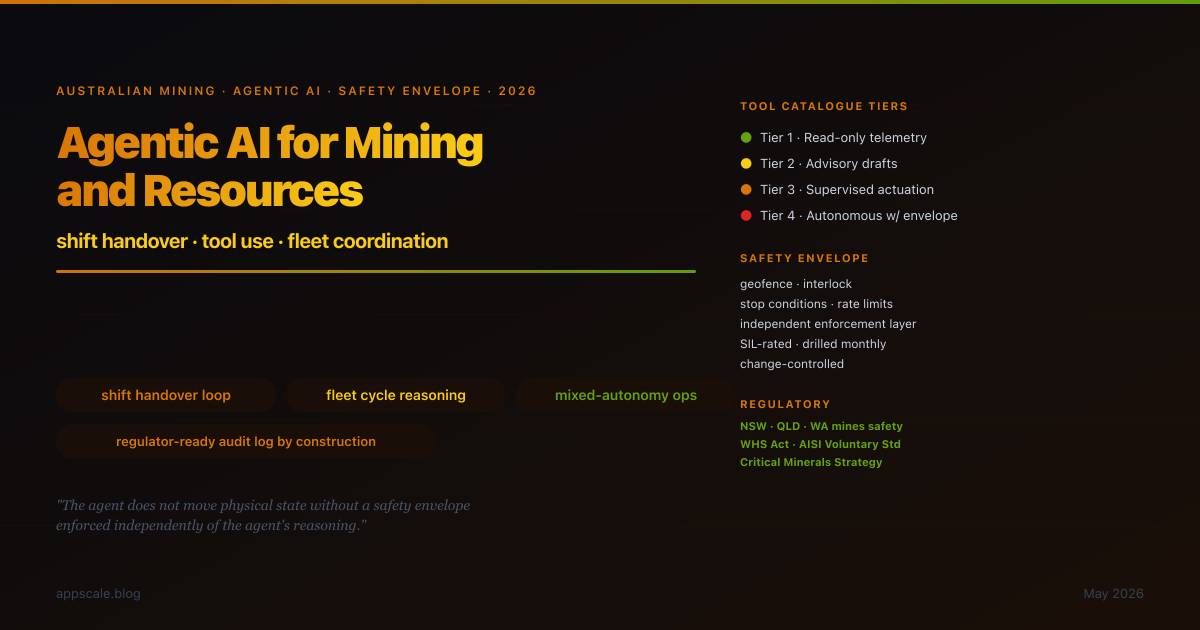

Agentic AI for Mining and Resources: Shift Handover, Tool Use, and Fleet Coordination (2026)

Mining and resources is a distinct architectural setting for agentic AI: bounded autonomy under safety envelopes, partial-disconnection resilience, regulator-ready operational record by construction. This article walks the engineering deliverables for a mining-and-resources agentic AI architecture in 2026: tool catalogue tiered by safety envelope (read-only / advisory / supervised actuation / autonomous actuation); shift-handover loop integrating voice, paper, and structured data into the agent's working memory; fleet-coordination layer reasoning about cycle time, mixed-autonomy interaction, and stop-condition response; safety envelope as an explicit, inspectable, enforced, and exercised artefact; regulatory composition with NSW/Queensland/WA mines safety regimes, the AISI Voluntary Standard, and the Critical Minerals Strategy. AU-anchored, globally portable to Chile/Canada/SA/Indonesia/Brazil mining and to construction/heavy-haulage/ports/rail. 8 anti-patterns, 5-stage maturity ladder, composition with AISI eval pipeline, agent memory, human escalation, incident response, and agent-level circuit breakers.

AI Incident Response Runbook: RCA for LLM Failures (2026)

LLM systems fail in ways the SRE runbook of the last decade does not anticipate. This article walks the engineering deliverables for an LLM-aware incident response architecture in 2026: severity classification adapted to LLM failure surfaces; detection signal stack (eval drift, guardrail trips, cost spikes, latency p99, hallucination rate, user reports); six containment primitives operable from a single console (model pin, prompt rollback, retrieval quarantine, canary halt, traffic shape, kill-switch); RCA template with LLM failure classes (hallucination, prompt injection, model regression, retrieval poisoning, vendor outage, jailbreak, context-window leak, agentic loop) and LLM-specific action item types; blameless culture extended to model contributions; on-call rota with primary, secondary, incident commander, and subject-matter dimensions. 8 anti-patterns, 5-stage maturity ladder, composition with AI observability, prompt versioning, human escalation, and AI-native CI/CD.

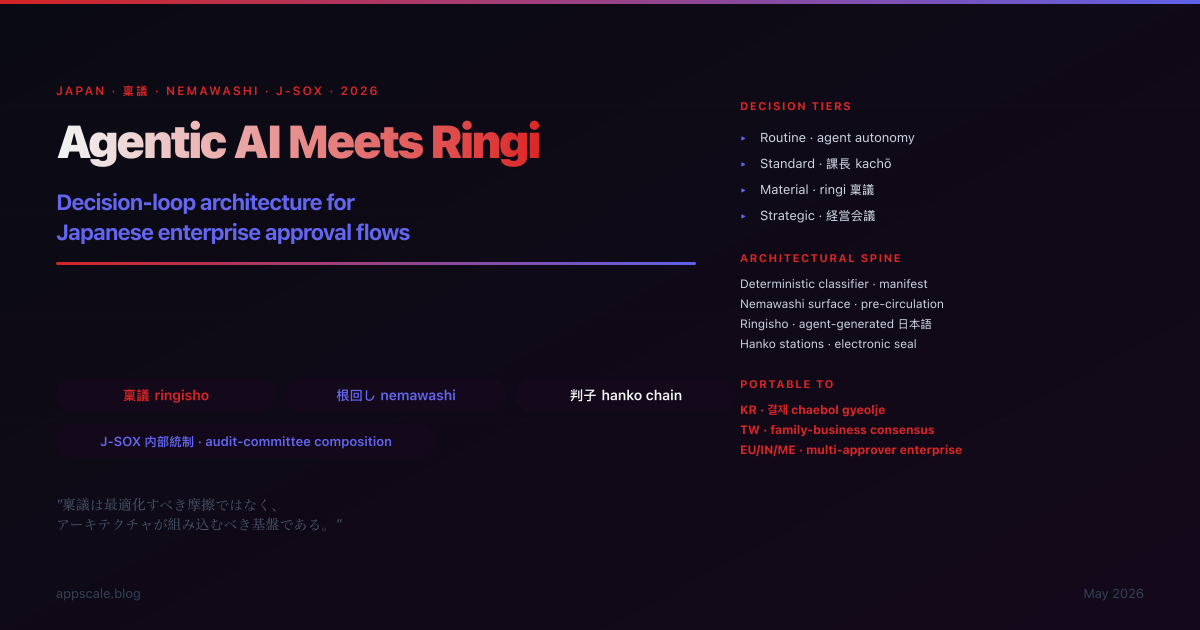

Agentic AI Meets Ringi: Decision Loop Architecture for Japanese Enterprise Approval Flows (2026)

The Japanese ringi seido is not a workflow to optimise around — it is the consensus-decision substrate on which the firm's J-SOX internal-control framework rests, and an agentic AI deployed without ringi-aware architecture fails the audit committee's review on first sample. This article walks the engineering deliverables: deterministic decision classifier reading the internal-control manifest; structured nemawashi surface for pre-circulation; agent-generated Japanese ringisho from internal-control-approved templates; electronic hanko circulation integrated with the firm's approval system; chain-of-approval execution gate; decision-narrative audit trail composed with J-SOX retention. 8 anti-patterns, 5-stage maturity ladder, portable to Korean chaebol gyeolje, Taiwanese family-business consensus, and broader multi-approver enterprise decision cultures.

Stay Ahead of the Curve

Weekly in-depth analysis of AI systems, cloud architecture, distributed systems, and engineering leadership. Join more than 5,000 engineers.