Engineering Insights

Deep-dives on AI systems, cloud architecture, distributed systems, and engineering leadership.

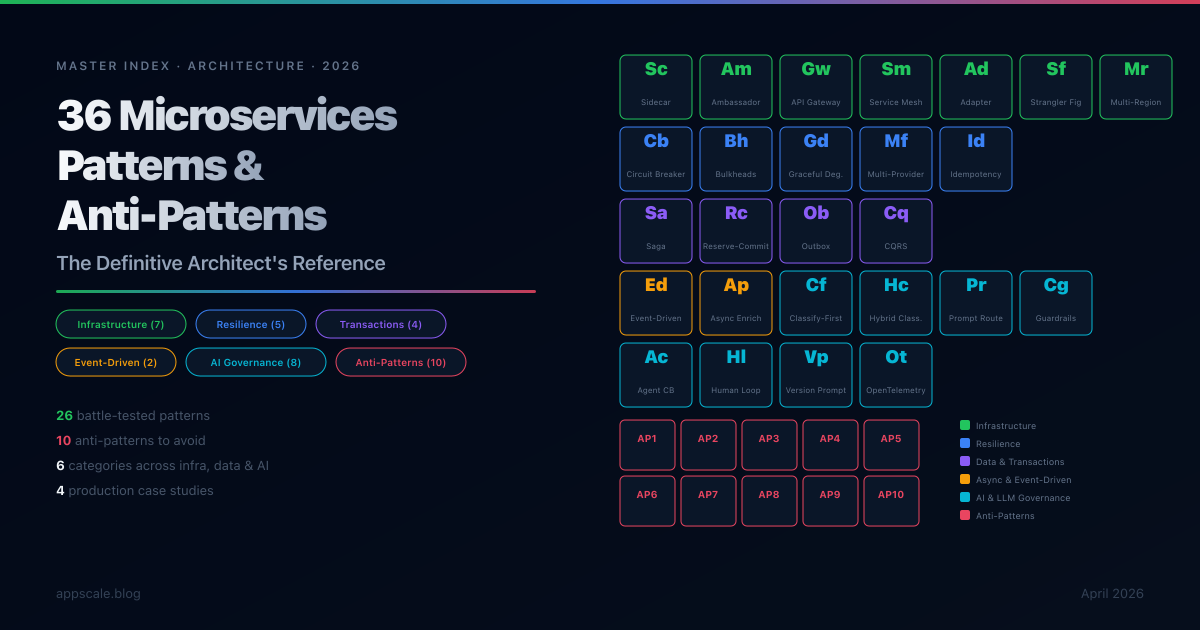

36 Microservices Patterns & Anti-Patterns: The Definitive Architect's Reference (2026)

A comprehensive master index of 26 battle-tested microservices patterns and 10 anti-patterns across infrastructure, resilience, data consistency, async communication, and AI governance — with deep-dive links, cross-references, and a quick-reference table.

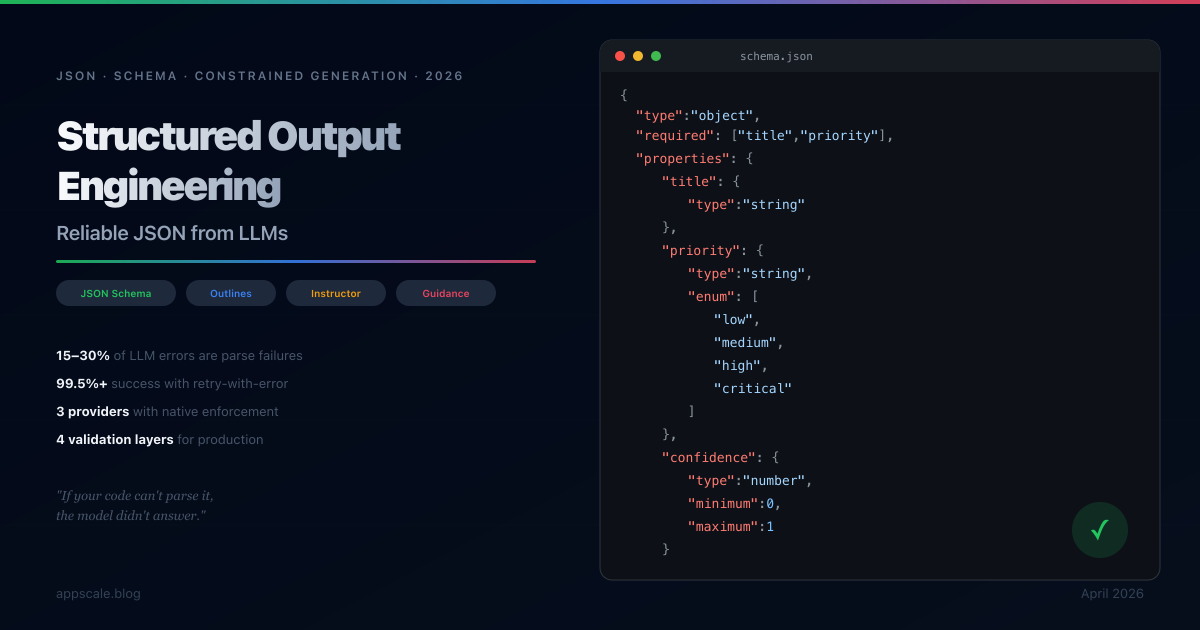

Structured Output Engineering: Getting Reliable JSON from LLMs (2026)

The most common failure in production LLM systems is unparseable output. This guide covers every technique for getting reliable JSON from LLMs — provider-native enforcement (OpenAI, Anthropic, Google), open-source constrained generation (Outlines, Instructor, Guidance), production validation patterns, and prompt engineering strategies.

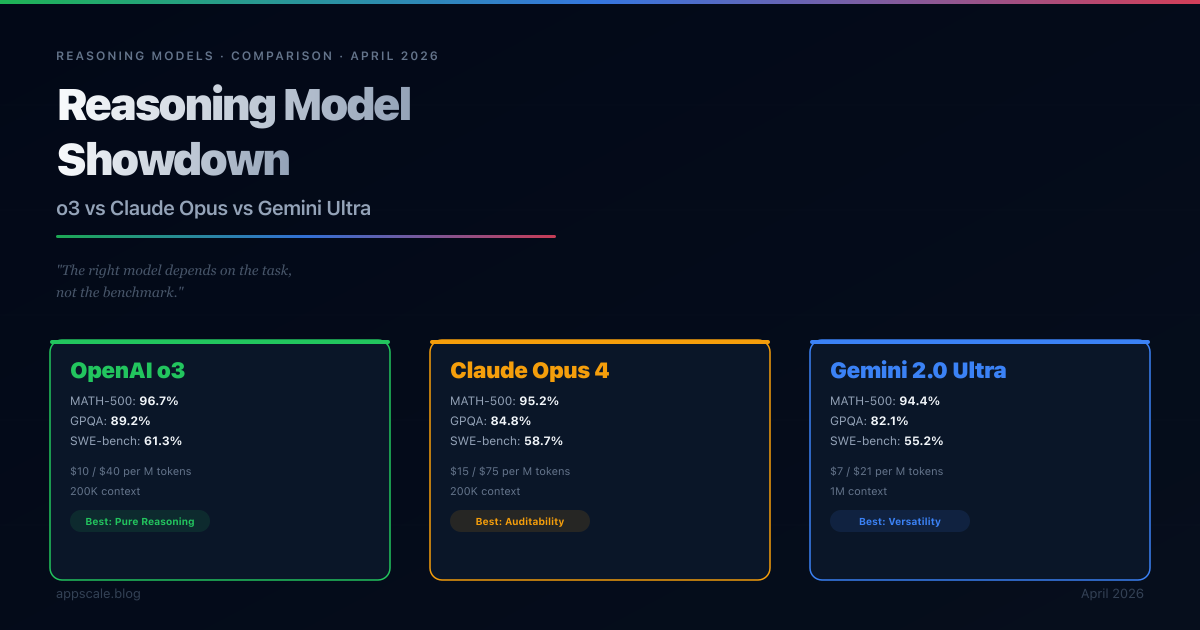

OpenAI o3 vs Claude Opus vs Gemini 2.0 Ultra: Reasoning Model Showdown (2026)

A direct, evidence-based comparison of OpenAI o3, Anthropic Claude Opus 4, and Google Gemini 2.0 Ultra — the three dominant reasoning models of April 2026 — covering benchmarks, pricing, latency, architecture differences, and a practical decision framework for enterprise deployment.

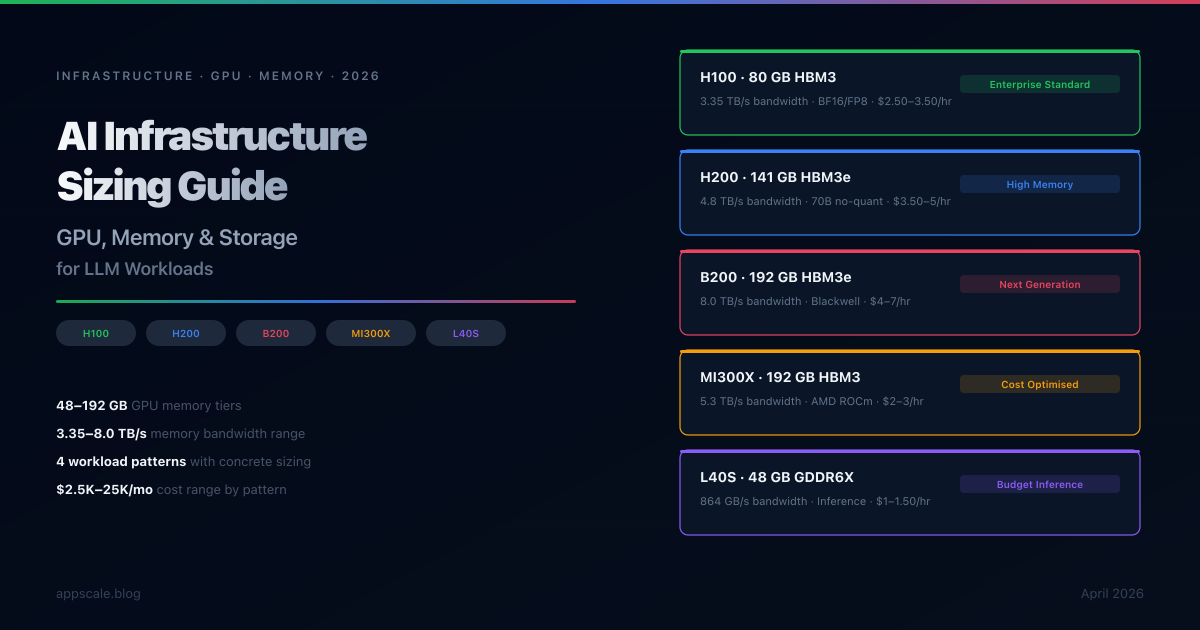

AI Infrastructure Sizing: GPU, Memory, and Storage for LLM Workloads (2026)

Concrete sizing guidance for LLM workloads in 2026 — covering GPU selection (H100, H200, B200, MI300X, L40S), memory architecture, storage tiers, network requirements, and cost-optimised infrastructure patterns for inference, training, and batch processing.

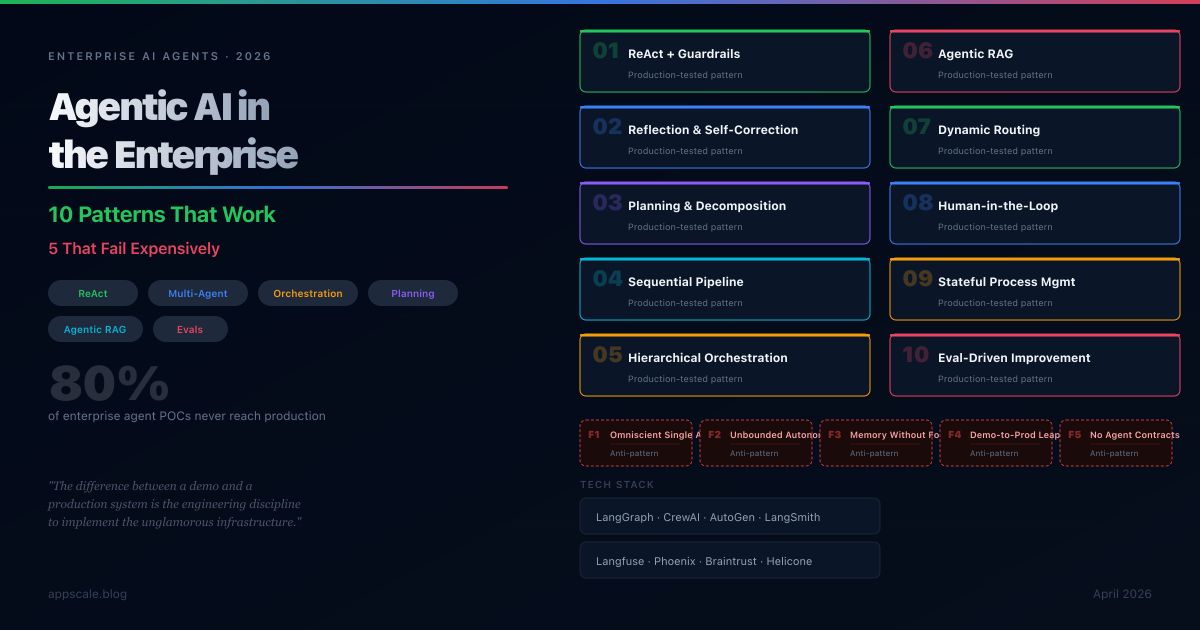

Agentic AI in the Enterprise: 10 Patterns That Work (and 5 That Fail Expensively)

Enterprise AI agents fail 80% of the time in production. Learn the 10 agentic AI patterns that actually work, 5 failure patterns to avoid, and a production readiness checklist for CTOs and architects.

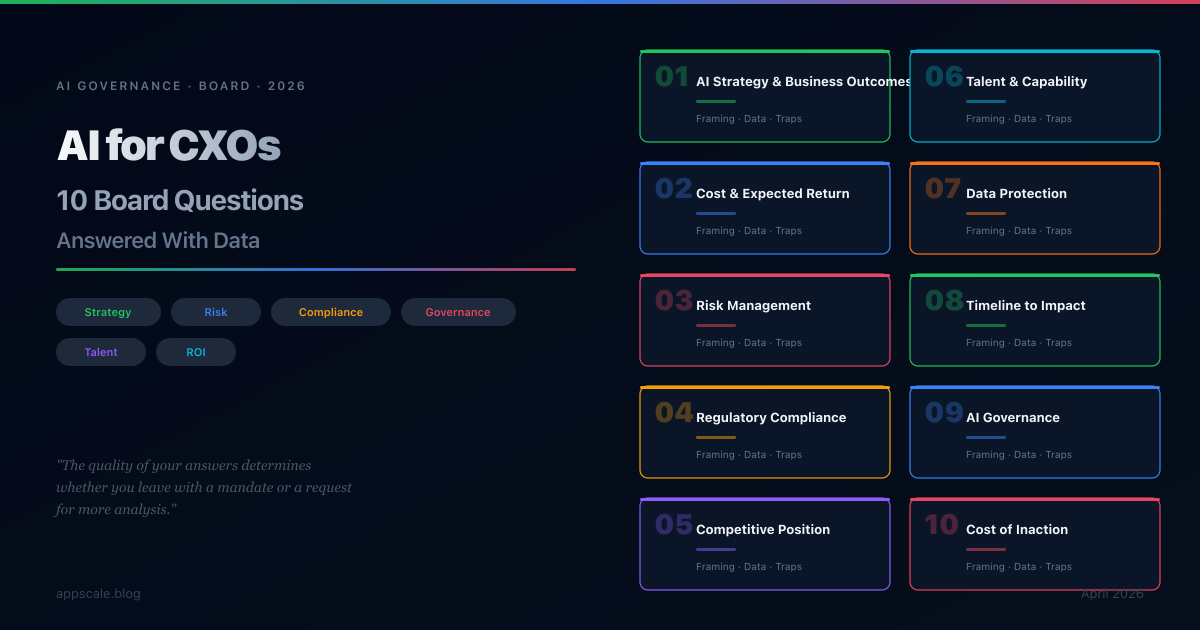

AI for CXOs: The 10 Questions Your Board Will Ask About AI — And How to Answer Them (2026)

Boards are no longer asking whether AI matters — they are asking what it means for the company financially, operationally, and strategically. This article covers the 10 questions boards most consistently ask about AI, with the exact framing, data points, and recommended answers that build credibility in the boardroom.

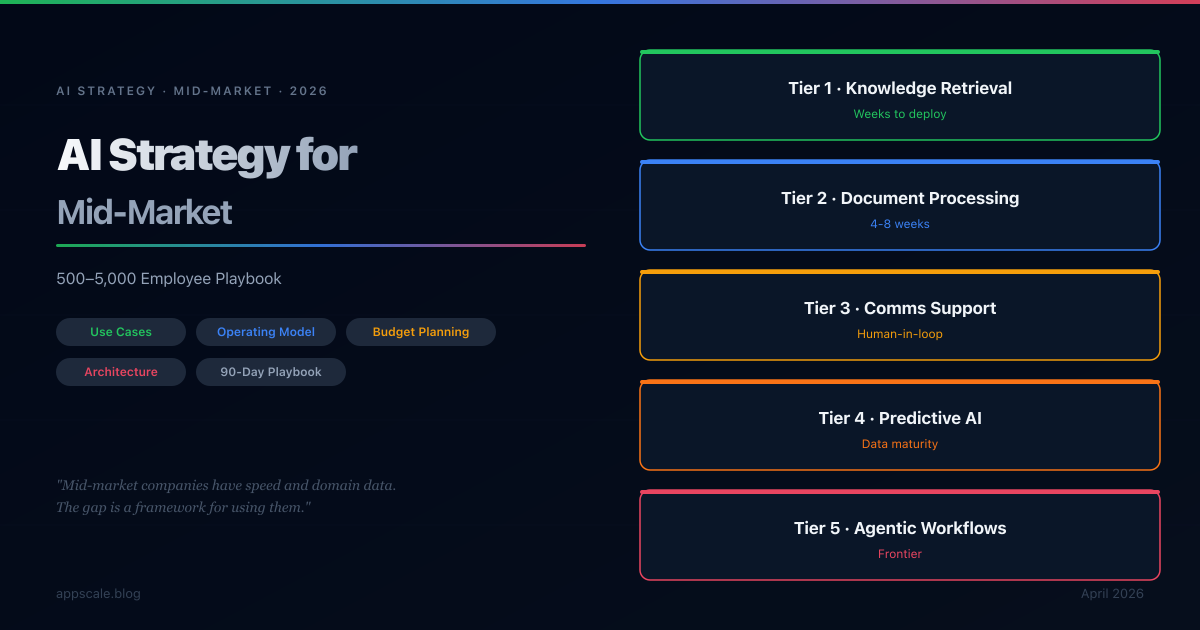

AI Strategy for Mid-Market: How 500–5,000 Employee Companies Should Approach AI (2026)

Mid-market companies have structural AI advantages that large enterprises lack — decision speed, domain data depth, and workflow access. The challenge is not technology availability; it is having a framework for use case prioritisation, operating model design, and architectural choices that fit real budget and talent constraints. This guide covers all of it.

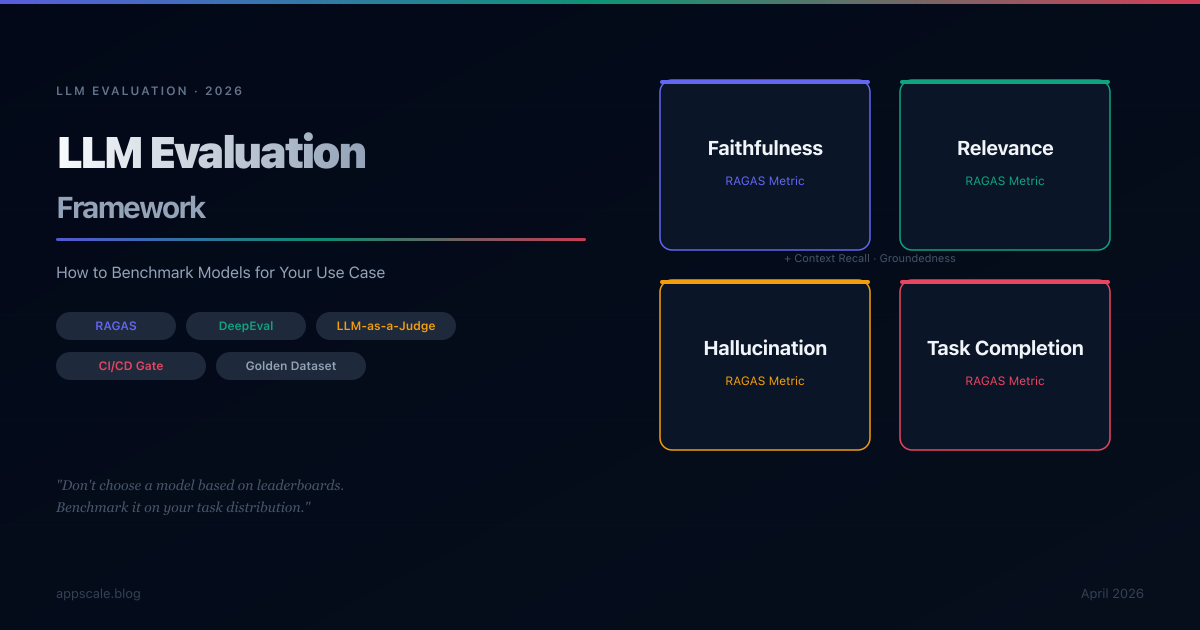

LLM Evaluation Framework: How to Benchmark Models for Your Use Case (2026)

Public benchmarks tell you which LLM is best in general. They do not tell you which model is best for your RAG pipeline, your agentic system, or your summarisation workflow. This guide covers how to build a golden dataset, measure faithfulness and relevance, automate evaluation in CI/CD, and make model selection decisions based on real task performance data.

Knowledge Graphs + LLMs: The Architecture That Beats Pure RAG

Pure RAG retrieves similar text. Knowledge graphs retrieve relationships — and in enterprise knowledge, the relationships are usually what matters. This article explains GraphRAG architecture: how to combine a knowledge graph with vector search, build an entity extraction pipeline, implement Text-to-Cypher query generation, and choose when GraphRAG beats pure RAG for multi-hop reasoning, auditability, and structured fact retrieval.

Langfuse vs LangSmith vs Braintrust vs Helicone: The 2026 Comparison Guide

Langfuse, LangSmith, Braintrust, and Helicone each solve a different primary problem in LLM observability. This 2026 comparison covers integration patterns, pricing at scale, self-hosting trade-offs, evaluation depth, and a CTO decision framework that maps each tool to a specific architectural scenario.

AI Observability in 2026: Monitoring LLMs with LangSmith, Langfuse, Arize, and W&B

In 2026, LLM observability has evolved from trace logging into Agentic Engineering Platforms. This article compares LangSmith, Langfuse, Arize Phoenix, and W&B Weave — covering instrumentation patterns, evaluation pipelines, Semantic Drift Detection, and a CTO decision guide mapped to four production scenarios.

Semantic Search vs Keyword Search: Architecture and Implementation

Semantic search and keyword search answer fundamentally different questions — one matches vocabulary, the other matches meaning. This article covers the architecture of both approaches, their failure modes, the hybrid architecture that most production systems use, and the full implementation pipeline: document chunking, embedding service design, vector store selection, query pipeline, reranking, and evaluation metrics.

AI Transformation Roadmap: From POC to Production in 6 Months

Most enterprise AI initiatives stall between proof of concept and production — not because the technology fails, but because the surrounding architecture, governance, and data infrastructure were never designed for production scale. This article provides a phased six-month roadmap covering data pipeline architecture, security design, model serving, retrieval systems, human oversight, cost controls, and executive monitoring — with the phase gates and failure mode patterns that determine whether an AI programme delivers measurable business value or becomes an expensive demonstration.

Guardrails for LLMs: Preventing Toxic, Off-Topic, and Hallucinated Output

Guardrails are the structural controls that define what an LLM can receive and produce in production. This guide covers the four-layer architecture — input guards, scope classification, output guards, and fact verification — with tooling comparisons (NeMo Guardrails, Guardrails AI, LangChain), prompt injection defence, latency budgeting, and production readiness criteria.

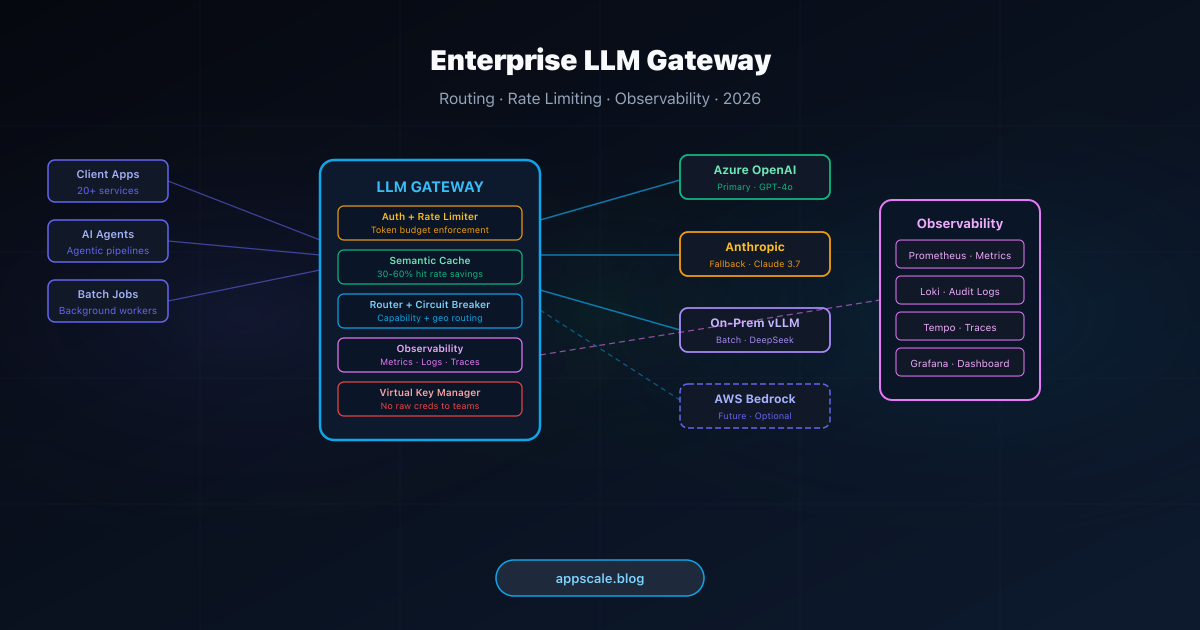

Enterprise LLM Gateway Architecture: Routing, Rate Limiting, and Observability

Every mature AI platform running multiple LLM-powered features converges on a single architectural decision: centralise the interface to language model providers. This guide covers the six core functions of a production LLM gateway — routing, rate limiting, circuit breaking, semantic caching, virtual key management, and observability — with implementation patterns and build-versus-buy analysis.

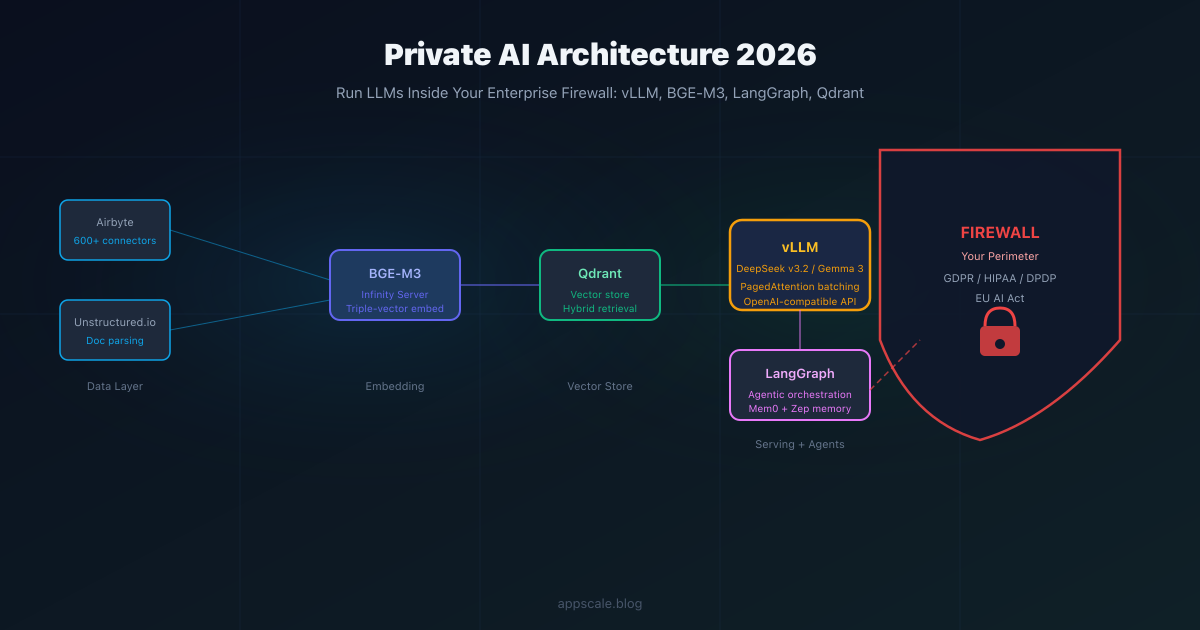

Private AI Architecture: How to Run LLMs Inside Your Enterprise Firewall in 2026

Complete guide to on-premises AI architecture in 2026: open-weight models (DeepSeek v3.2, Gemma 3, Qwen3), vLLM serving, BGE-M3 embeddings, Qdrant, LangGraph, and the Swiss Army Knife vs agent team decision framework.

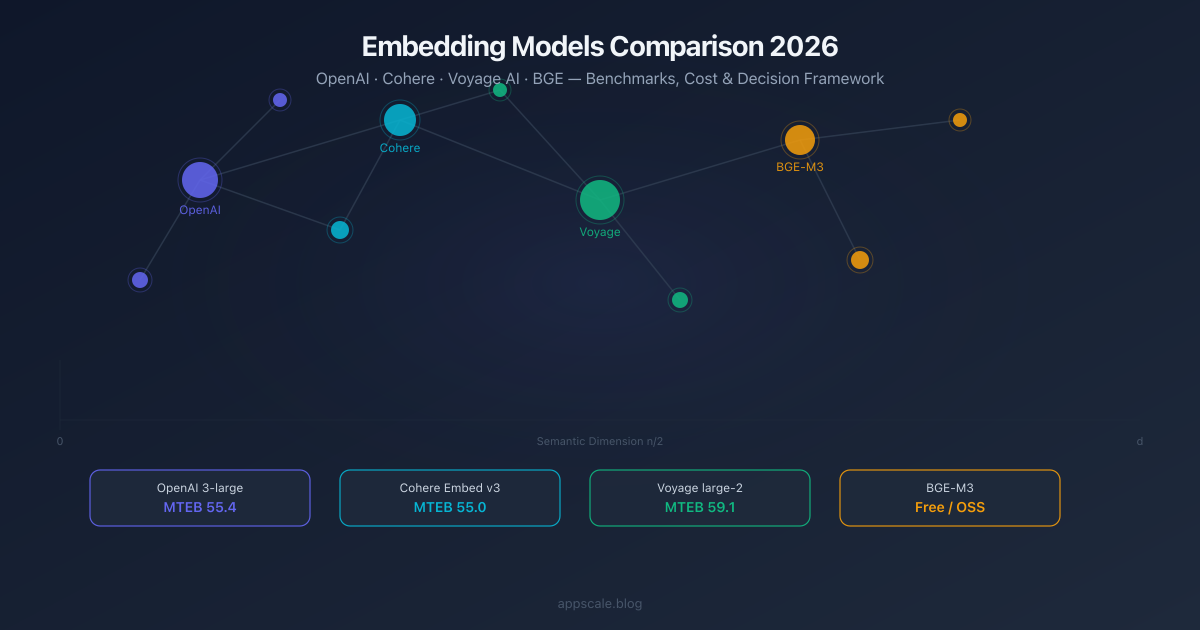

Embedding Models Comparison 2026: OpenAI vs Cohere vs Voyage vs BGE

Head-to-head comparison of the top embedding models in 2026: OpenAI text-embedding-3, Cohere Embed v3, Voyage AI, and BGE. Benchmarks, cost per 1M tokens, context windows, and a decision framework for RAG, code search, multilingual, and self-hosted deployments.

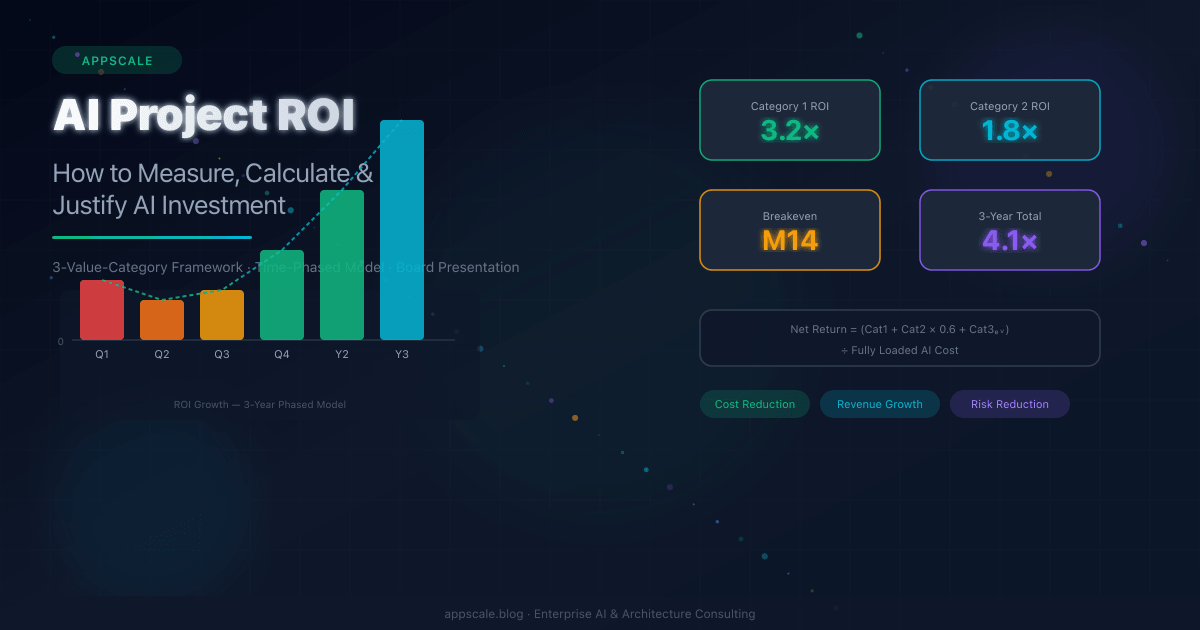

AI Project ROI: How to Measure, Calculate, and Justify AI Investment (2026)

A practical framework for measuring, calculating, and presenting AI project ROI to boards and CFOs. Covers three value categories, time-phased modelling, ROI by AI system type (RAG, agents, fine-tuned models), the three-case board presentation, and the four most common measurement mistakes.

AI Architecture Roadmap 2026: What Every Engineer Must Know

A comprehensive AI architecture roadmap for 2026 covering the five critical layers every enterprise must build: agentic orchestration with a Control Plane, the Small Model Strategy with tiered inference, GraphRAG data architecture with data lineage, AI TRiSM governance enforcement, and the Autonomous SDLC. Core thesis: architecture outlasts every model — build the right structure and model selection becomes a configuration decision, not a re-architecture event.

Kubernetes for AI Workloads: GPU Scheduling, Model Serving & Auto-Scaling

Running AI workloads on Kubernetes requires a fundamentally different mental model from standard microservices. This guide covers every layer: GPU scheduling with the NVIDIA device plugin and MIG partitioning, model serving with vLLM and KServe, GPU-metric-driven auto-scaling with KEDA, spot instance strategies, namespace isolation with priority classes, and DCGM health monitoring. Includes production YAML configurations and a cost optimisation framework that cuts GPU spend by 40-74%.
