Engineering Insights

Deep dives into AI systems, cloud architecture, distributed systems, and engineering leadership.

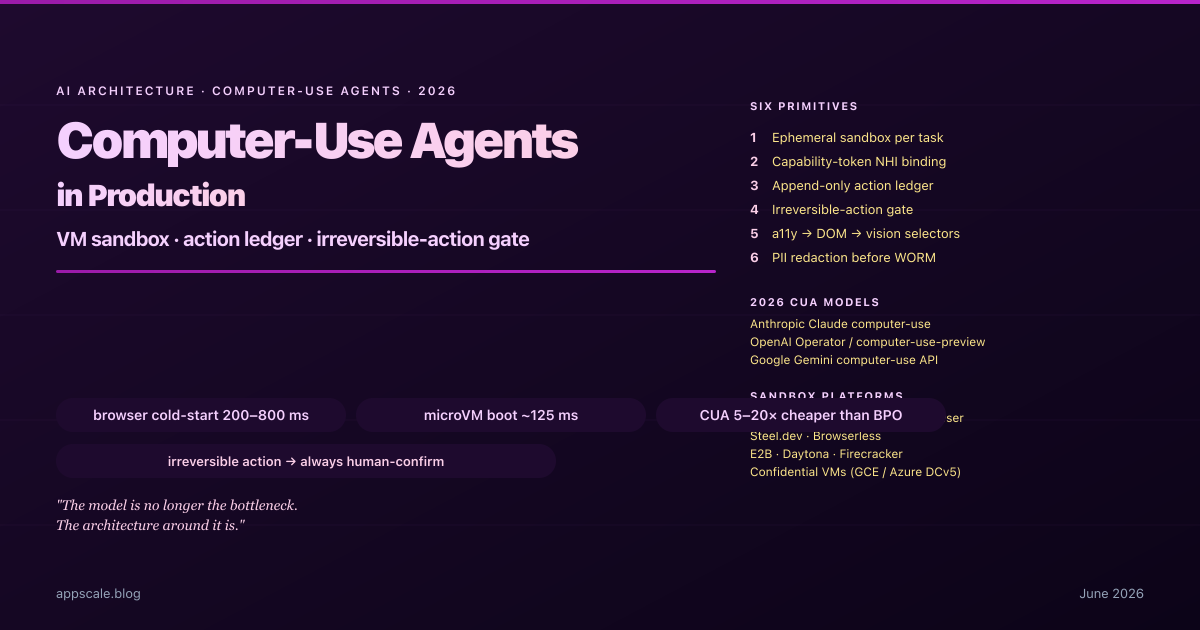

Computer-Use Agents in Production — VM Sandboxing, Action Audit, and Recovery (2026)

Production architecture for computer-use agents in 2026: VM-per-task sandboxing, action ledger, irreversible-action gate, selector resilience, and eval drift.

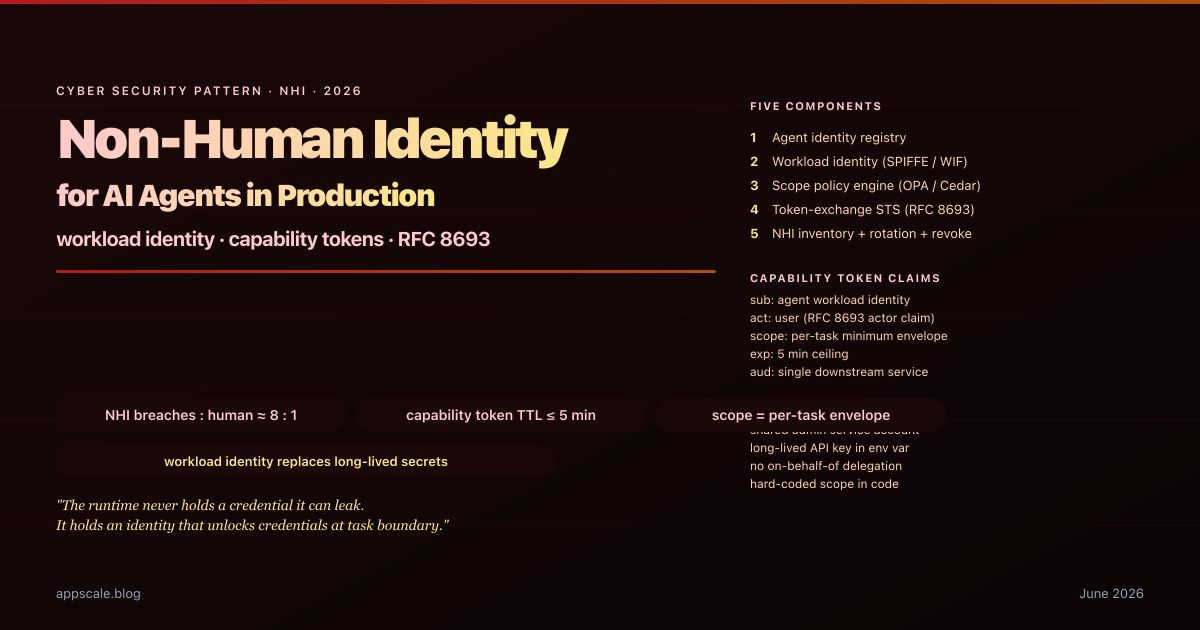

Non-Human Identity for AI Agents — Workload Identity, Capability Tokens, and the End of the Shared Service Account (2026)

Non-human identity for AI agents in 2026: workload identity, RFC 8693 capability tokens, on-behalf-of delegation, scope policy engine, and rotation discipline.

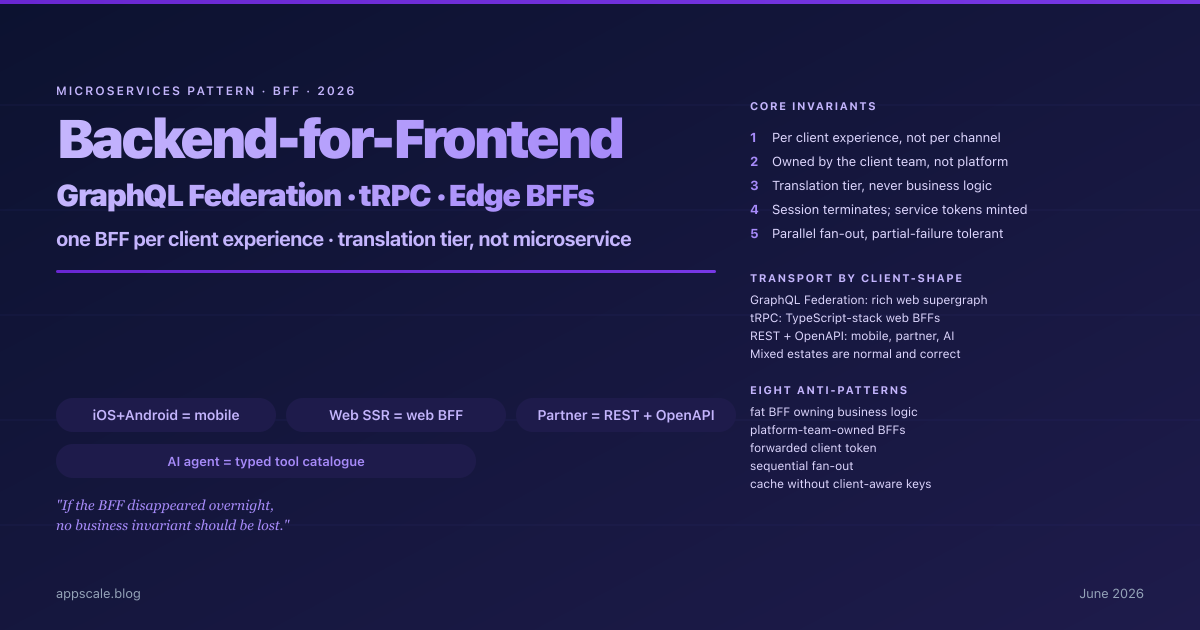

Backend-for-Frontend (BFF) in Production — GraphQL Federation, tRPC, and Edge BFFs Without the Anti-Patterns (2026)

Backend-for-Frontend in 2026: one BFF per client experience, GraphQL Federation vs tRPC vs REST per client-shape, Edge BFFs, and eight production anti-patterns.

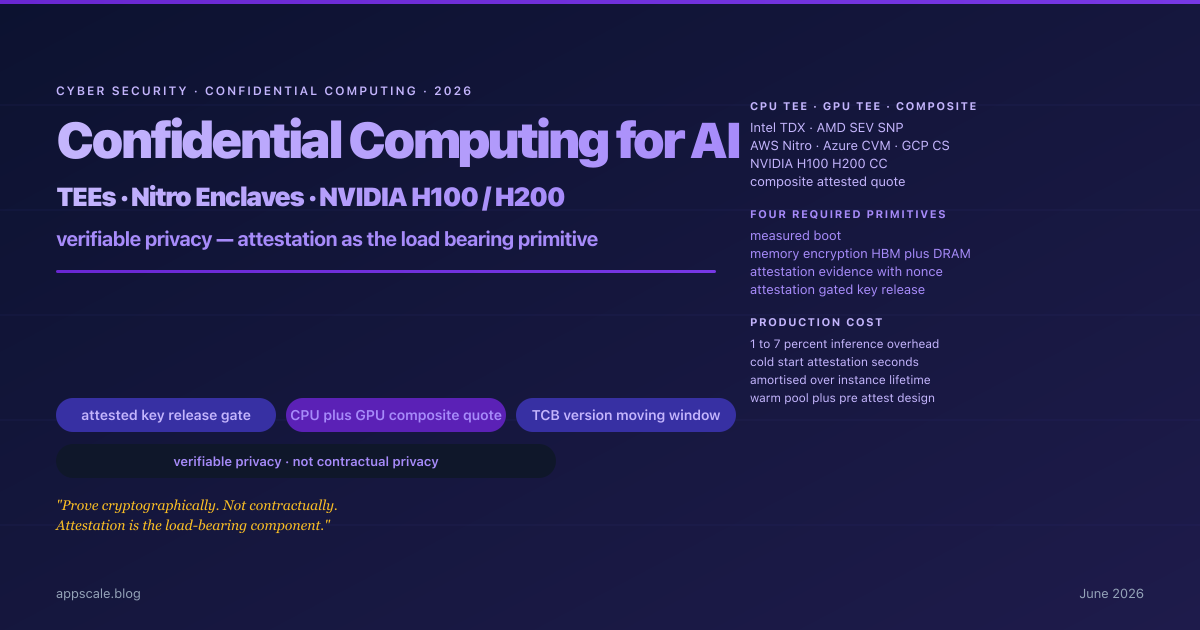

Confidential Computing for AI Inference in 2026 — TEEs, Nitro Enclaves, NVIDIA H100/H200, and the Verifiable-Privacy Architecture

Confidential computing for AI inference in 2026: CPU TEEs, NVIDIA H100/H200 GPU CC, attestation-gated key release, and the verifiable-privacy architecture procurement now demands.

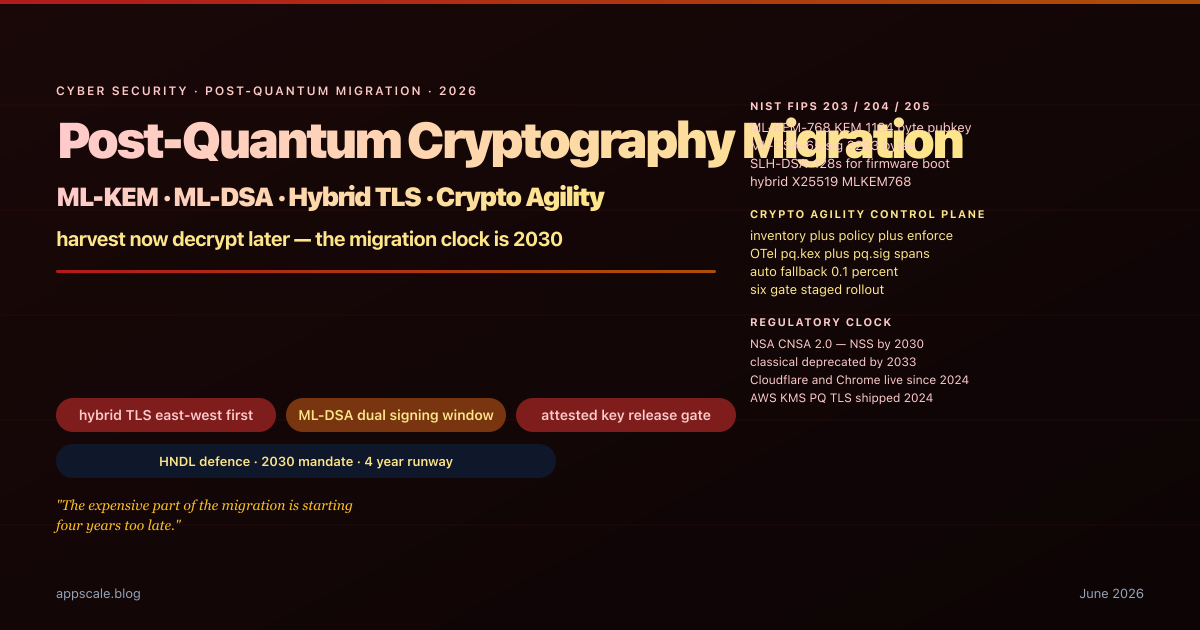

Post-Quantum Cryptography Migration in 2026 — ML-KEM, ML-DSA, and Hybrid TLS for Production Systems

Post-quantum migration in 2026: ML-KEM, ML-DSA, hybrid TLS, the crypto-agility control plane, and the six-gate rollout that survives production at NSA-CNSA-2 timelines.

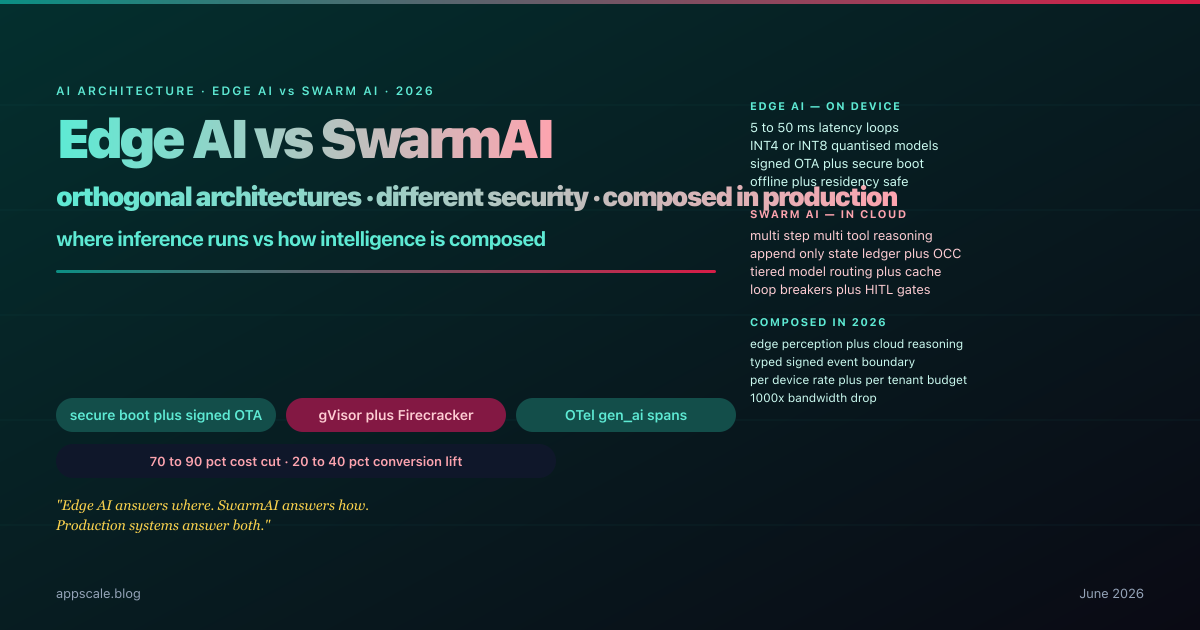

Edge AI vs SwarmAI — Differences, Security, Adoption, and Business Plus Consumer Benefits (2026)

How Edge AI and SwarmAI differ in 2026, the security threat models for each, adoption sequencing, and the business plus consumer benefits when systems compose both.

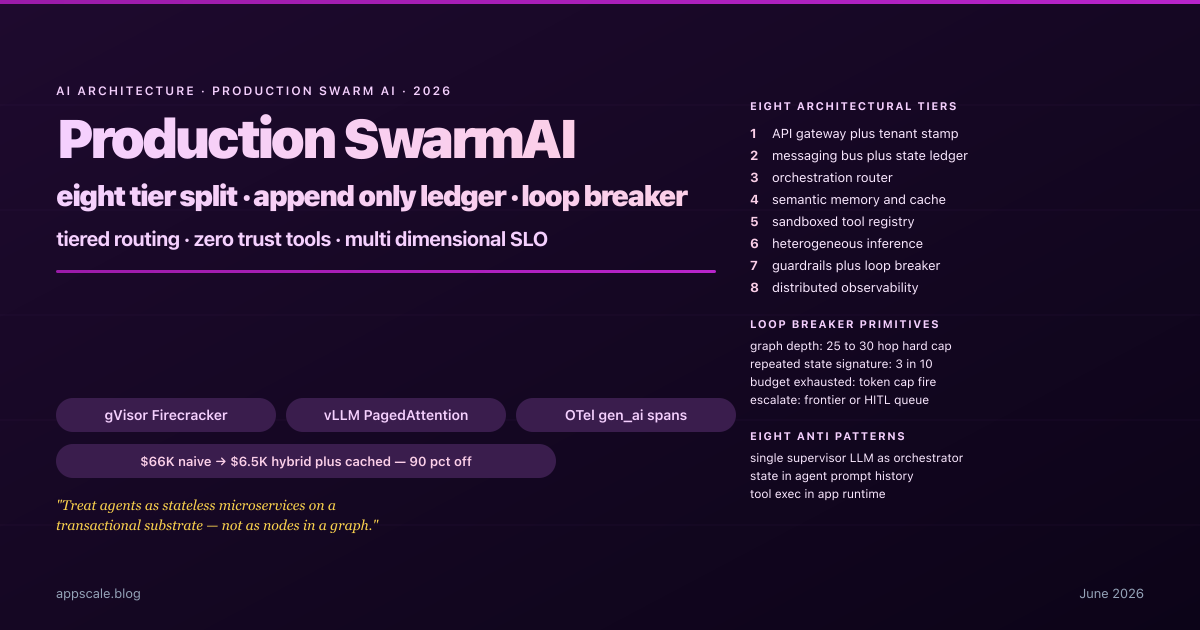

Production SwarmAI Systems — Architecture, State, Guardrails, and Observability for Multi-Agent Platforms (2026)

How production SwarmAI platforms split into eight tiers, bound emergence with loop-breakers, route across model tiers, and cut costs by 90 percent without quality loss in 2026.

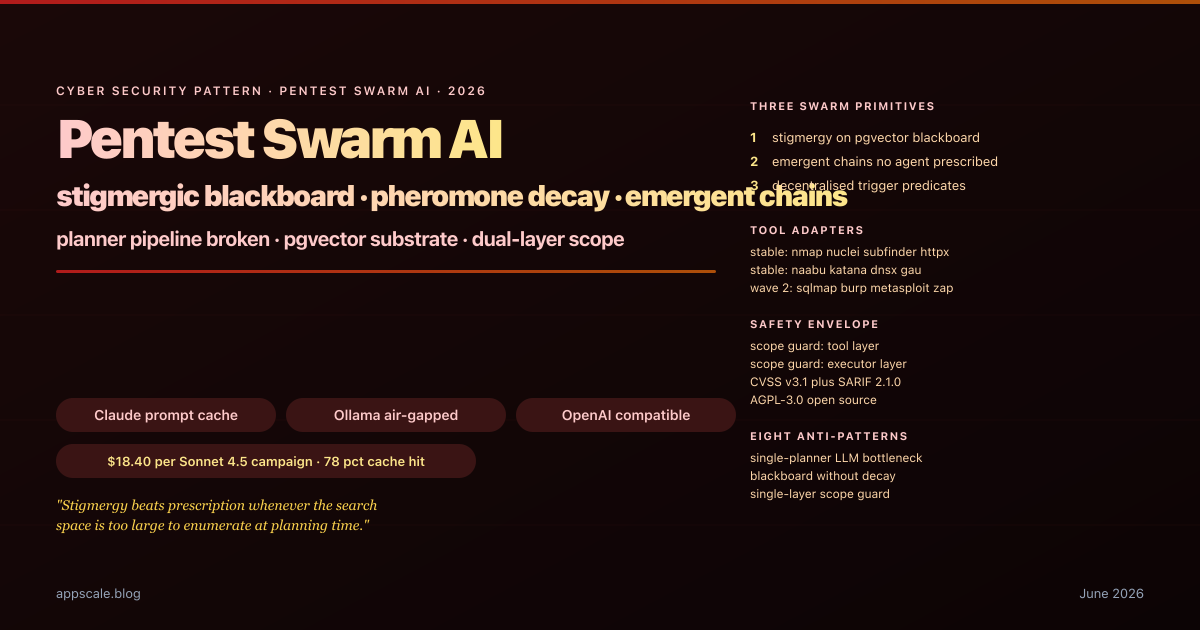

Pentest Swarm AI — Stigmergic Blackboard Architecture for Autonomous Penetration Testing (2026)

How Pentest Swarm AI replaces planner-LLM pipelines with a pgvector blackboard, pheromone-weighted findings, dual-layer scope guards, and trigger-predicate dispatch in 2026.

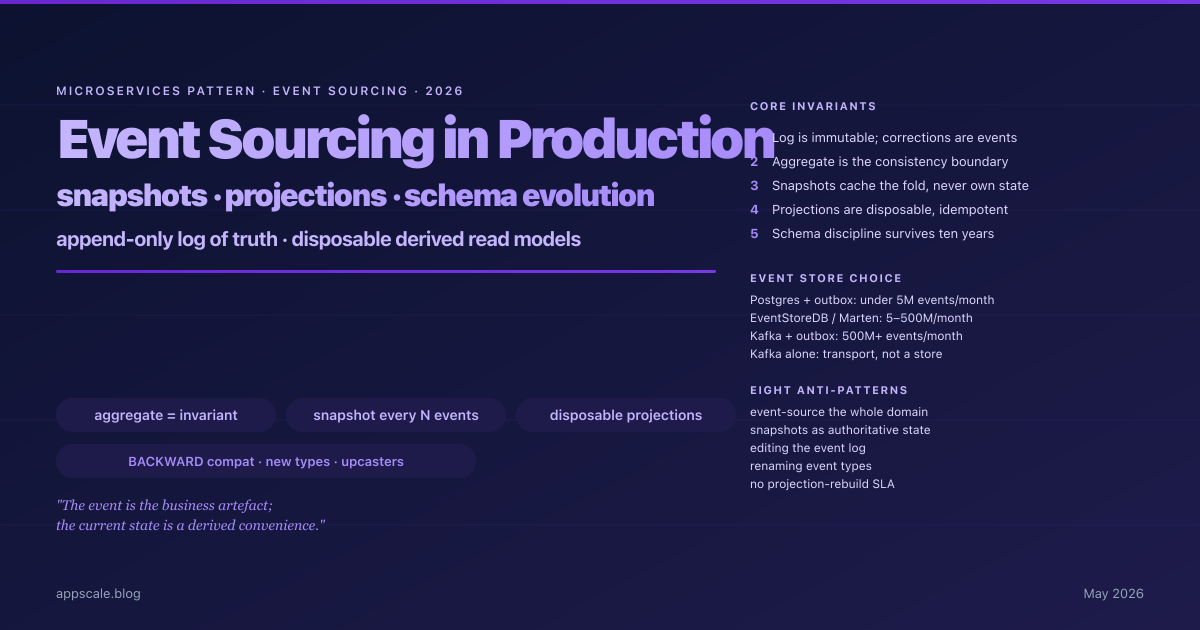

Event Sourcing in Production — Snapshots, Projections, and Schema Evolution Without Tears (2026)

Event sourcing for production microservices in 2026 — aggregate boundaries, append-only stores, snapshot strategy, projection rebuilds, and schema evolution.

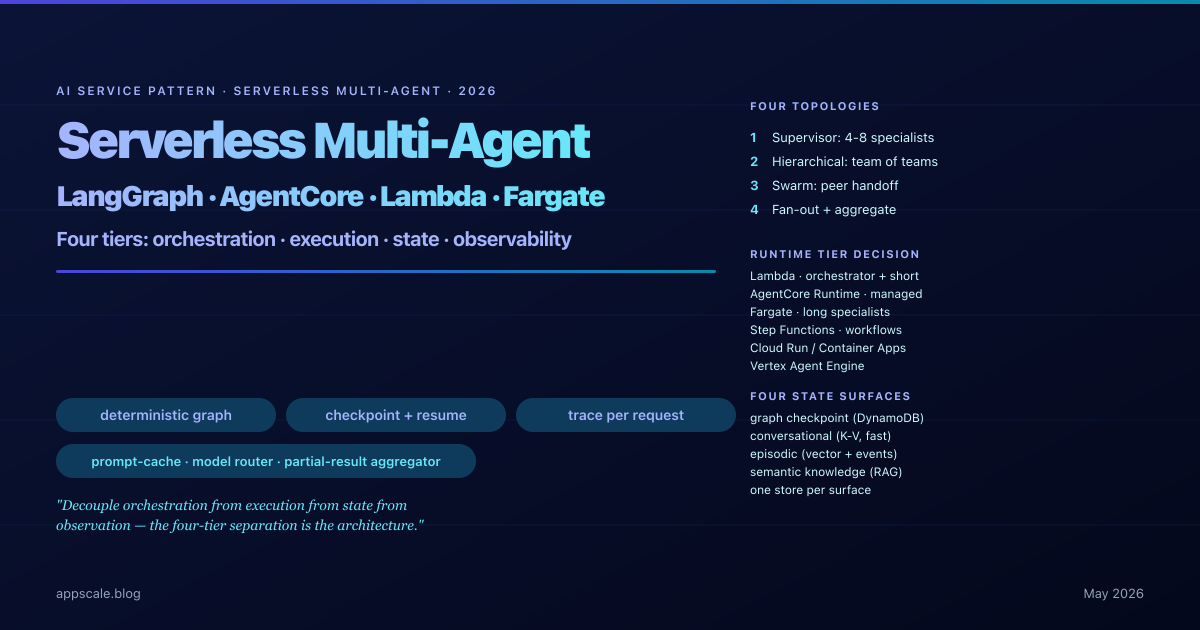

Serverless Multi-Agent Orchestration — LangGraph, Bedrock AgentCore, and the Architecture Pattern Behind Production AI Workflows (2026)

2026 pattern for serverless multi-agent systems: LangGraph orchestration, Bedrock AgentCore, fan-out topologies, four-tier state, per-span observability.

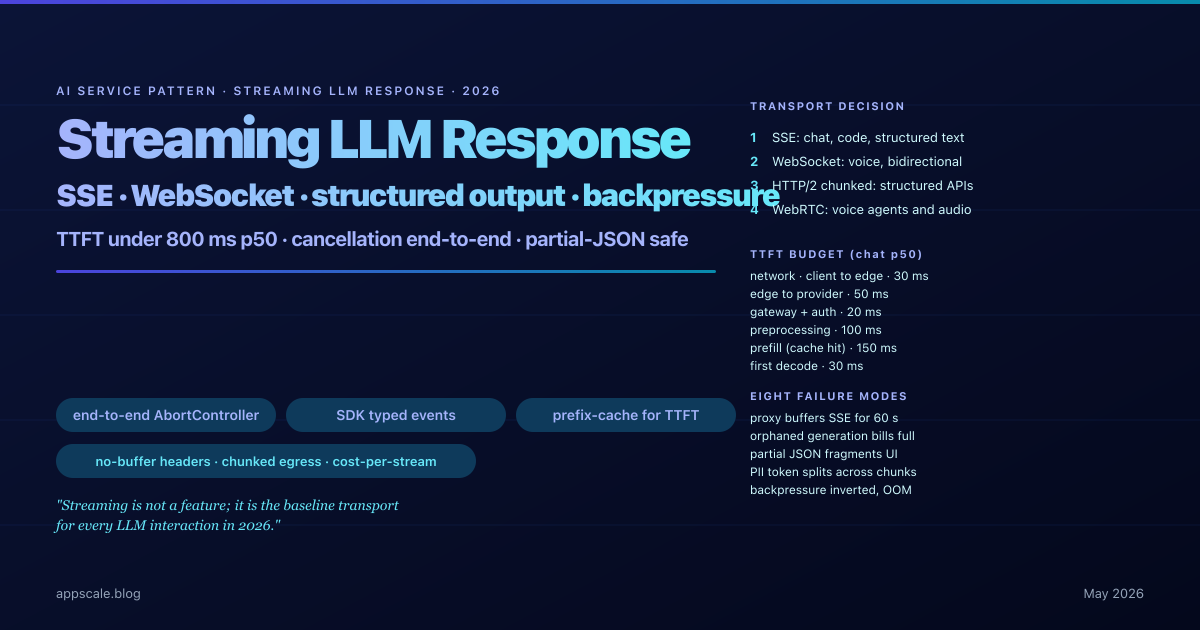

Streaming LLM Response Pattern — SSE, WebSockets, Structured Output, and Backpressure (2026 Architecture)

The 2026 production architecture for LLM streaming: SSE versus WebSockets, structured output, backpressure, end-to-end cancellation, TTFT levers, and proxy buffering.

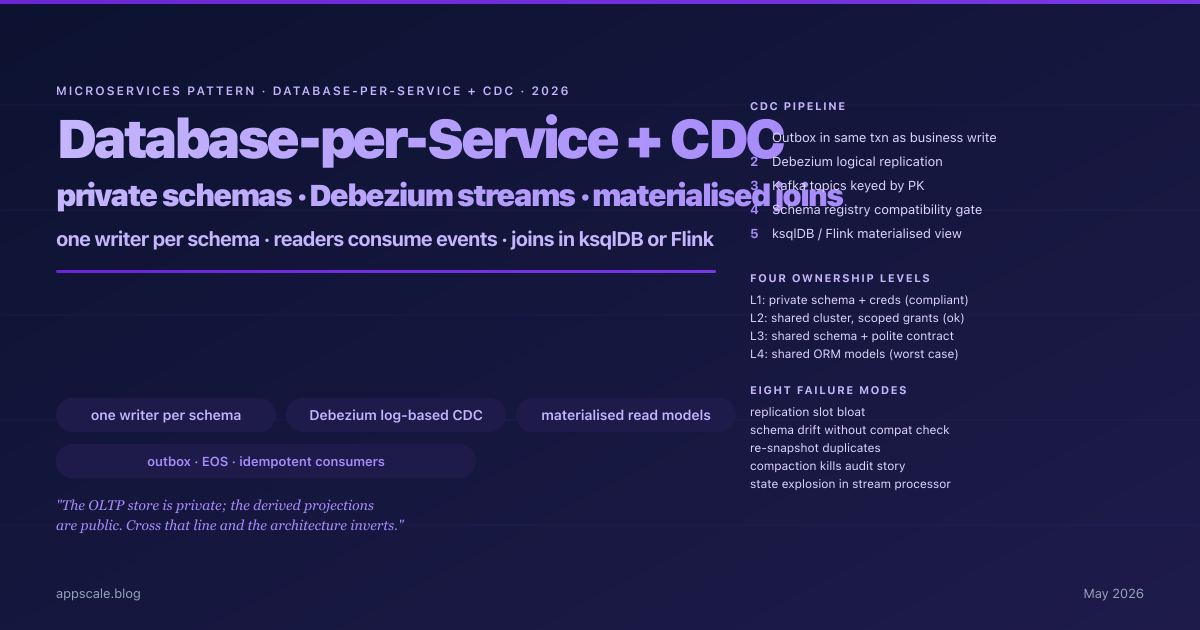

Database-per-Service and Cross-Service Joins with CDC — The 2026 Architecture for Reporting Without Distributed Transactions

The 2026 architecture for database-per-service plus cross-service joins via Debezium CDC, Kafka, and ksqlDB / Flink materialised views — with the operational runbook.

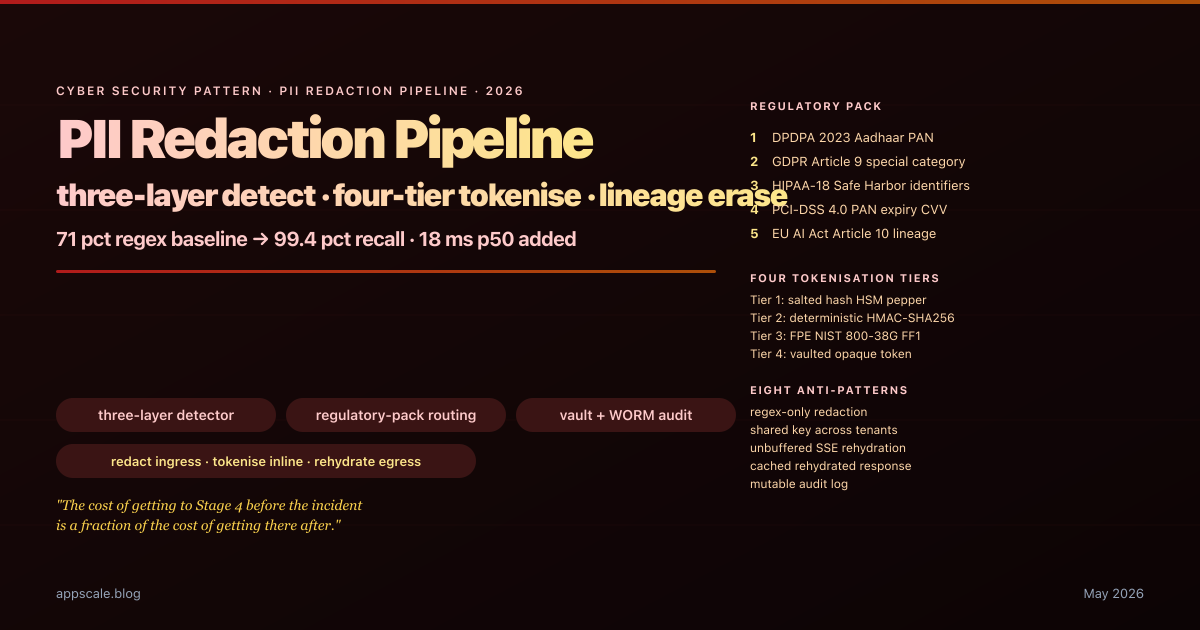

PII Redaction Pipeline Architecture for LLM Workloads — Presidio, NER, and Reversible Tokenisation (2026)

The 2026 architecture for PII redaction in LLM stacks: three-layer detector, regulatory-pack routing, four tokenisation tiers, per-tenant CMK, WORM audit, provenance graph.

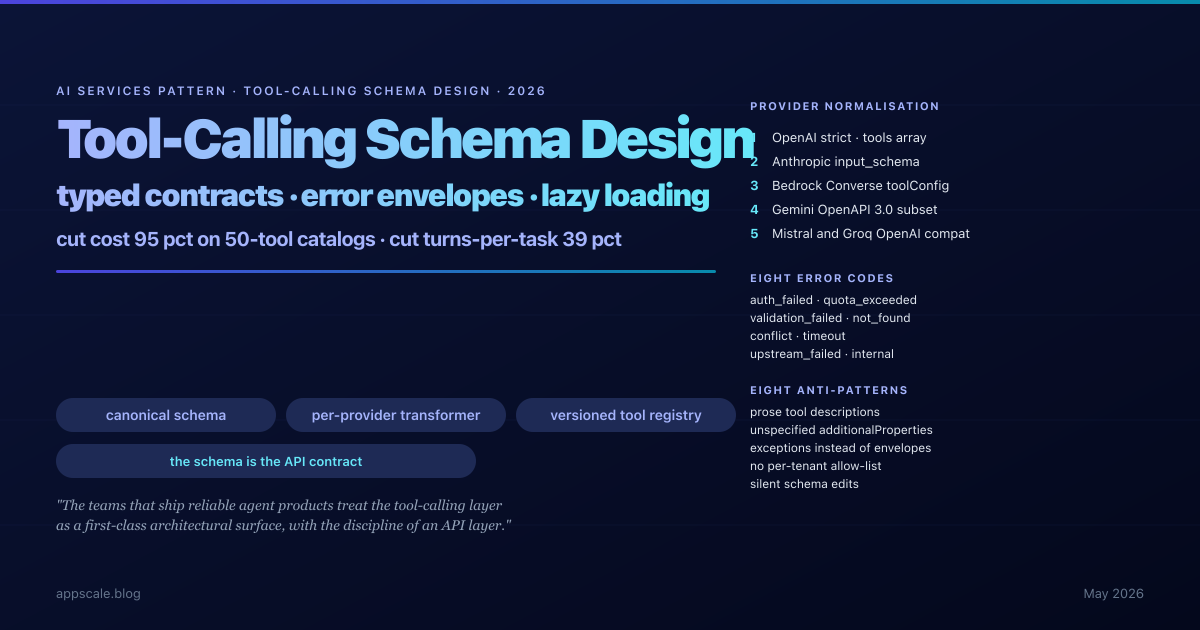

Tool-Calling Schema Design for LLM Agents — The 2026 Production Pattern

Production tool-calling needs typed canonical schemas, eight-code error envelopes, per-tenant allow-lists, and lazy loading. The schema layer pays for itself with a 95% cost cut.

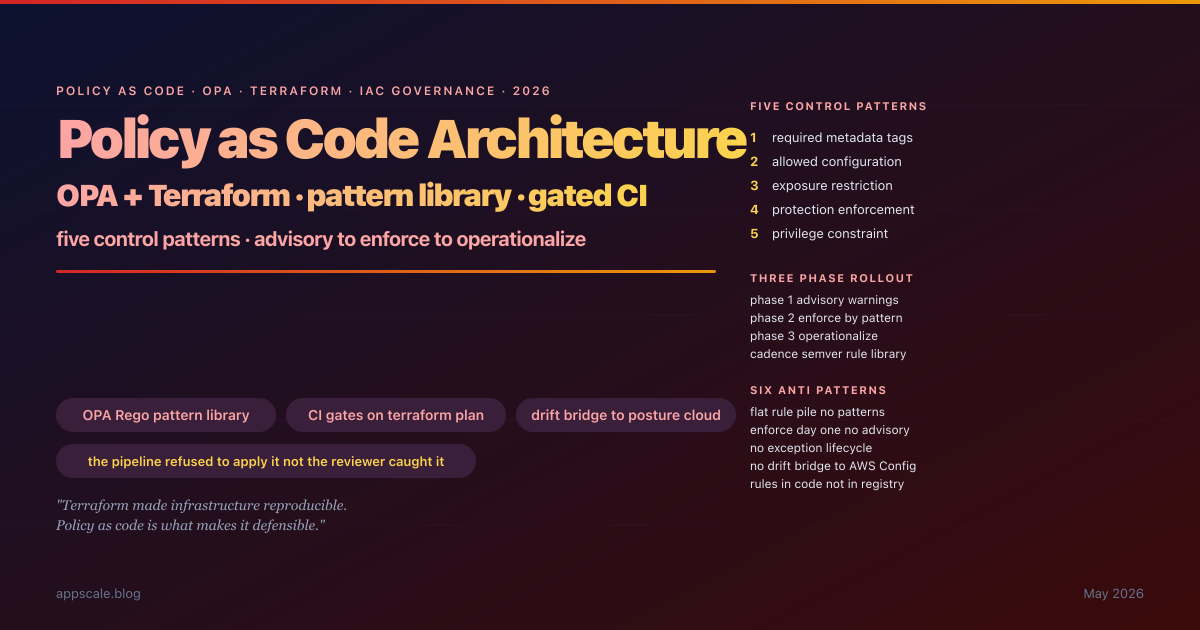

Policy-as-Code Architecture: OPA + Terraform Pattern Library for IaC Governance (2026)

IaC without policy-as-code is a fast lane to misconfigured cloud: every engineer writes Terraform that passes terraform plan, but no one writes the controls that say "S3 buckets must be encrypted with customer-managed KMS, must enforce TLS, must deny public read; security groups must not expose port 3389 to 0.0.0.0/0; IAM roles must not have wildcard trust principals". Policy-as-code, expressed as a versioned pattern library of OPA Rego rules evaluated against every Terraform plan in CI/CD, turns the cloud security model from "reviewer caught it" to "the pipeline refused to apply it". This article is the production architecture: the five orthogonal control patterns (required metadata, allowed configuration, exposure restriction, protection enforcement, privilege constraint) that compose into every IaC governance system, three production-grade Rego examples with the failure modes they catch, the pattern library directory layout with shared helpers and test fixtures, the six-stage gated CI/CD pipeline with structured violation reporting, the three-phase rollout (advisory → enforce → operationalize) that protects developer velocity, the OPA vs Checkov vs tfsec vs Conftest decision matrix, the drift bridge to AWS Config / Azure Policy / GCP Security Command Center, and the exception lifecycle with TTL and audit trail that keeps the process from becoming a permanent loophole.
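To make the "exposure restriction" pattern concrete, here is a minimal sketch of the port 3389 rule. It is written in Python rather than Rego and is not taken from the article. It assumes the plan has been exported with `terraform show -json` and that security groups declare their rules inline under `ingress`; the function name and the sample plan are hypothetical.

```python
from typing import Any


def rdp_exposure_violations(plan: dict[str, Any]) -> list[str]:
    """Return one message per security group that opens port 3389 to the world.

    Illustrative only: it inspects inline `ingress` blocks on
    `aws_security_group` resources and ignores standalone rule resources
    and "all ports" protocol shortcuts.
    """
    violations: list[str] = []
    for rc in plan.get("resource_changes", []):
        if rc.get("type") != "aws_security_group":
            continue
        # `after` is null for resources being destroyed, so fall back to {}.
        after = (rc.get("change") or {}).get("after") or {}
        for rule in after.get("ingress") or []:
            lo, hi = rule.get("from_port"), rule.get("to_port")
            if lo is None or hi is None:
                continue
            open_to_world = "0.0.0.0/0" in (rule.get("cidr_blocks") or [])
            if open_to_world and lo <= 3389 <= hi:
                violations.append(
                    f"{rc.get('address')}: port 3389 exposed to 0.0.0.0/0"
                )
    return violations


# Hypothetical plan fragment shaped like `terraform show -json` output.
sample_plan = {
    "resource_changes": [
        {
            "address": "aws_security_group.bastion",
            "type": "aws_security_group",
            "change": {
                "after": {
                    "ingress": [
                        {"from_port": 3389, "to_port": 3389,
                         "cidr_blocks": ["0.0.0.0/0"]},
                    ]
                }
            },
        },
        {
            "address": "aws_security_group.internal",
            "type": "aws_security_group",
            "change": {
                "after": {
                    "ingress": [
                        {"from_port": 3389, "to_port": 3389,
                         "cidr_blocks": ["10.0.0.0/8"]},
                    ]
                }
            },
        },
    ]
}

violations = rdp_exposure_violations(sample_plan)
```

In a pipeline, a non-empty `violations` list would fail the stage and feed the structured violation report.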

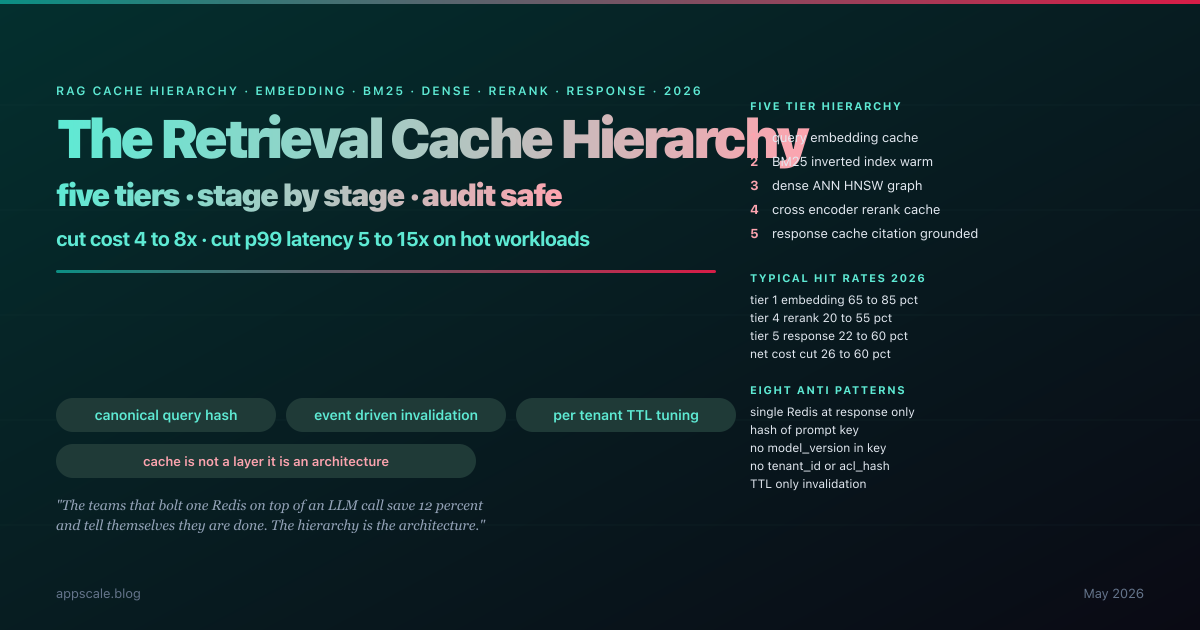

The Retrieval Cache Hierarchy: Embedding, BM25, Dense, Rerank, and Response Caching for Production RAG (2026)

Production RAG is not one cache but a five-tier hierarchy (embedding, BM25 posting-list, dense ANN graph, cross-encoder rerank, and final response), each tier with its own key derivation, hit-rate economics, invalidation contract, and freshness budget. Teams that bolt one Redis layer onto a RAG pipeline keep paying for embedding calls and rerank passes on every request; teams that stratify the cache hierarchy cut cost by 4 to 8x and p99 latency by 5 to 15x on hot workloads while keeping multi-tenant isolation and document-churn invalidation correct. This article is the deep design: why a single response cache fails under reciprocal-rank-fusion non-determinism, prompt-template drift, and multi-tenant key leakage, the five tiers and what each one caches, the canonical-query-hash discipline that propagates through every tier, the cross-encoder rerank cache as the largest cost lever, response-cache keying with tenant_id and acl_hash and retrieved-document revision hashes, event-driven invalidation with a reverse index for efficient per-document purge, multi-tenant isolation patterns with cross-tenant CI tests, cost math at 2026 prices showing the 26 to 60 percent saving on customer-support workloads, eight anti-patterns, observability metrics per tier, and the five-stage maturity ladder.
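The response-cache keying described above can be sketched as follows. This is an illustrative assumption, not the article's implementation: the normalisation steps, the `prompt_template_version` field, the separator, and the `resp:` key prefix are all hypothetical choices.

```python
import hashlib
import unicodedata


def canonical_query_hash(query: str) -> str:
    """Hash a query after normalising Unicode form, case, and whitespace."""
    normalized = unicodedata.normalize("NFKC", query).casefold()
    normalized = " ".join(normalized.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def response_cache_key(
    tenant_id: str,
    acl_hash: str,
    query: str,
    doc_revisions: dict[str, str],
    prompt_template_version: str,
) -> str:
    """Build a response-cache key that changes whenever any input changes.

    doc_revisions maps each retrieved document id to its revision hash.
    The entries are sorted so retrieval order does not affect the key.
    """
    parts = [
        tenant_id,
        acl_hash,
        canonical_query_hash(query),
        prompt_template_version,  # assumption: guards against template drift
    ]
    parts += [f"{doc_id}@{rev}" for doc_id, rev in sorted(doc_revisions.items())]
    # The unit separator keeps adjacent fields from running together.
    digest = hashlib.sha256("\x1f".join(parts).encode("utf-8")).hexdigest()
    return f"resp:{tenant_id}:{digest}"


docs = {"doc-7": "rev-a1", "doc-2": "rev-c9"}
key_a = response_cache_key("tenant-1", "acl-x", "  What is  SSO? ", docs, "v3")
key_b = response_cache_key("tenant-1", "acl-x", "what is sso?", docs, "v3")
key_other_tenant = response_cache_key("tenant-2", "acl-x", "what is sso?", docs, "v3")
key_new_revision = response_cache_key(
    "tenant-1", "acl-x", "what is sso?", {**docs, "doc-7": "rev-a2"}, "v3"
)
```

Here `key_a` and `key_b` match because the two queries normalise to the same string, while a different tenant or a new document revision yields a different key, so a stale or cross-tenant entry is never looked up.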

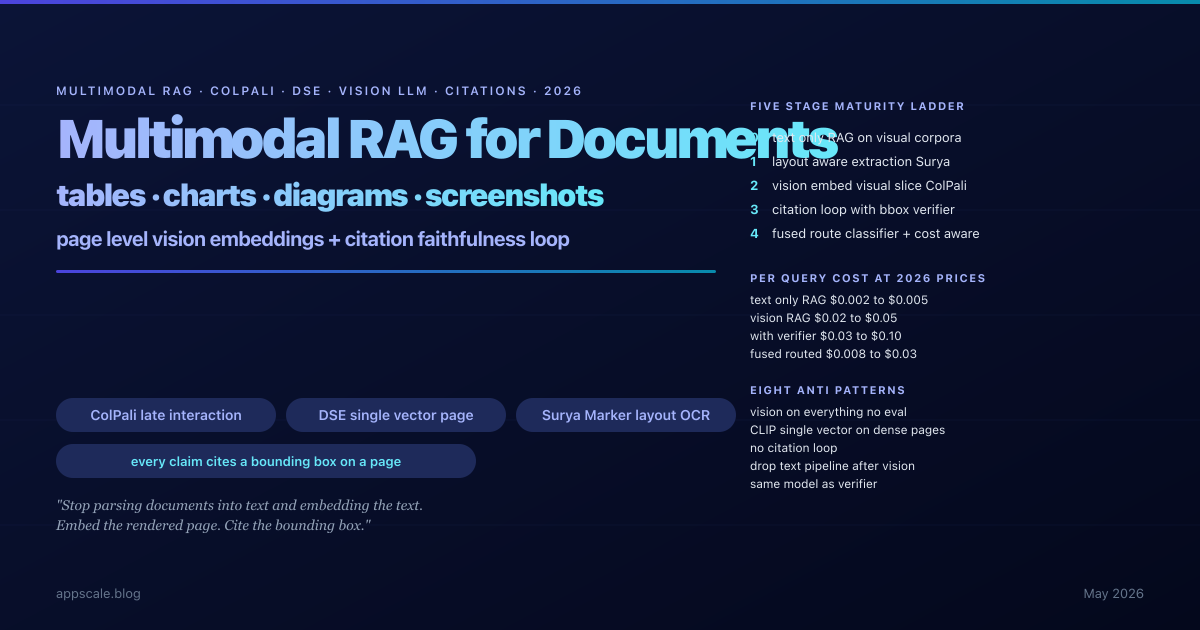

Multimodal RAG for Documents: ColPali, DSE, and Vision-LLM Citation Architecture (2026)

Vector RAG silently fails on PDFs that carry their meaning in tables, charts, schematics, and screenshots, because the parser flattens or erases the very content that holds the answer. ColPali, Document Screenshot Embeddings, and ColQwen2 flipped the parse-first pipeline on its head by embedding the rendered page itself as a sequence of patch vectors with late-interaction MaxSim scoring; the 2026 production multimodal RAG stack composes layout-aware parsing, page-level vision embeddings, hybrid text-plus-visual retrieval routed by a lightweight classifier, and a vision-LLM citation loop that proves every answer back to a bounding box on the source page. This article is the deep-architecture decision guide: when the vision pipeline is justified and when layout-aware extraction is enough, what ColPali and DSE actually do under the hood, the full production pipeline with parse, embed, retrieve, ground stages, the non-negotiable citation faithfulness loop with structured-output generation and per-claim verifier, table and chart understanding patterns, hybrid composition with query classifier and reciprocal rank fusion, cost math at 2026 prices showing the 10x to 25x generation-cost premium and the levers that compose to bring it back down, eight anti-patterns, and the five-stage maturity ladder from text-only RAG to cost-aware composed hybrid.
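The late-interaction MaxSim scoring mentioned above can be illustrated in a few lines. This is a toy sketch in pure Python with made-up two-dimensional vectors; real systems use high-dimensional embeddings and batched tensor operations. For each query token vector, take its best dot-product match among the page's patch vectors, then sum those maxima.

```python
Vector = list[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def maxsim_score(query_vecs: list[Vector], patch_vecs: list[Vector]) -> float:
    """Late-interaction score: sum over query tokens of the best patch match."""
    return sum(max(dot(q, p) for p in patch_vecs) for q in query_vecs)


# Toy data: two query token vectors and two candidate pages.
query = [[1.0, 0.0], [0.0, 1.0]]
page_a = [[1.0, 0.0], [0.0, 1.0]]  # has a good match for both query tokens
page_b = [[1.0, 0.0], [1.0, 0.0]]  # matches only the first query token

score_a = maxsim_score(query, page_a)
score_b = maxsim_score(query, page_b)
ranked = sorted(
    [("page_a", score_a), ("page_b", score_b)],
    key=lambda item: item[1],
    reverse=True,
)
```

Because every query token is matched independently, a page scores well only if it contains some patch relevant to each part of the query.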

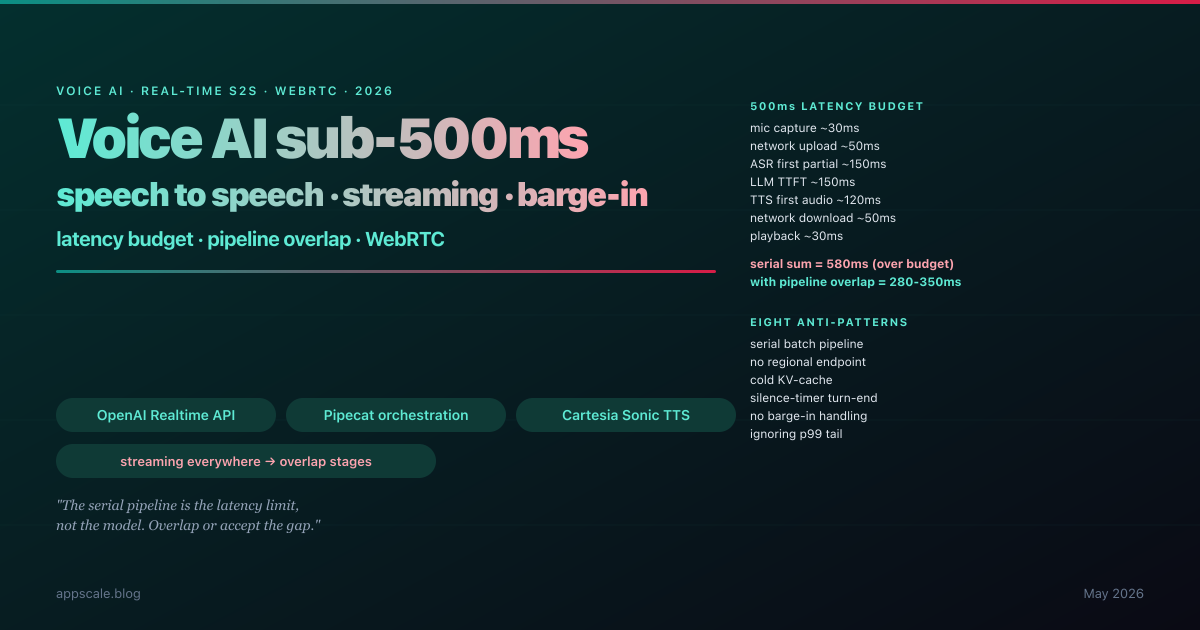

Voice AI Architecture in 2026: How to Hit Sub-500ms Speech-to-Speech Latency Without Faking It

The honest reason most voice AI demos feel awkward in production is latency budget. A human conversational turn-taking gap is roughly 200ms; anything beyond about 800ms feels broken; the comfortable 2026 production target is end-to-end speech-to-speech response under 500ms, measured from the user’s last audio frame to the first audio frame of the agent’s reply. The architectures that hit this in 2026 are not faster models bolted onto the same pipeline; they are streaming end-to-end with first-token TTFT optimisation, barge-in handling, jitter-tolerant transport, and a hard discipline about what runs at the edge versus the cloud. This article is the deep-architecture decision guide: the latency budget broken down stage by stage, why batch ASR plus chat-completion LLM plus offline TTS will never hit the target, OpenAI Realtime API and Gemini Live as integrated speech-to-speech baselines, Pipecat and LiveKit Agents as orchestration layers, barge-in via Silero VAD and turn-end prediction, WebRTC versus WebSocket transport, streaming TTS with ElevenLabs Flash and Cartesia Sonic, eval metrics (WER, latency p50/p99, barge-in latency, turn-taking smoothness), eight anti-patterns, and a five-stage maturity ladder.

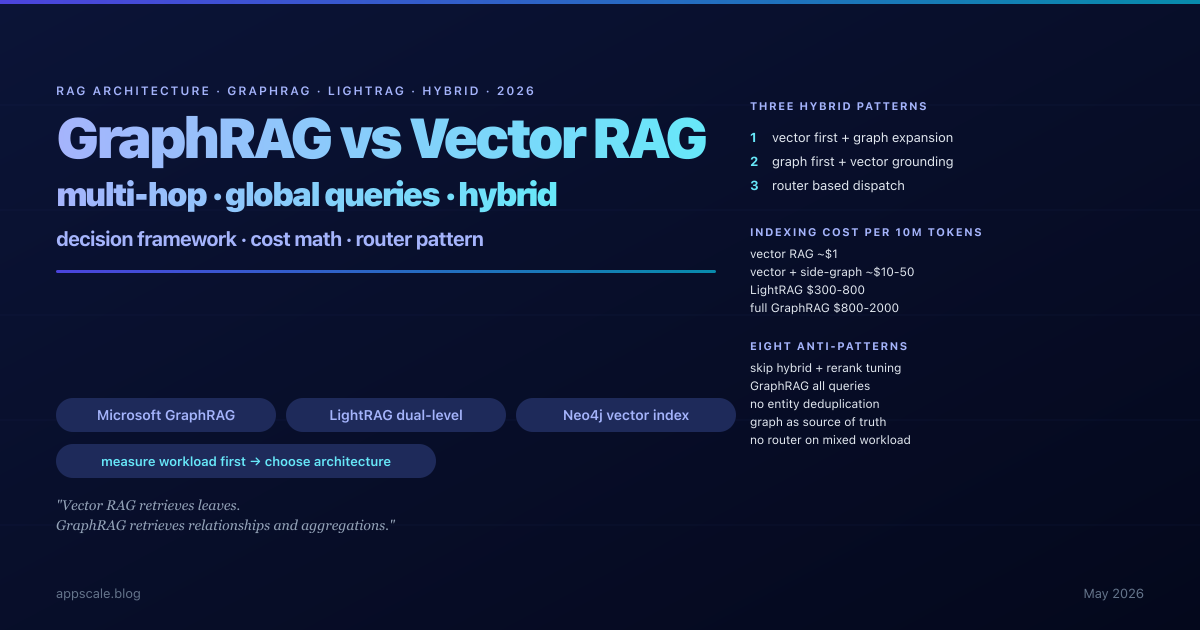

GraphRAG vs Vector RAG vs Hybrid: A 2026 Multi-Hop Retrieval Architecture Guide

The honest reason most production RAG systems plateau is that dense-vector retrieval has no notion of a relationship. The retriever finds top-k cosine-similar chunks and hands them to the model; if the answer requires composing facts across three documents, vector RAG returns only the chunks most similar to the query and silently drops the bridging fact. Microsoft GraphRAG named this in 2024; LightRAG offered a cheaper alternative in 2025; the 2026 conversation is no longer "should we add a graph" but "which slice of the workload deserves the graph and how do we compose vector and graph without doubling the indexing bill". This article is the deep-architecture decision guide: the multi-hop and global-query failure modes that motivate graphs, what pure vector RAG actually does and the workloads it serves well, the five-stage Microsoft GraphRAG pipeline and its honest cost (300x to 1000x vector indexing), LightRAG and the simpler-cheaper variants, Neo4j vector index for unified storage, three production hybrid architectures (vector-first plus graph expansion, graph-first with vector grounding, router-based dispatch), the decision framework by workload, indexing cost math worked through at 2026 prices, drift and freshness operational patterns, eight anti-patterns, and a five-stage maturity ladder.

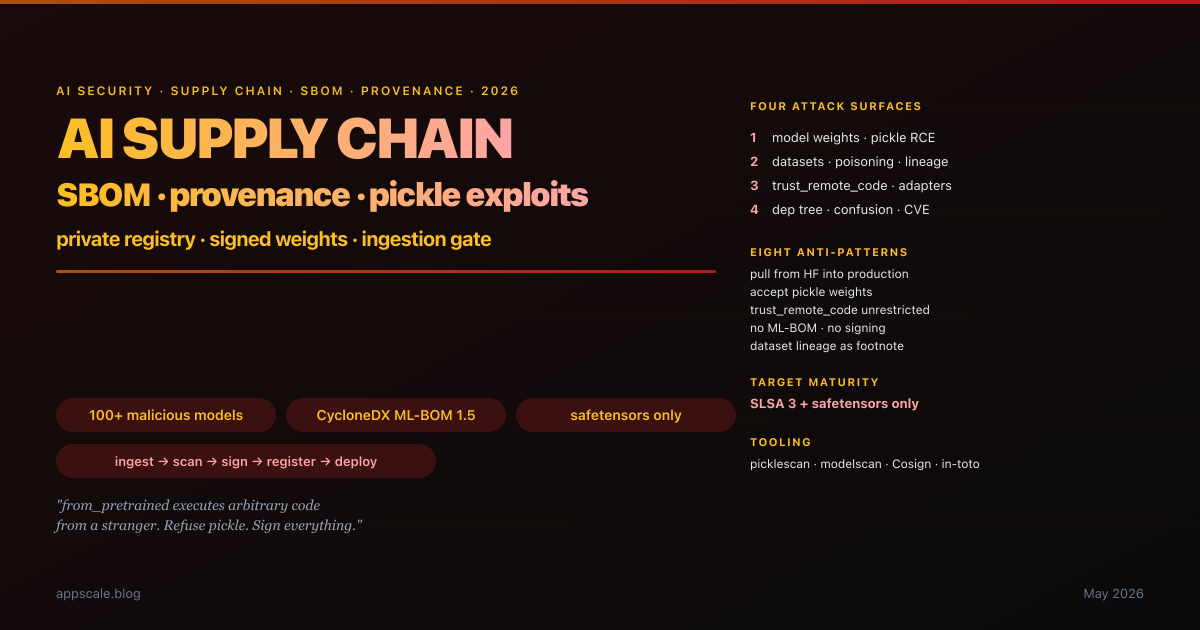

AI Supply Chain Security 2026: SBOM, Model Provenance, and Why That HuggingFace Pickle Is About to Get You Owned

In February 2024 security researchers disclosed that a popular text-classification model on HuggingFace had been quietly modified to execute a reverse-shell payload at import time. Six months later a similar pattern hit a fork of a widely-used embedding model. Twelve months later JFrog disclosed more than a hundred malicious models sitting in plain sight on the public registry. The enterprise response in most cases was a shrug and a reminder to "be careful what you download" — which is the same response the software industry gave to npm and PyPI for a decade before SBOM-and-provenance discipline finally became table stakes. The AI supply chain is the next attack surface. This article is the deep-architecture guide: the four asset categories (weights, datasets, custom-code adapters, dep tree), the pickle problem precisely (why from_pretrained is arbitrary-code execution), the safetensors migration, CycloneDX ML-BOM, Sigstore/Cosign/in-toto for models, the Linux Foundation Model Signing project, the reference ingestion-gate architecture (picklescan + modelscan + CVE scan + provenance verify + private registry + fleet-side signature verification), dependency confusion in ML packages, embedded backdoors and stealth fine-tunes, dataset lineage and the EU AI Act, eight anti-patterns, five-stage maturity ladder, and the Monday-morning checklist.
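The pickle problem is easy to see with the standard library alone. The sketch below is a deliberately harmless demonstration, not an excerpt from the article. Any object can define `__reduce__` to name a callable that the unpickler will invoke at load time. Here the callable is `eval` on a fixed arithmetic string; an attacker would substitute something far less benign. The class name `Payload` is hypothetical.

```python
import pickle


class Payload:
    """Stand-in for a tampered object inside a pickled checkpoint."""

    def __reduce__(self):
        # The unpickler calls eval("6 * 7") while loading. Nothing about
        # the bytes looks like a function call to the code doing the load.
        return (eval, ("6 * 7",))


blob = pickle.dumps(Payload())

# The "victim" only deserialises data, yet code runs and its return
# value replaces the object that appeared to be stored.
result = pickle.loads(blob)
```

After loading, `result` is the value computed by the embedded call rather than a `Payload` instance, which is why weight files that rely on pickle have to be treated as executable code and scanned or replaced with a non-executable format such as safetensors.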

Stay Ahead

Weekly deep dives into AI systems, cloud architecture, distributed systems, and engineering leadership. Join more than 5,000 engineers.