Engineering Insights

Deep dives into AI systems, cloud architecture, distributed systems, and engineering leadership.

AI Incident Response Runbook: RCA for LLM Failures (2026)

LLM systems fail in ways the SRE runbook of the last decade does not anticipate. This article walks the engineering deliverables for an LLM-aware incident response architecture in 2026: severity classification adapted to LLM failure surfaces; detection signal stack (eval drift, guardrail trips, cost spikes, latency p99, hallucination rate, user reports); six containment primitives operable from a single console (model pin, prompt rollback, retrieval quarantine, canary halt, traffic shape, kill-switch); RCA template with LLM failure classes (hallucination, prompt injection, model regression, retrieval poisoning, vendor outage, jailbreak, context-window leak, agentic loop) and LLM-specific action item types; blameless culture extended to model contributions; on-call rota with primary, secondary, incident commander, and subject-matter dimensions. 8 anti-patterns, 5-stage maturity ladder, composition with AI observability, prompt versioning, human escalation, and AI-native CI/CD.

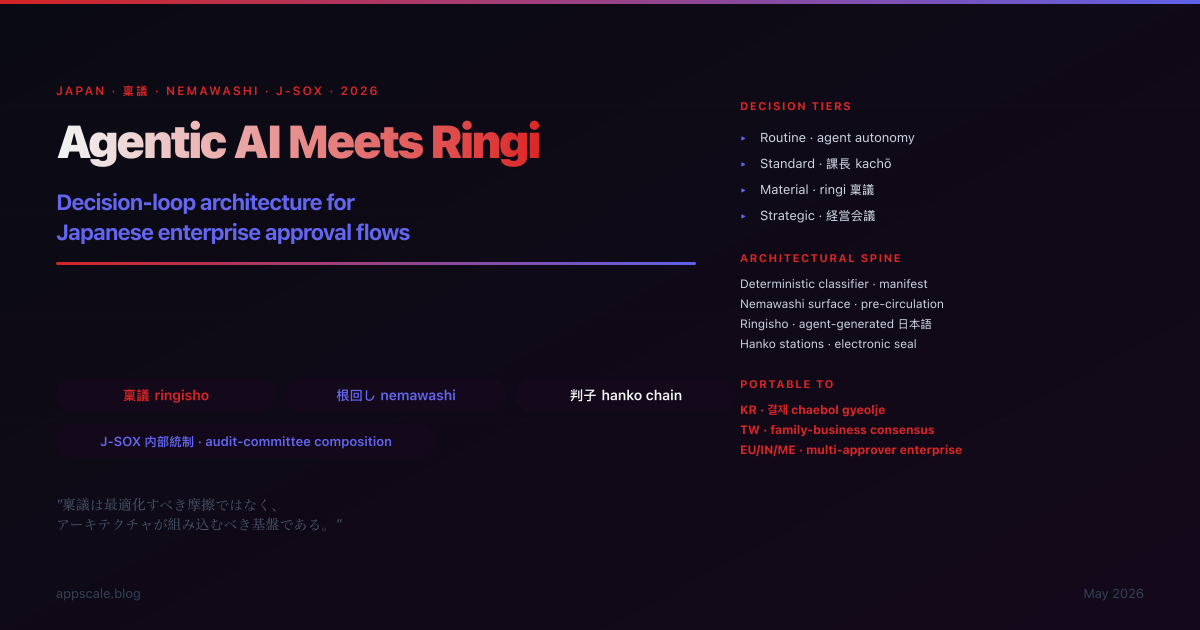

Agentic AI Meets Ringi: Decision Loop Architecture for Japanese Enterprise Approval Flows (2026)

The Japanese ringi seido is not a workflow to optimise around — it is the consensus-decision substrate on which the firm's J-SOX internal-control framework rests, and an agentic AI deployed without ringi-aware architecture fails the audit committee's review on first sample. This article walks the engineering deliverables: deterministic decision classifier reading the internal-control manifest; structured nemawashi surface for pre-circulation; agent-generated Japanese ringisho from internal-control-approved templates; electronic hanko circulation integrated with the firm's approval system; chain-of-approval execution gate; decision-narrative audit trail composed with J-SOX retention. 8 anti-patterns, 5-stage maturity ladder, portable to Korean chaebol gyeolje, Taiwanese family-business consensus, and broader multi-approver enterprise decision cultures.

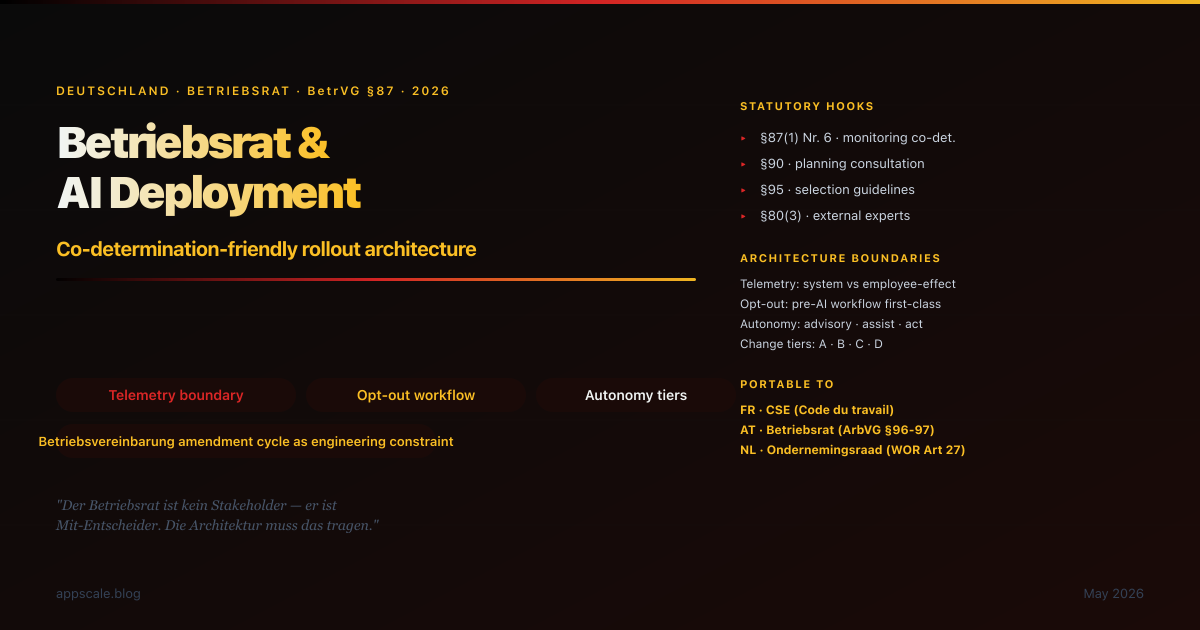

Betriebsrat and AI Deployment: Co-Determination-Friendly Rollout Architecture (2026)

The German Betriebsrat is not a stakeholder you consult; it is a co-decision-maker under BetrVG §87(1) Nr. 6 whose statutory rights determine whether your AI deployment ships. This article walks the engineering deliverables for a Betriebsvereinbarung-friendly architecture — telemetry boundaries that distinguish system observability from employee surveillance at the substrate level, opt-out paths as first-class workflow, autonomy tiers (advisory/assist/act) enforced at code level, change-classification pipeline gating releases against Betriebsvereinbarung-impact tiers, German-language artefact pack. 8 anti-patterns, 5-stage maturity ladder, portable to French CSE, Austrian Betriebsrat, Dutch Ondernemingsraad, and the European Works Council framework.

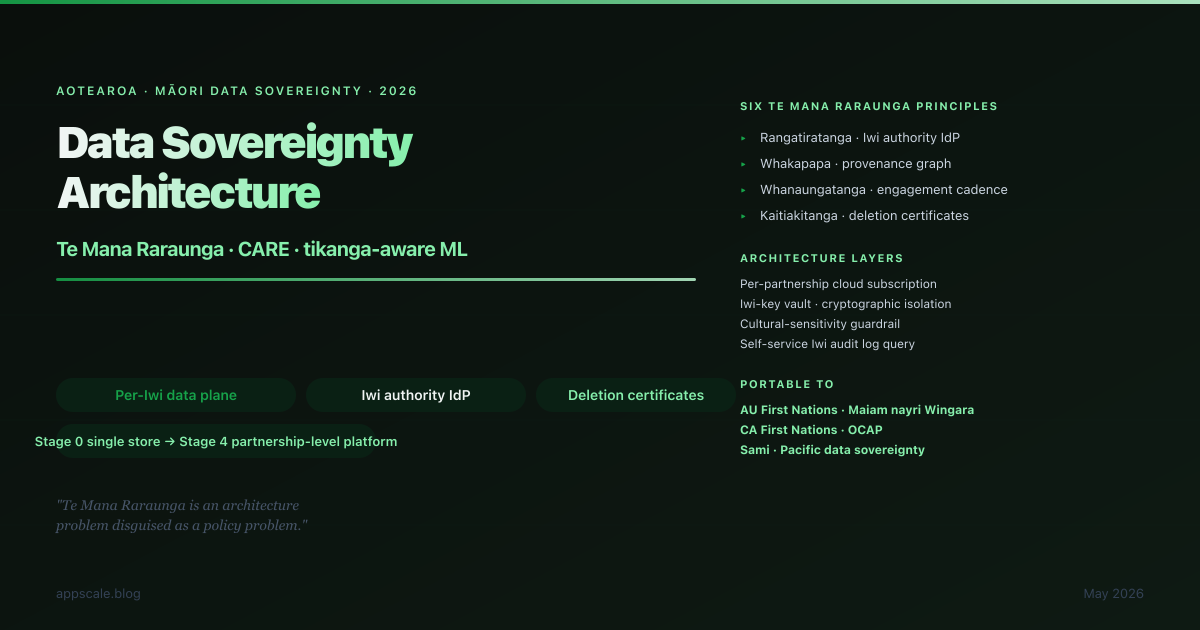

Data Sovereignty Architecture: Respecting Māori Data Principles in Tikanga-Aware ML Systems (2026)

Māori data sovereignty in 2026 is an architecture problem disguised as a policy problem. This article walks the engineering deliverables that operationalise Te Mana Raraunga and the CARE principles — per-partnership cloud subscriptions, Iwi authority IdP integration, deletion machinery that reaches the embedding store and fine-tune layer, partnership-trained cultural sensitivity classifiers, audit-log self-service for the Iwi data board, and engagement cadence wired into CI release gates. 8 anti-patterns, 5-stage maturity ladder, portable to Australian First Nations, Canadian First Nations OCAP, Sami, and Pacific data sovereignty contexts.

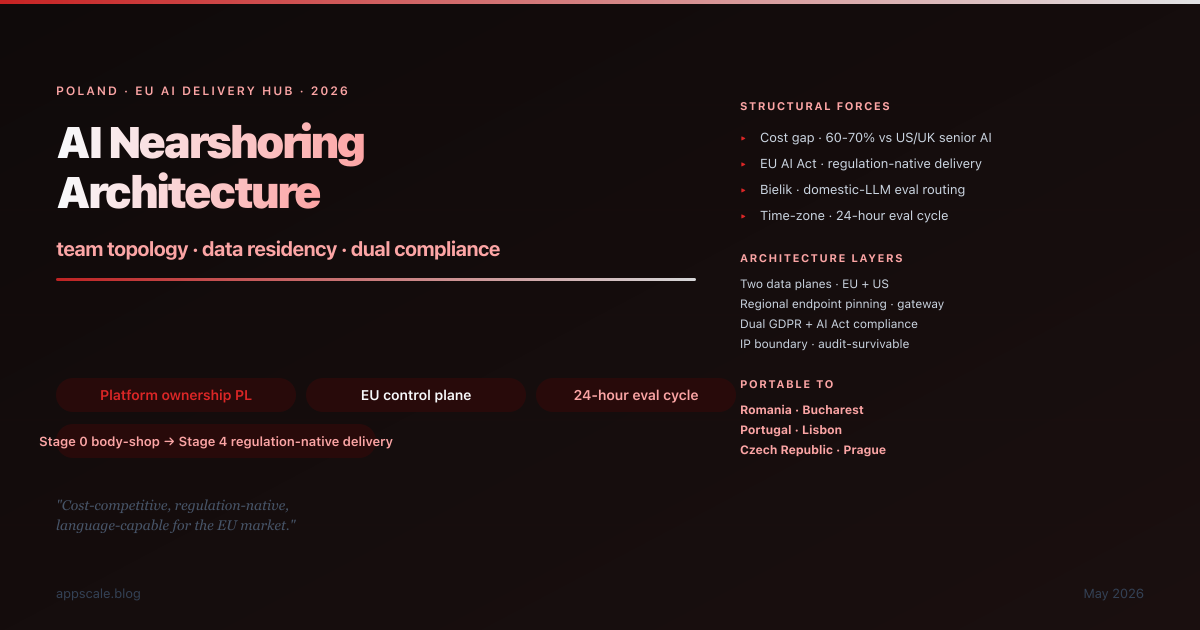

AI Nearshoring Architecture: Poland as the EU AI Delivery Hub — Team Topology and Data Residency (2026)

Poland in 2026 is the structurally interesting answer to the where-do-we-build-AI question: the cost gap, the EU AI Act compliance moat, and the local-LLM stack have converged. This is the architecture that makes the answer credible: the team topology that puts the platform and EU-customer surface in Poland with full ownership, the two-data-plane partitioning that makes EU-residency enforceable, the dual GDPR + AI Act compliance posture that pre-empts buyer due-diligence, the IP boundary that survives the US-client security audit, and the 24-hour eval cycle that turns the time-zone gap into a velocity multiplier. 8 anti-patterns, 5-stage maturity ladder, portable to Romania, Portugal, and the Czech Republic.

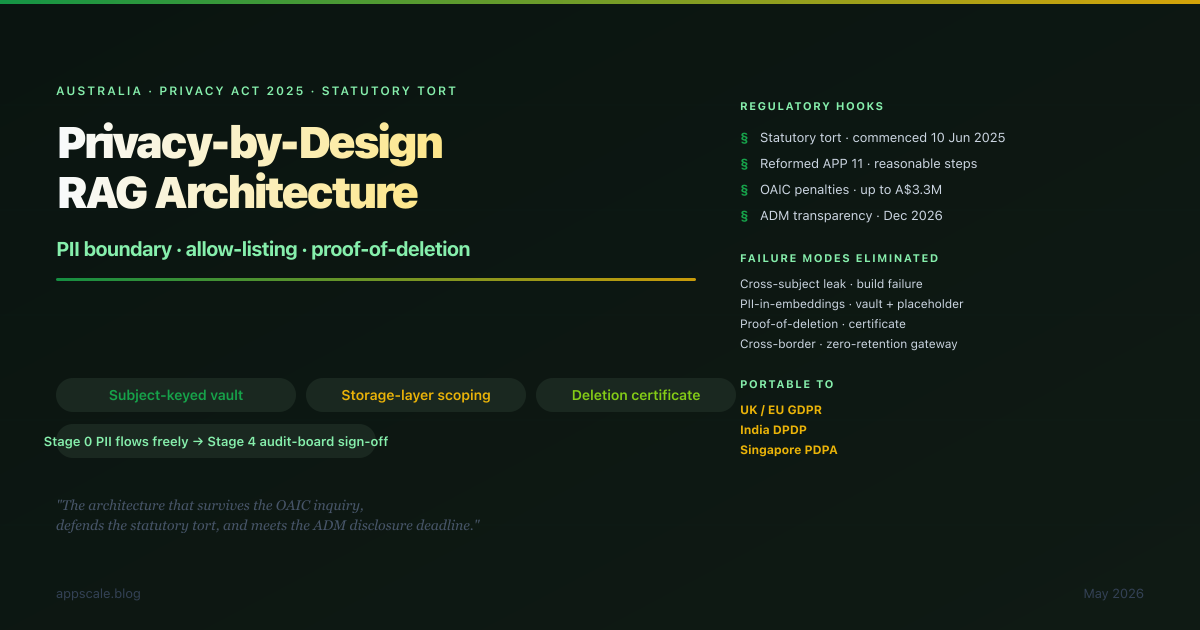

Privacy-by-Design RAG Architecture for the Australian Privacy Act 2025 Reforms and the Statutory Tort (2026)

The 2024-2025 Privacy Act reforms changed RAG architecture in Australia from a "we should think about privacy" posture to a "design for the tort claim and the OAIC inquiry" posture. This is the architecture that survives both — the PII boundary drawn before the embedding store, subject-keyed vault, placeholders in embeddings and prompts, storage-layer scoping with empty defaults, per-query provenance into an admissible audit log, deletion as transactional fan-out with a certificate, zero-retention enforced at the model gateway, and an automated-decision register that drives the privacy policy. Statutory tort hook, 8 anti-patterns, 5-stage maturity ladder, portable to UK/EU GDPR, India DPDP, and Singapore PDPA.

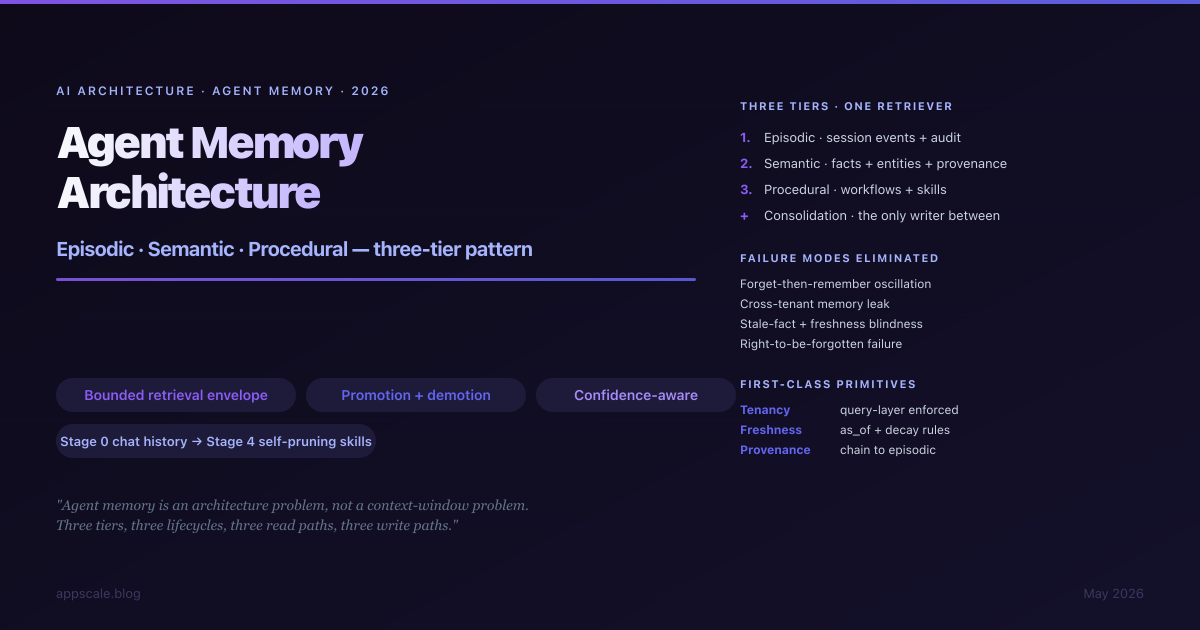

Agent Memory Architecture: Episodic, Semantic, Procedural — the Three-Tier Pattern (2026)

The agent demos that survive contact with production all treat memory as an architecture, not as a context window. This is the three-tier pattern (episodic, semantic, procedural) that separates the lifecycles, read paths, write paths, and failure modes the naive single-store approach conflates. The article covers each tier's read and write paths, the consolidation pipeline that earns the architecture, where each tier physically lives, eight anti-patterns to retire, the 5-stage maturity ladder, and a portable design that lands cleanly on LangGraph, AutoGen, the OpenAI Agents SDK, the Anthropic Claude Agent SDK, and the Microsoft Agent Framework.
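As a rough illustration of how the three tiers and a consolidation step might fit together, here is a minimal in-memory sketch. The class name `AgentMemory`, the field layout, and the "seen in at least two sessions" promotion rule are all assumptions made for this example; the article itself may define the tiers and the pipeline differently.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    """Hypothetical three-tier store; names and the promotion rule are illustrative."""
    # Episodic tier: append-only (session_id, fact) events.
    episodic: list[tuple[str, str]] = field(default_factory=list)
    # Semantic tier: fact -> number of distinct sessions supporting it.
    semantic: dict[str, int] = field(default_factory=dict)
    # Procedural tier: task name -> ordered steps (not exercised below).
    procedural: dict[str, list[str]] = field(default_factory=dict)

    def record(self, session_id: str, fact: str) -> None:
        """Episodic write path: append, never update in place."""
        self.episodic.append((session_id, fact))

    def consolidate(self, min_sessions: int = 2) -> list[str]:
        """Promote facts observed in at least `min_sessions` distinct sessions."""
        # set() collapses repeats within one session, so each session counts once.
        support = Counter(fact for _, fact in set(self.episodic))
        promoted = sorted(f for f, n in support.items() if n >= min_sessions)
        for fact in promoted:
            self.semantic[fact] = support[fact]
        return promoted


memory = AgentMemory()
memory.record("s1", "user prefers metric units")
memory.record("s1", "user prefers metric units")  # repeat in the same session
memory.record("s2", "user prefers metric units")
memory.record("s2", "user asked about Krakow")
promoted = memory.consolidate()
```

Only the fact seen in both sessions is promoted to the semantic tier; the one-off question stays episodic. A production design would put each tier in its own store with its own retention policy.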

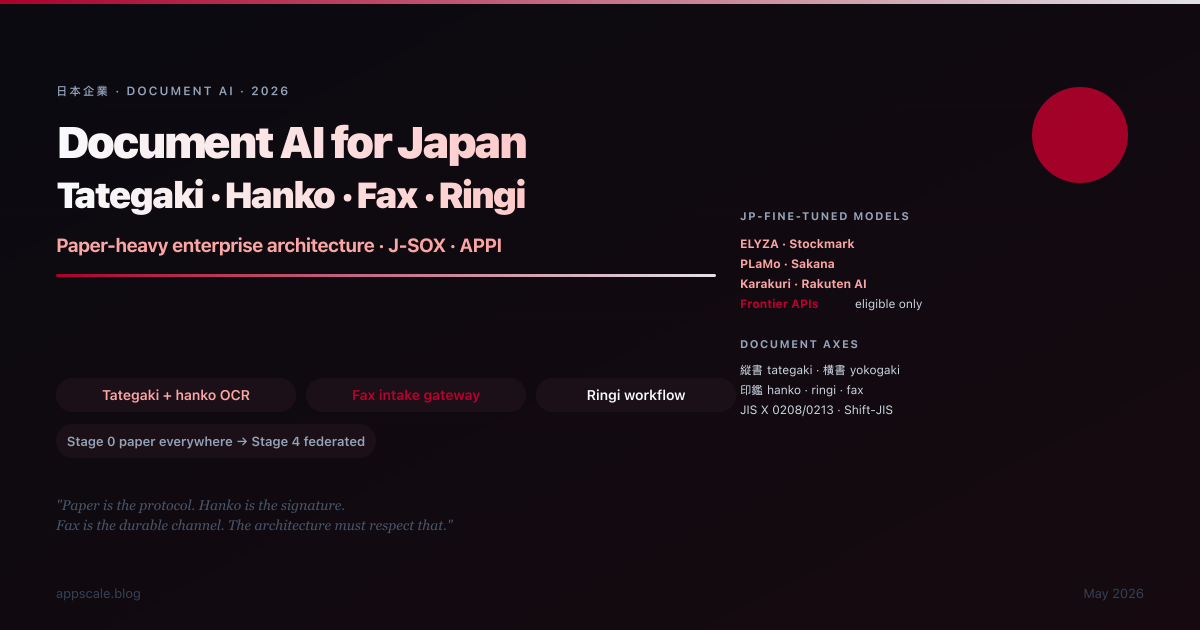

Document AI for Japanese Paper-Heavy Enterprises: Tategaki, Hanko, Fax Pipelines, and the Ringi Document Workflow (2026)

Japanese paper-heavy enterprises in 2026 still run on fax, hanko stamps, tategaki documents, and ringi-sho approval workflows — and the document AI architecture that survives this reality looks nothing like the US-EU document AI playbook. This article walks the architecture: tategaki + yokogaki OCR with layout analysis, hanko/inkan detection and seal-registry validation, IP-fax intake gateway with sender authentication, ringi workflow modelling with AI drafting and retrieval, JIS X 0208/0213/Shift-JIS/EUC-JP encoding edges, JP-fine-tuned model selection (ELYZA, Stockmark, PLaMo, Sakana, Karakuri), J-SOX and APPI composition, 8 anti-patterns, and the 5-stage maturity ladder. Portable to KR, TW, ID, TH, IN paper-heavy markets.

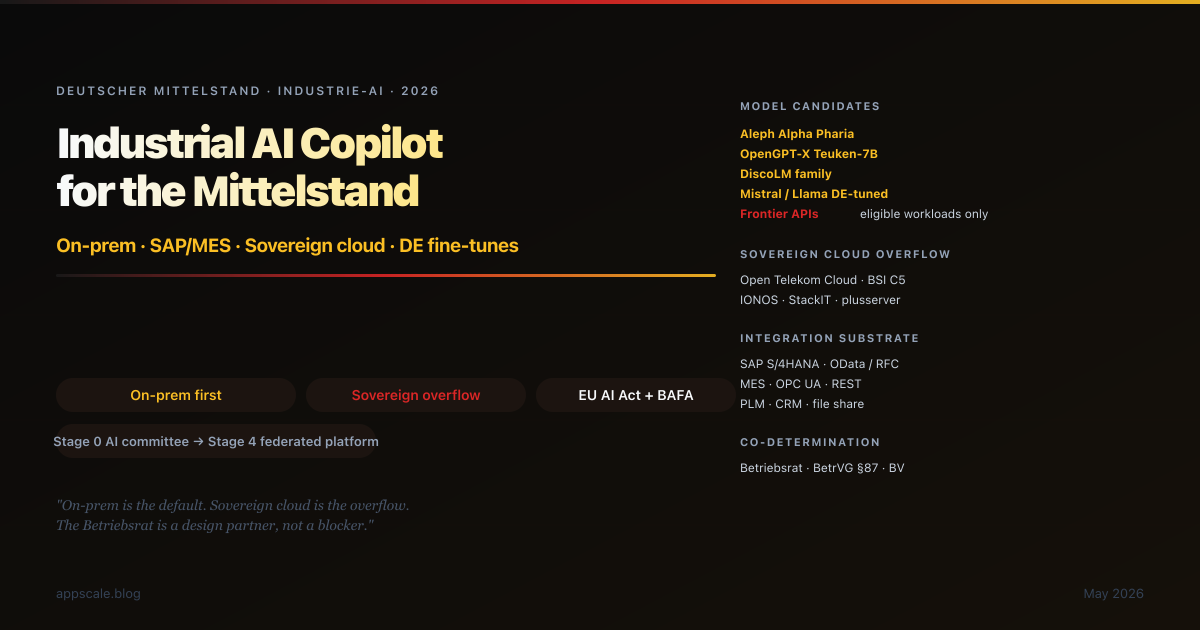

Industrial AI Copilot Architecture for the German Mittelstand: On-Prem, SAP/MES Integration, Sovereign Cloud, German-Language Fine-Tunes (2026)

The German Mittelstand is the most architecturally constrained AI deployment context in Europe — on-prem-first, SAP/MES/PLM substrate, Betriebsrat co-determination, BAFA export control, EU AI Act compliance, and German-language fine-tunes (Aleph Alpha Pharia, OpenGPT-X Teuken-7B, DiscoLM) as real engineering options against the frontier. This article walks the architecture that survives those constraints: on-prem inference with sovereign-cloud (Open Telekom Cloud, IONOS, StackIT, plusserver) overflow, eval-driven model routing, SAP/MES/PLM integration patterns, Betriebsrat-friendly design under BetrVG §87, EU AI Act and BAFA composition, 8 anti-patterns, and the 5-stage maturity ladder. Portable to AT, CH, IT, CZ, PL, KR, JP industrial firms.

The Algorithm Charter for Aotearoa as an AI Governance Blueprint: Public-Sector Architecture for the LLM Era (2026)

New Zealand's Algorithm Charter is short, principle-based, and signatory-voluntary — and it operationalises into a production-grade AI governance architecture that is portable across the Westminster-tradition public sector (CA, AU, IE, UK). This article maps each of the six commitments (Transparency, Partnership / Te Tiriti, People, Data, Privacy / Ethics / Rights, Human Oversight) to deliverable architectural artefacts (algorithm register, model card, DPIA, iwi engagement record, TMR plan, affected-people impact narrative, audit log, oversight thresholds, explainability report). Includes the artefact register pattern, 8 anti-patterns, and the 5-stage maturity ladder.

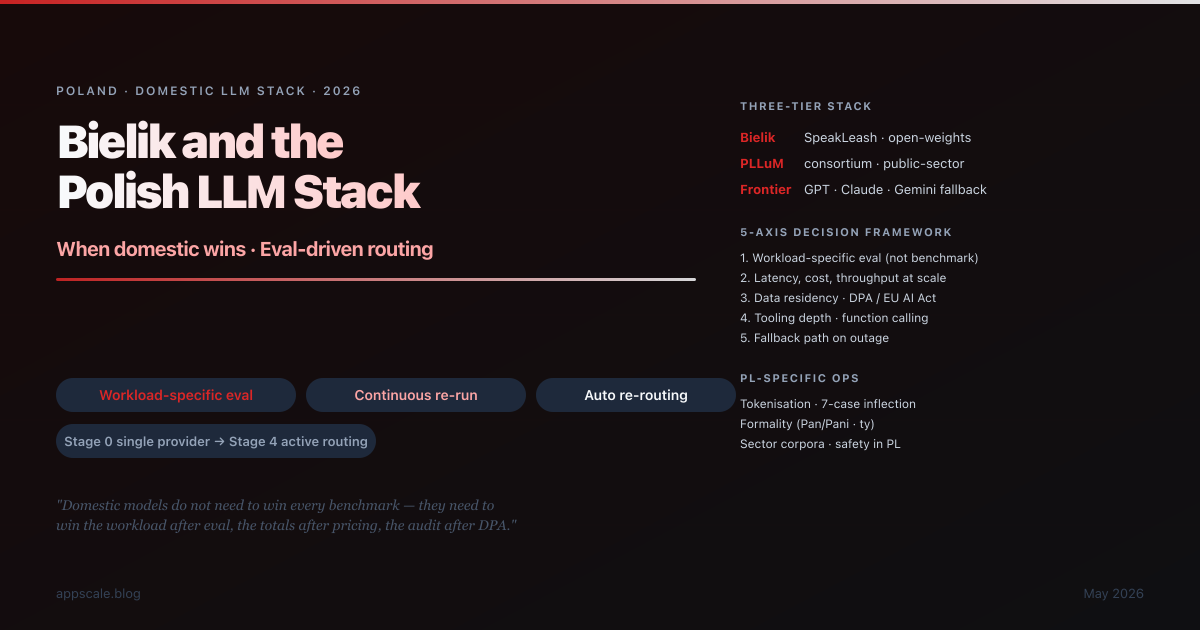

Bielik and the Polish LLM Stack: When Domestic Models Win, Eval-Driven Routing, and the Small-Language LLM Decision (2026)

Polish enterprises in 2026 face a choice they are largely unprepared for: pick a frontier model and live with the cost and residency consequences, or build a stack around domestic models (Bielik, PLLuM) and live with the eval discipline that requires. This article maps the three-tier Polish stack (Bielik · PLLuM · Frontier), the 5-axis decision framework, the 5-step workload-specific eval methodology, the eval-driven routing architecture, PL-specific operational considerations (tokenisation, 7-case inflection, formality register, sector corpora, safety evals, data residency under DPA/GDPR/EU AI Act), 8 anti-patterns, and the 5-stage maturity ladder. Portable to NL, CZ, HU, KR, TH, VN ecosystems.

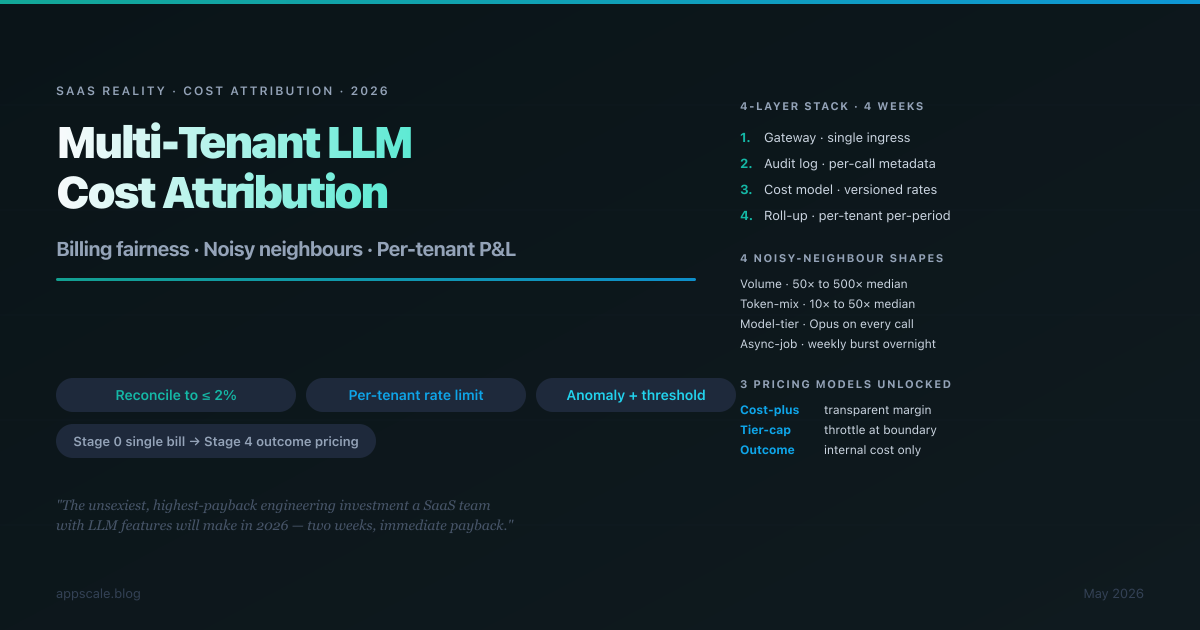

Multi-Tenant LLM Cost Attribution Architecture: Billing Fairness, Noisy Neighbours, and the Per-Tenant P&L (2026)

Multi-tenant SaaS with LLM features hits a problem the rest of SaaS solved a decade ago: when one tenant's usage costs 50× another tenant's and the bill arrives as a single line item from OpenAI or Anthropic, unit economics quietly become fiction. This is the four-layer architecture that fixes it — gateway, audit log, versioned cost model, roll-up — implementation order week-by-week, the four shapes of noisy neighbour, three pricing models the architecture unlocks, 8 anti-patterns to retire, and the 5-stage maturity ladder. Globally portable across OpenAI, Anthropic, Bedrock, Vertex, and self-hosted vLLM.
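To make the four layers concrete, the sketch below treats each gateway call as an audit-log row, prices it against a versioned cost table, and rolls the result up per tenant. The `UsageRecord` schema, the `PRICE_TABLE` keys, and the prices themselves are hypothetical placeholders, not real vendor rates or the article's actual design.

```python
from collections import defaultdict
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class UsageRecord:
    """One audit-log row written by the gateway (hypothetical schema)."""
    tenant_id: str
    model: str
    input_tokens: int
    output_tokens: int
    price_version: str  # pins the row to the cost model in force at call time


# Versioned cost model: (version, model) -> USD per 1M (input, output) tokens.
# Placeholder numbers for illustration only.
PRICE_TABLE = {
    ("2026-01", "model-a"): (Decimal("2.00"), Decimal("8.00")),
    ("2026-02", "model-a"): (Decimal("1.00"), Decimal("4.00")),
}


def record_cost(record: UsageRecord) -> Decimal:
    """Price one call using the cost-model version stamped on the record."""
    in_price, out_price = PRICE_TABLE[(record.price_version, record.model)]
    tokens_cost = record.input_tokens * in_price + record.output_tokens * out_price
    return tokens_cost / Decimal(1_000_000)


def roll_up(records: list[UsageRecord]) -> dict[str, Decimal]:
    """Aggregate per-call cost into a per-tenant total."""
    totals: dict[str, Decimal] = defaultdict(Decimal)
    for record in records:
        totals[record.tenant_id] += record_cost(record)
    return dict(totals)


audit_log = [
    UsageRecord("acme", "model-a", 1_000_000, 500_000, "2026-01"),
    UsageRecord("acme", "model-a", 1_000_000, 0, "2026-02"),
    UsageRecord("globex", "model-a", 100_000, 100_000, "2026-02"),
]
totals = roll_up(audit_log)
```

Stamping the price version on each row means a later price change does not silently rewrite historical per-tenant costs. `Decimal` is used instead of floats so that billing totals do not accumulate rounding error.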

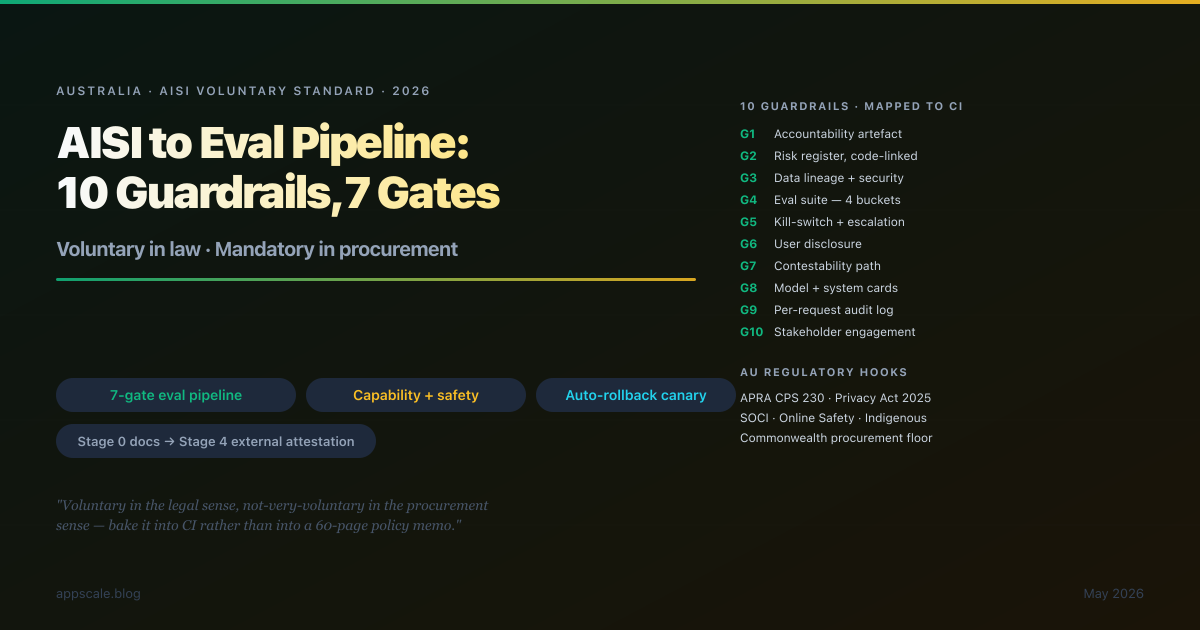

AI Safety Evals: Mapping the Australian AI Safety Institute Voluntary Standard to a Concrete Eval-Gate Pipeline (2026)

The AISI Voluntary Standard is voluntary in the legal sense and not-very-voluntary in the procurement sense — APRA-regulated entities, Commonwealth procurement, and SOCI critical infrastructure increasingly read it as a hard floor. This article maps the 10 guardrails to a concrete 7-gate eval pipeline, the four eval buckets (capability, safety, robustness, operational), AU-specific hooks (Privacy Act 2025, APRA CPS 230, Online Safety, Indigenous data sovereignty), 8 anti-patterns to retire, and the 5-stage maturity ladder. Globally portable: the same pipeline lands as a starting point for NIST AI RMF, EU AI Act, IMDA, and METI guidance.

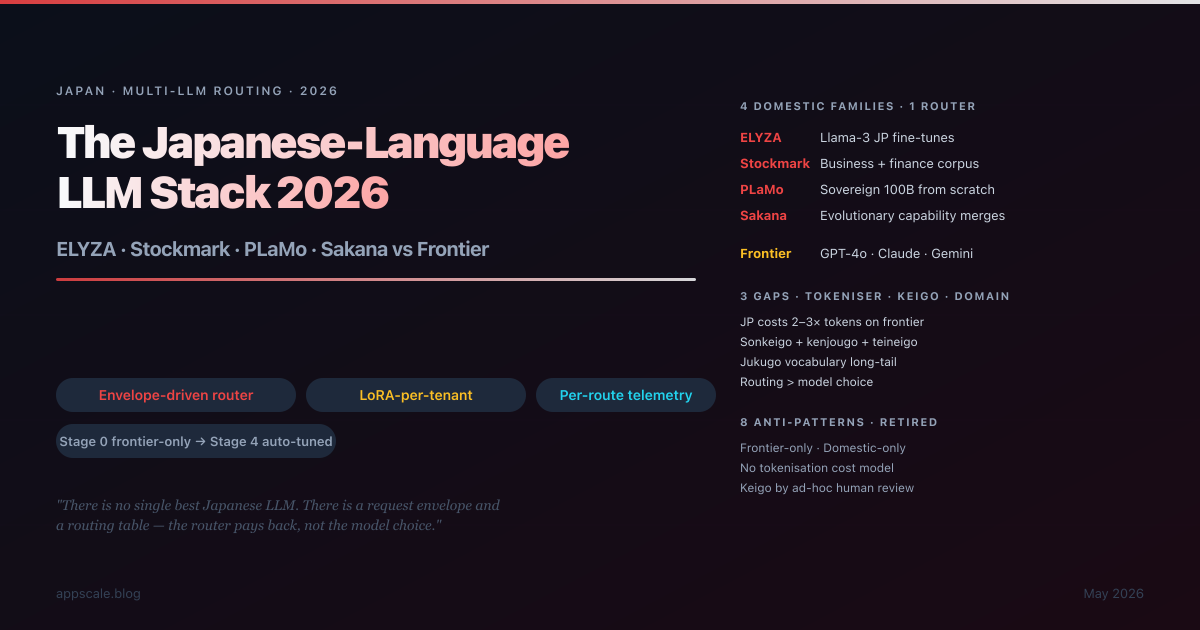

The Japanese-Language LLM Stack 2026: ELYZA, Stockmark, PLaMo vs Frontier — When to Use Which

Honest decision matrix for the 2026 Japanese LLM stack: when do ELYZA, Stockmark, PLaMo, and Sakana beat frontier APIs (GPT-4o, Claude, Gemini), and when do they not? Tokenisation cost penalty, keigo handling, vertical vocabulary, sovereignty requirements, and the multi-LLM routing architecture that lets you have all three. Globally portable pattern: Japanese is the demanding instance, so the routing layer that survives Japanese will survive Korean, Mandarin, Hindi, and every other language with similar characteristics.

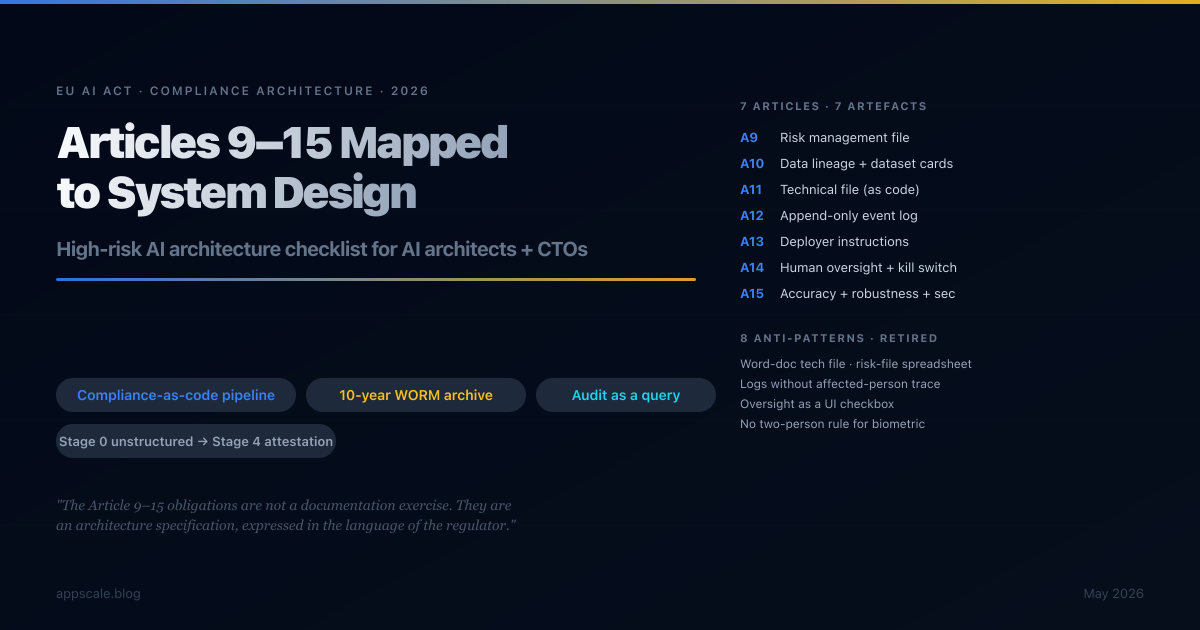

The EU AI Act High-Risk System Architecture Checklist: Articles 9–15 Mapped to System Design (2026)

A clause-by-clause architecture checklist for Articles 9–15 of the EU AI Act, the binding obligations for high-risk AI systems from August 2026. Seven articles, seven artefacts, one compliance-as-code pipeline. Risk file, dataset cards, technical file, logging pipeline, deployer instructions, human oversight architecture, and accuracy/robustness/cybersecurity test suite — all generated from the engineering repository, retained as immutable bundles, defensible at audit as a query rather than a project.

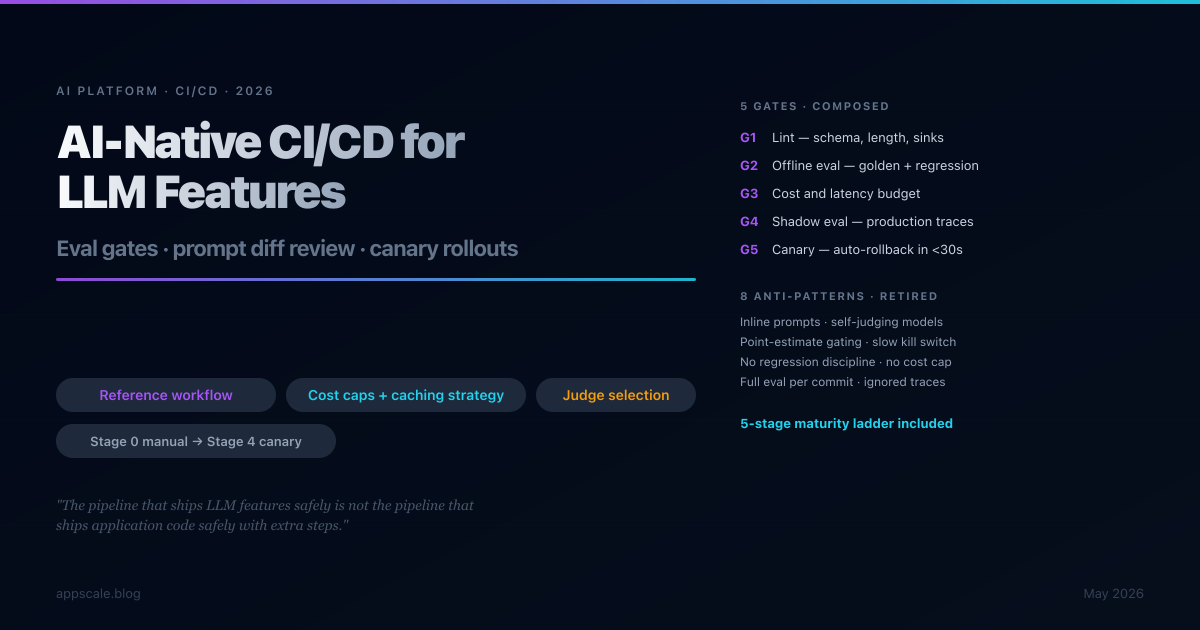

AI-Native CI/CD for LLM Features: Eval Gates, Prompt Diff Review, Canary Rollouts (2026)

Traditional CI/CD breaks for LLM features because outputs are non-deterministic, evaluation costs are non-trivial, and failure modes are silent. This article gives you a five-gate pipeline — lint, offline eval, cost and latency budget, shadow eval on production traces, canary with auto-rollback — that catches regressions at the cheapest gate that catches them, with a maturity ladder from manual review to full canary that most teams should walk in stages over twelve to eighteen months.
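The "cheapest gate that catches them" idea can be sketched as an ordered list of checks that stops at the first failure, so expensive gates never run on a candidate that a cheap gate already rejects. The `Gate` type, the metric keys, and every threshold below are assumptions made for illustration; canary auto-rollback is not modelled here.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Gate:
    name: str
    check: Callable[[dict], bool]  # returns True when the candidate passes


def run_gates(candidate: dict, gates: list[Gate]) -> tuple[list[str], Optional[str]]:
    """Run gates in the given (cheapest-first) order and stop at the first failure."""
    passed: list[str] = []
    for gate in gates:
        if not gate.check(candidate):
            return passed, gate.name
        passed.append(gate.name)
    return passed, None


# Gate order follows the five gates named above; thresholds are hypothetical.
GATES = [
    Gate("lint", lambda c: bool(c["prompt"].strip())),
    Gate("offline_eval", lambda c: c["eval_score"] >= 0.90),
    Gate("cost_latency_budget", lambda c: c["cost_usd"] <= 0.02 and c["p95_ms"] <= 1500),
    Gate("shadow_eval", lambda c: c["shadow_score"] >= 0.90),
    Gate("canary", lambda c: c["canary_error_rate"] <= 0.01),
]

candidate = {
    "prompt": "Summarise the ticket.",
    "eval_score": 0.93,
    "cost_usd": 0.05,  # over the assumed per-request budget
    "p95_ms": 900,
    "shadow_score": 0.95,
    "canary_error_rate": 0.0,
}
passed, failed_at = run_gates(candidate, GATES)
```

Here the candidate clears lint and offline eval, then stops at the cost and latency budget, so the shadow eval and canary stages are never paid for.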

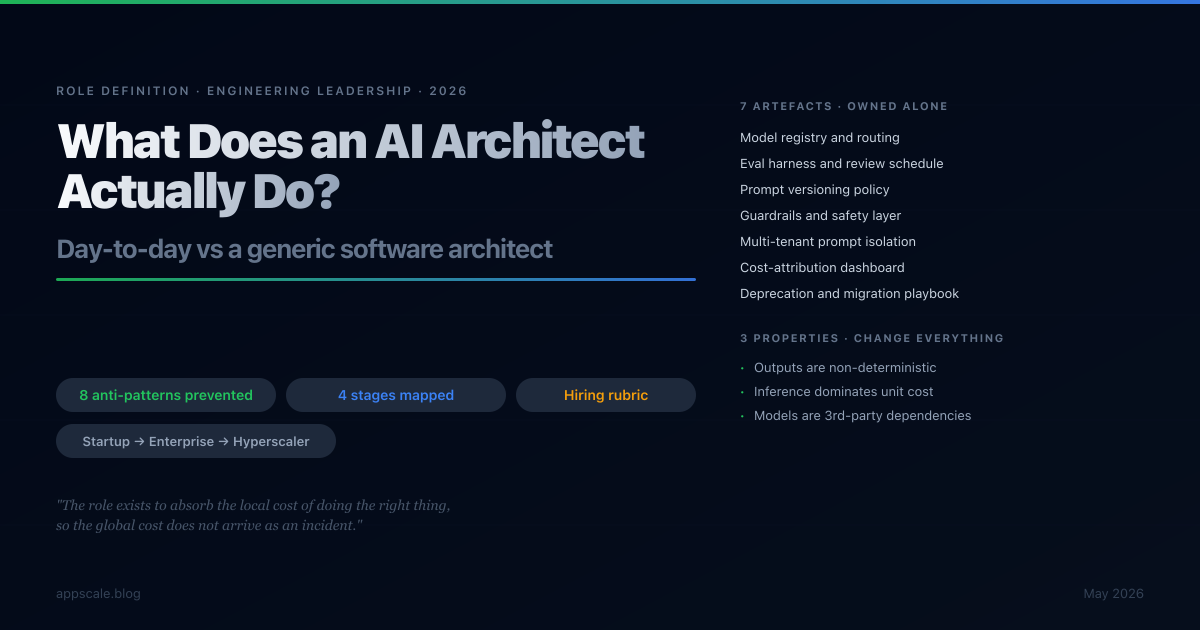

What Does an AI Architect Actually Do? Day-to-Day vs a Generic Software Architect (2026)

The role of "AI architect" has filled job boards faster than the role itself has been honestly defined. This article pins it down: what an AI architect actually ships in a typical week, where the work overlaps with a generic software architect and where it diverges sharply, the seven artefacts only this role produces, the eight anti-patterns it exists to prevent, and how the day-to-day shifts from startup to enterprise to hyperscaler.

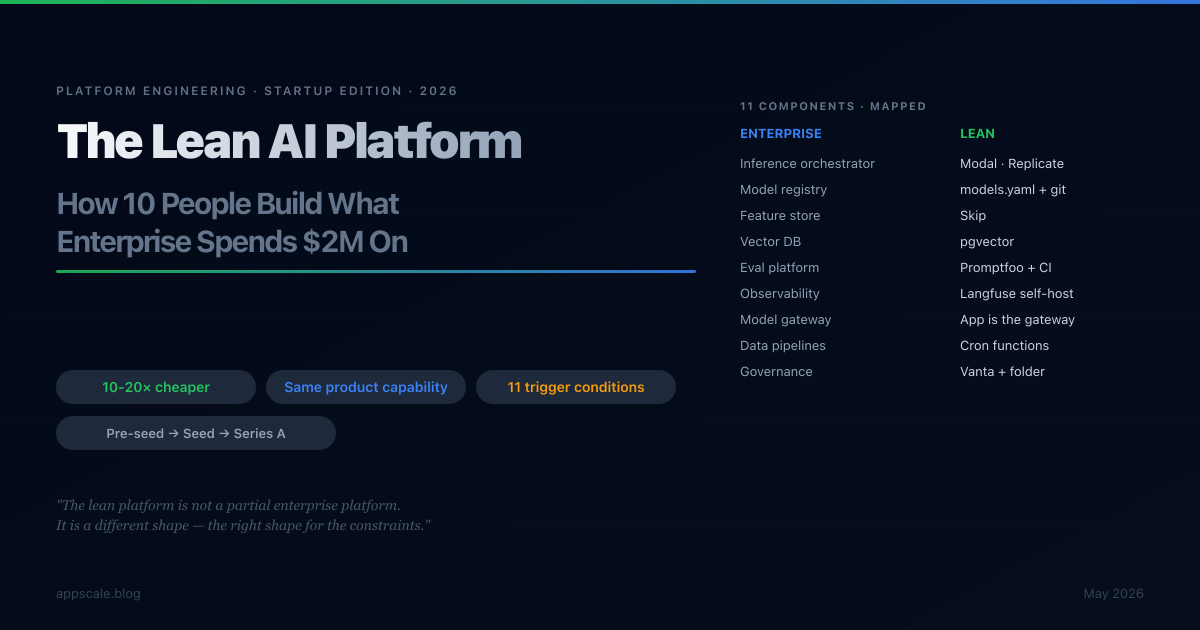

The Lean AI Platform: How a 10-Person Team Builds What Enterprise Spends $2M On

The enterprise AI platform burns $1.5M-$3M annually. The Series A startup ships the same product capability for one-tenth to one-twentieth of that cost. This is a component-by-component map of the enterprise AI platform onto startup constraints: what to build, what to skip, what to fake, and the exact trigger conditions that flip each answer. It includes the cost envelope at pre-seed, seed, and Series A.

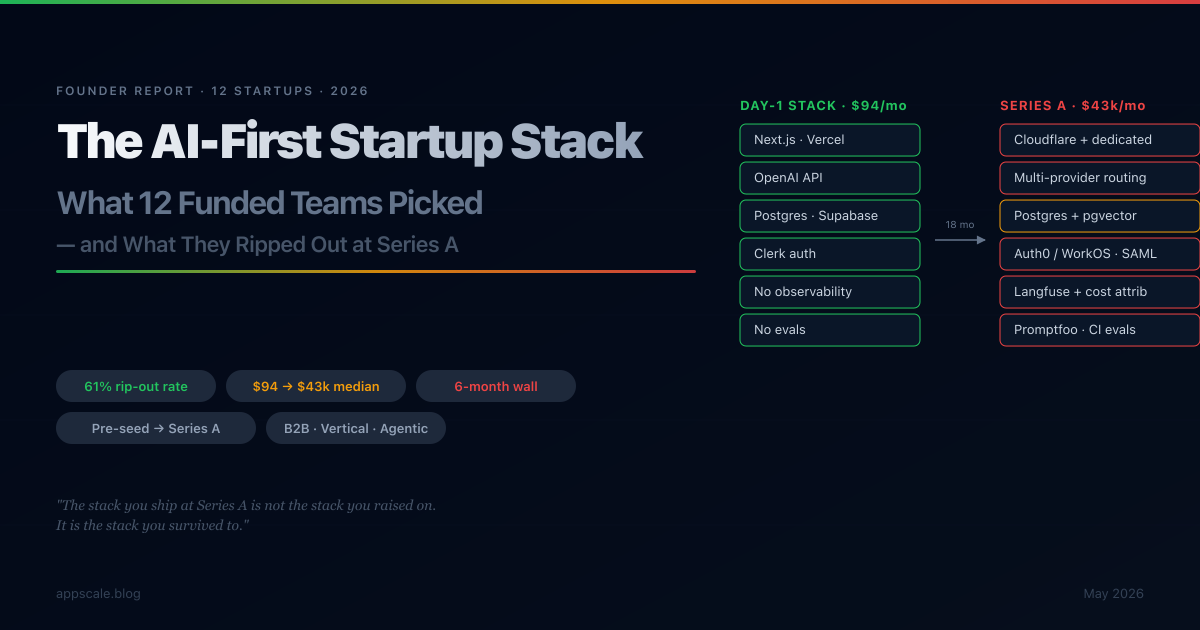

The 2026 AI-First Startup Stack: What 12 Funded Startups Actually Picked (and What They Ripped Out at Series A)

A composite report drawn from 12 funded AI-first startups (pre-seed to Series A): the Day-1 stack everyone converged on, the six-month walls every team hits, the 61% of the original stack that gets ripped out, and the median cost trajectory from $94/month to $43k/month. Includes a 2026 reference stack by stage and the trigger conditions for each upgrade.

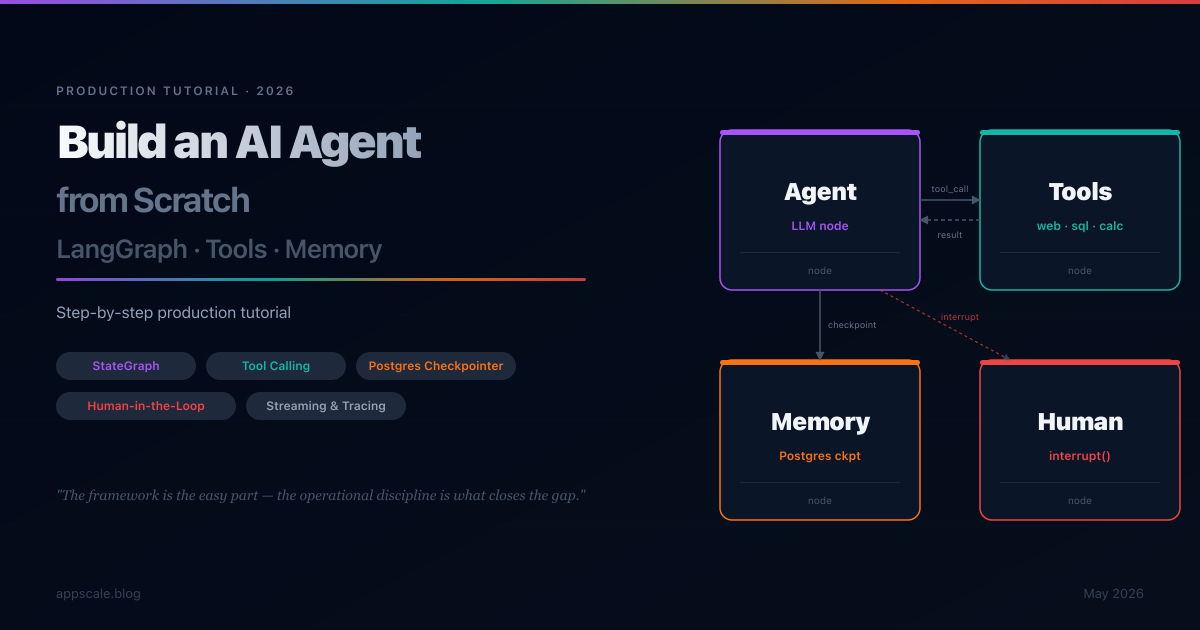

Build an AI Agent from Scratch: LangGraph + Tools + Memory — Step-by-Step Tutorial (2026)

A step-by-step production tutorial for building an AI agent from scratch with LangGraph: state graphs, tool calling, Postgres checkpointing for durable memory, human-in-the-loop interrupts, streaming, observability, and deployment patterns. Builds a research assistant that survives restarts, remembers conversations across sessions, and escalates to humans when uncertain.

Stay Ahead

Weekly deep dives into AI systems, cloud architecture, distributed systems, and engineering leadership. Join more than 5,000 engineers.