Engineering Insights

Deep dives into AI systems, cloud architecture, distributed systems, and engineering leadership.

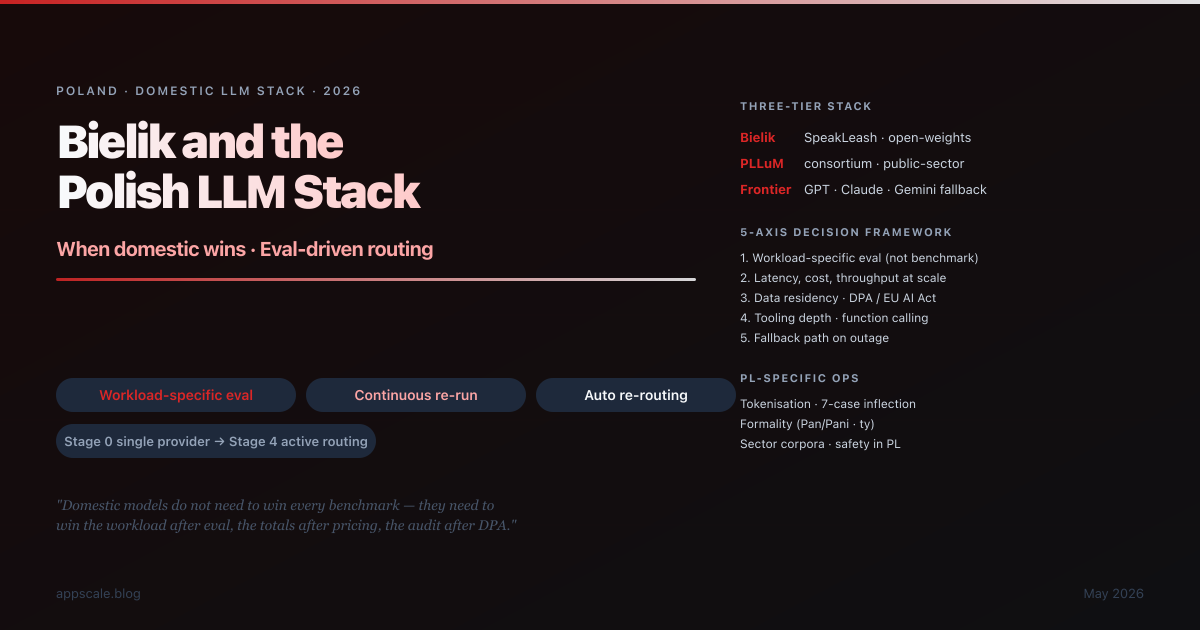

Bielik and the Polish LLM Stack: When Domestic Models Win, Eval-Driven Routing, and the Small-Language LLM Decision (2026)

Polish enterprises in 2026 face a choice they are largely unprepared for: pick a frontier model and live with the cost and residency consequences, or build a stack around domestic models (Bielik, PLLuM) and live with the eval discipline that requires. This article maps the three-tier Polish stack (Bielik · PLLuM · Frontier), the 5-axis decision framework, the 5-step workload-specific eval methodology, the eval-driven routing architecture, PL-specific operational considerations (tokenisation, 7-case inflection, formality register, sector corpora, safety evals, data residency under DPA/GDPR/EU AI Act), 8 anti-patterns, and the 5-stage maturity ladder. Portable to NL, CZ, HU, KR, TH, VN ecosystems.

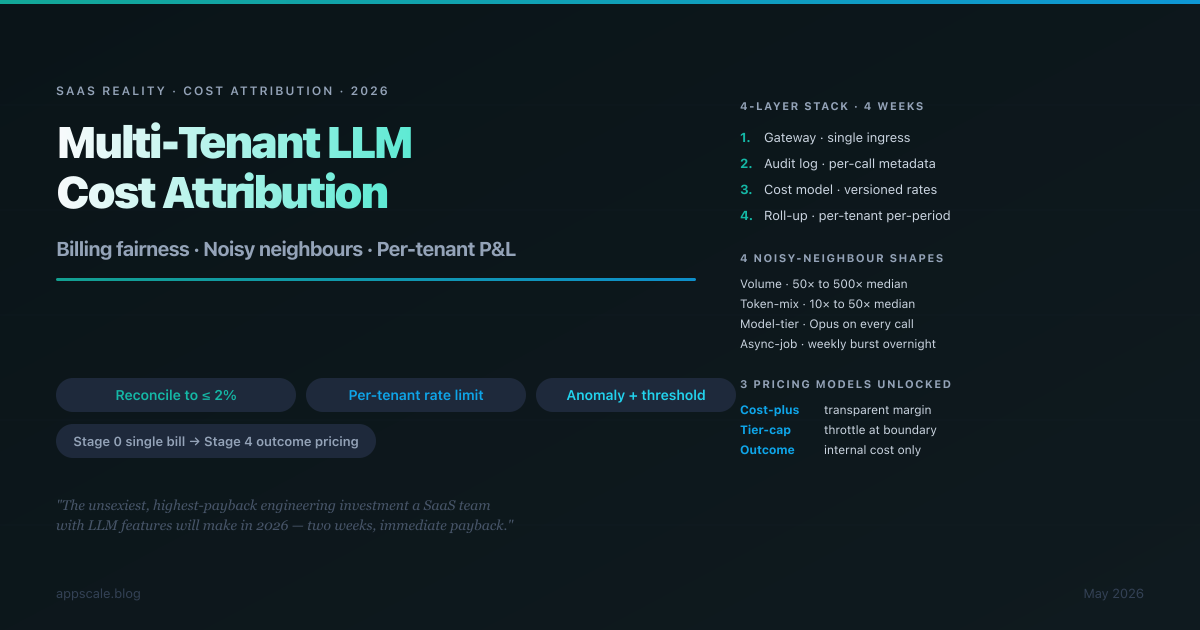

Multi-Tenant LLM Cost Attribution Architecture: Billing Fairness, Noisy Neighbours, and the Per-Tenant P&L (2026)

Multi-tenant SaaS with LLM features hits a problem the rest of SaaS solved a decade ago: when one tenant's usage costs 50× another tenant's and the bill arrives as a single line item from OpenAI or Anthropic, unit economics quietly become fiction. This article covers the four-layer architecture that fixes it (gateway, audit log, versioned cost model, roll-up), the week-by-week implementation order, the four shapes of noisy neighbour, three pricing models the architecture unlocks, 8 anti-patterns to retire, and the 5-stage maturity ladder. Globally portable across OpenAI, Anthropic, Bedrock, Vertex, and self-hosted vLLM.
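As a rough illustration of the roll-up layer named in this summary, the sketch below prices audit-log records against a versioned cost model and sums them per tenant. The record fields, the `PRICES` table, the tenant names, and every rate are hypothetical placeholders, not real vendor prices and not the article's own schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    """One audit-log entry written by the gateway (hypothetical shape)."""
    tenant: str
    model: str
    price_version: str
    input_tokens: int
    output_tokens: int


# Versioned cost model: (model, version) -> (input, output) USD per 1M tokens.
# All rates are made-up placeholders.
PRICES = {
    ("model-a", "v1"): (1.00, 4.00),
    ("model-a", "v2"): (0.50, 2.00),
}


def record_cost(rec: UsageRecord) -> float:
    """Price a single record with the rate card that was in force for it."""
    in_rate, out_rate = PRICES[(rec.model, rec.price_version)]
    return (rec.input_tokens * in_rate + rec.output_tokens * out_rate) / 1_000_000


def roll_up(records) -> dict:
    """Sum the cost of every record per tenant."""
    totals = defaultdict(float)
    for rec in records:
        totals[rec.tenant] += record_cost(rec)
    return dict(totals)


log = [
    UsageRecord("acme", "model-a", "v1", 2_000_000, 500_000),
    UsageRecord("acme", "model-a", "v2", 1_000_000, 0),
    UsageRecord("globex", "model-a", "v2", 100_000, 50_000),
]
totals = roll_up(log)
```

Storing the price version on each record, rather than looking up the current price at roll-up time, is what keeps historical bills stable when rates change.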

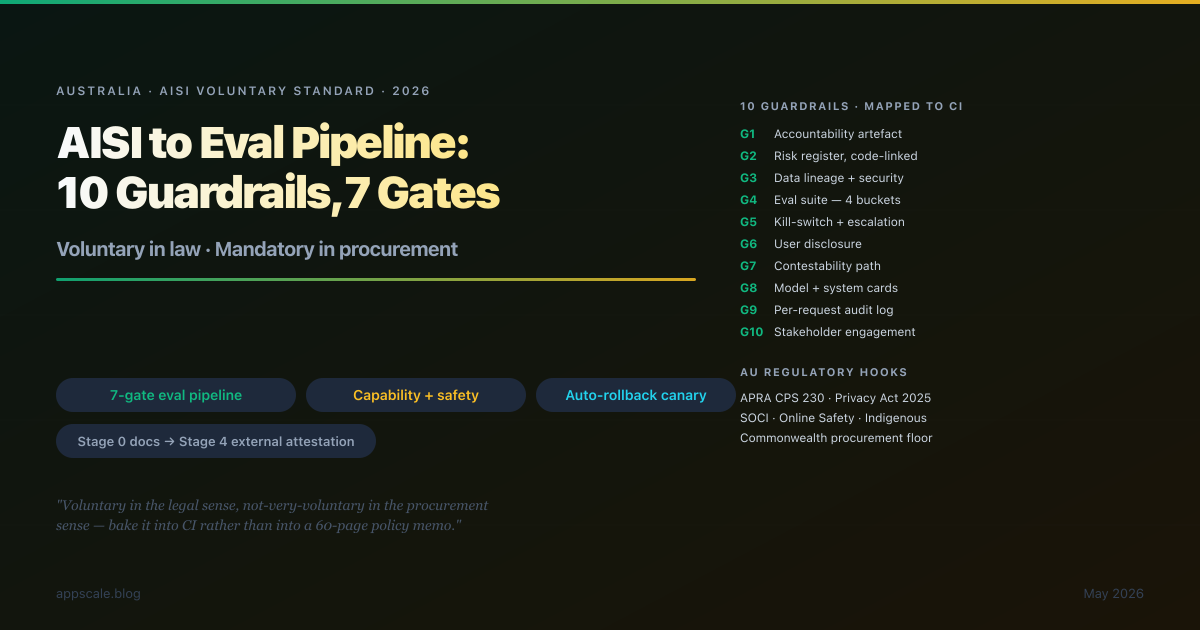

AI Safety Evals: Mapping the Australian AI Safety Institute Voluntary Standard to a Concrete Eval-Gate Pipeline (2026)

The AISI Voluntary Standard is voluntary in the legal sense and not-very-voluntary in the procurement sense — APRA-regulated entities, Commonwealth procurement, and SOCI critical infrastructure increasingly read it as a hard floor. This article maps the 10 guardrails to a concrete 7-gate eval pipeline, the four eval buckets (capability, safety, robustness, operational), AU-specific hooks (Privacy Act 2025, APRA CPS 230, Online Safety, Indigenous data sovereignty), 8 anti-patterns to retire, and the 5-stage maturity ladder. Globally portable: the same pipeline lands as a starting point for NIST AI RMF, EU AI Act, IMDA, and METI guidance.

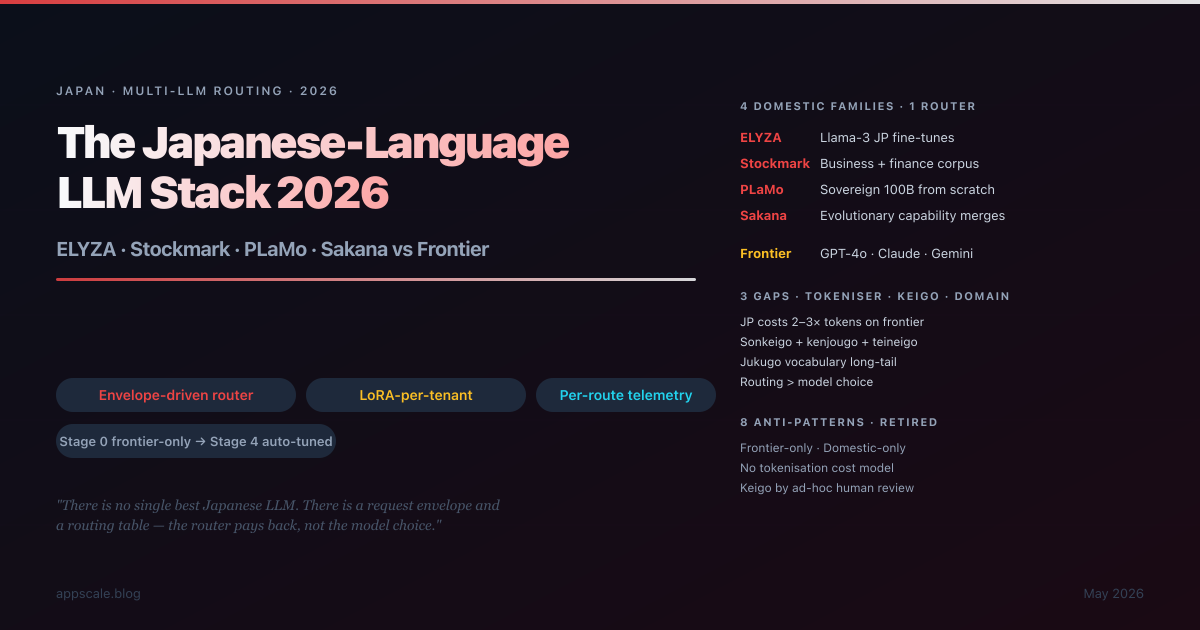

The Japanese-Language LLM Stack 2026: ELYZA, Stockmark, PLaMo vs Frontier — When to Use Which

Honest decision matrix for the 2026 Japanese LLM stack: when do ELYZA, Stockmark, PLaMo, and Sakana beat frontier APIs (GPT-4o, Claude, Gemini), and when do they not? Tokenisation cost penalty, keigo handling, vertical vocabulary, sovereignty requirements, and the multi-LLM routing architecture that lets you have all three. Globally portable pattern: Japanese is the demanding instance, so a routing layer that survives Japanese will survive Korean, Mandarin, Hindi, and every other language with similar characteristics.

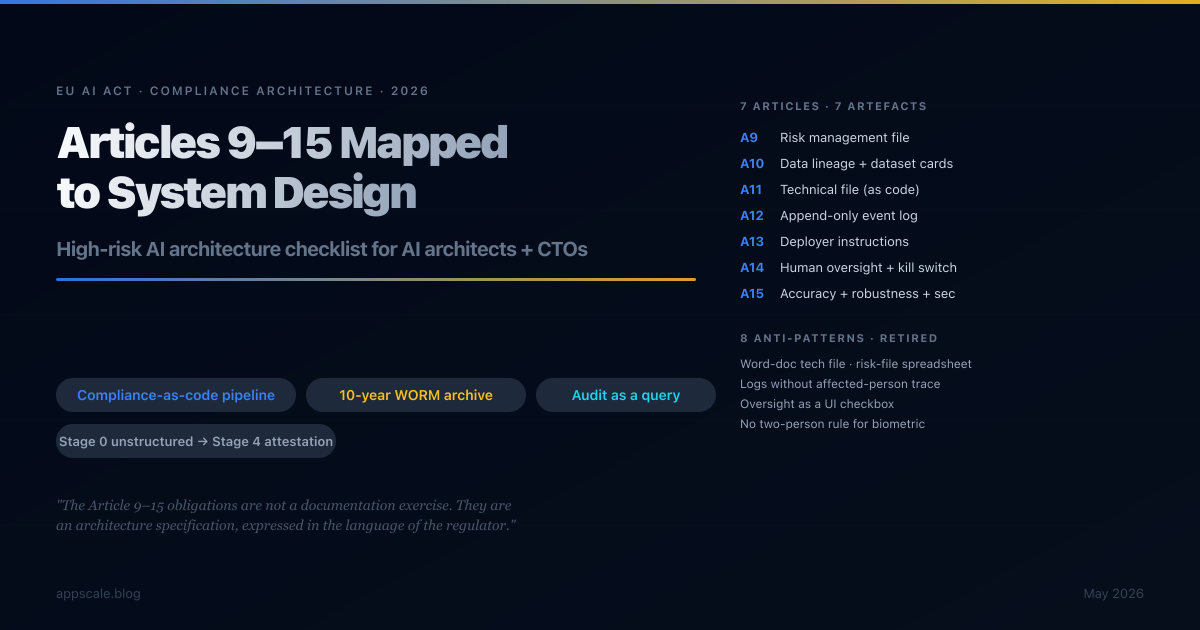

The EU AI Act High-Risk System Architecture Checklist: Articles 9–15 Mapped to System Design (2026)

A clause-by-clause architecture checklist for Articles 9–15 of the EU AI Act, the binding obligations for high-risk AI systems from August 2026. Seven articles, seven artefacts, one compliance-as-code pipeline. Risk file, dataset cards, technical file, logging pipeline, deployer instructions, human oversight architecture, and accuracy/robustness/cybersecurity test suite — all generated from the engineering repository, retained as immutable bundles, defensible at audit as a query rather than a project.

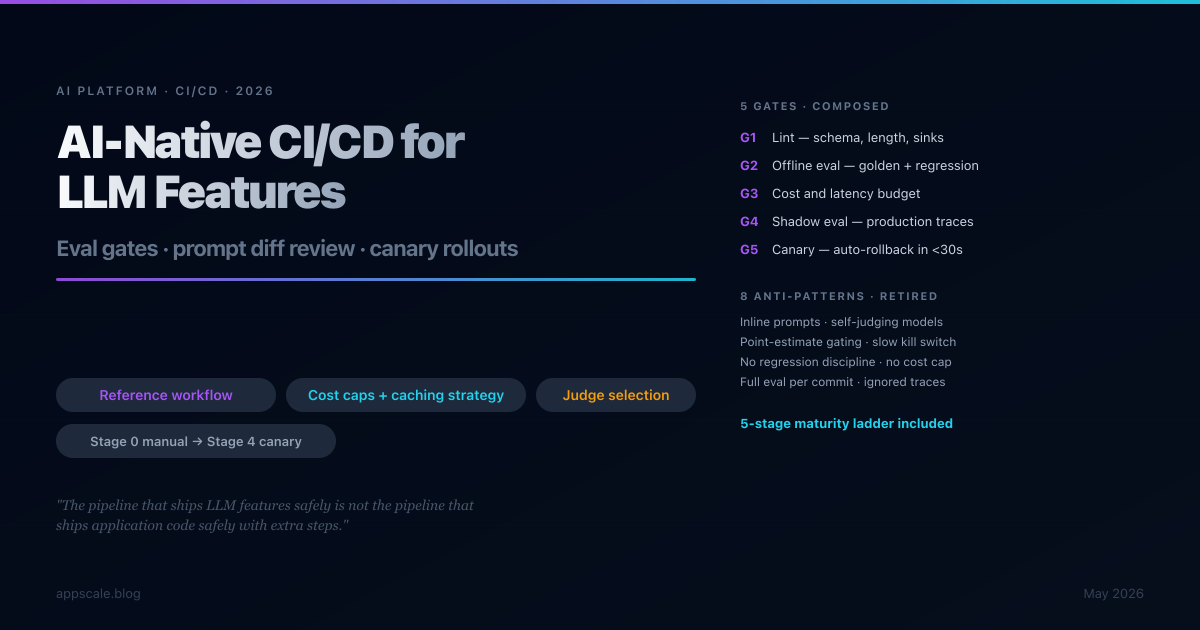

AI-Native CI/CD for LLM Features: Eval Gates, Prompt Diff Review, Canary Rollouts (2026)

Traditional CI/CD breaks for LLM features because outputs are non-deterministic, evaluation costs are non-trivial, and failure modes are silent. This article gives you a five-gate pipeline — lint, offline eval, cost and latency budget, shadow eval on production traces, canary with auto-rollback — that catches regressions at the cheapest gate that catches them, with a maturity ladder from manual review to full canary that most teams should walk in stages over twelve to eighteen months.
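The "cheapest gate first" ordering can be sketched as a short-circuiting pipeline: gates run in the order listed above and the first failure stops the run, so expensive gates never execute for a candidate that a cheap gate already rejects. The candidate fields and all thresholds below are illustrative assumptions, not values from the article.

```python
from typing import Callable, NamedTuple, Optional


class Gate(NamedTuple):
    name: str
    check: Callable[[dict], bool]  # returns True when the candidate passes


def run_pipeline(gates: list, candidate: dict) -> Optional[str]:
    """Run gates in order; return the first failing gate's name, or None."""
    for gate in gates:
        if not gate.check(candidate):
            return gate.name
    return None


# Gates listed cheapest first. Thresholds are hypothetical.
GATES = [
    Gate("lint", lambda c: bool(c["prompt"].strip())),
    Gate("offline_eval", lambda c: c["eval_score"] >= 0.90),
    Gate("cost_latency_budget", lambda c: c["p95_latency_ms"] <= 2000),
    Gate("shadow_eval", lambda c: c["shadow_agreement"] >= 0.95),
    Gate("canary", lambda c: c["canary_error_rate"] <= 0.01),
]

candidate = {
    "prompt": "Summarise the ticket.",
    "eval_score": 0.93,
    "p95_latency_ms": 1400,
    "shadow_agreement": 0.97,
    "canary_error_rate": 0.002,
}
failed_at = run_pipeline(GATES, candidate)  # None means every gate passed
```

In a real pipeline each `check` would launch the corresponding job instead of reading a precomputed number; the short-circuit structure stays the same.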

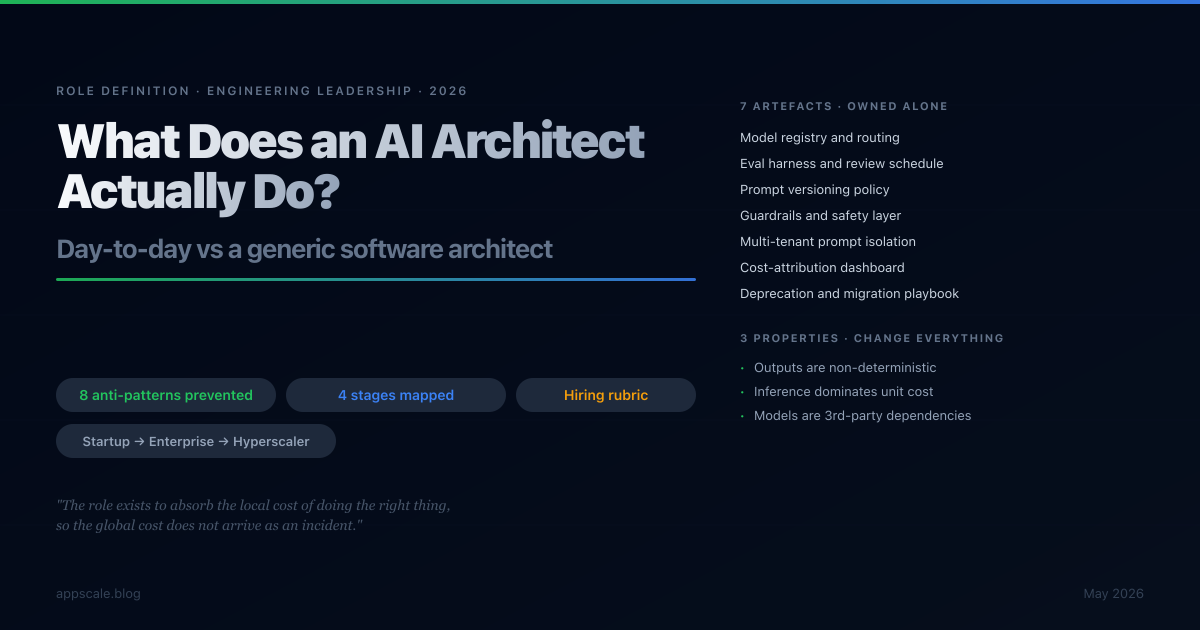

What Does an AI Architect Actually Do? Day-to-Day vs a Generic Software Architect (2026)

The role of "AI architect" has filled job boards faster than the role itself has been honestly defined. This article pins it down: what an AI architect actually ships in a typical week, where the work overlaps with a generic software architect and where it diverges sharply, the seven artefacts only this role produces, the eight anti-patterns it exists to prevent, and how the day-to-day shifts from startup to enterprise to hyperscaler.

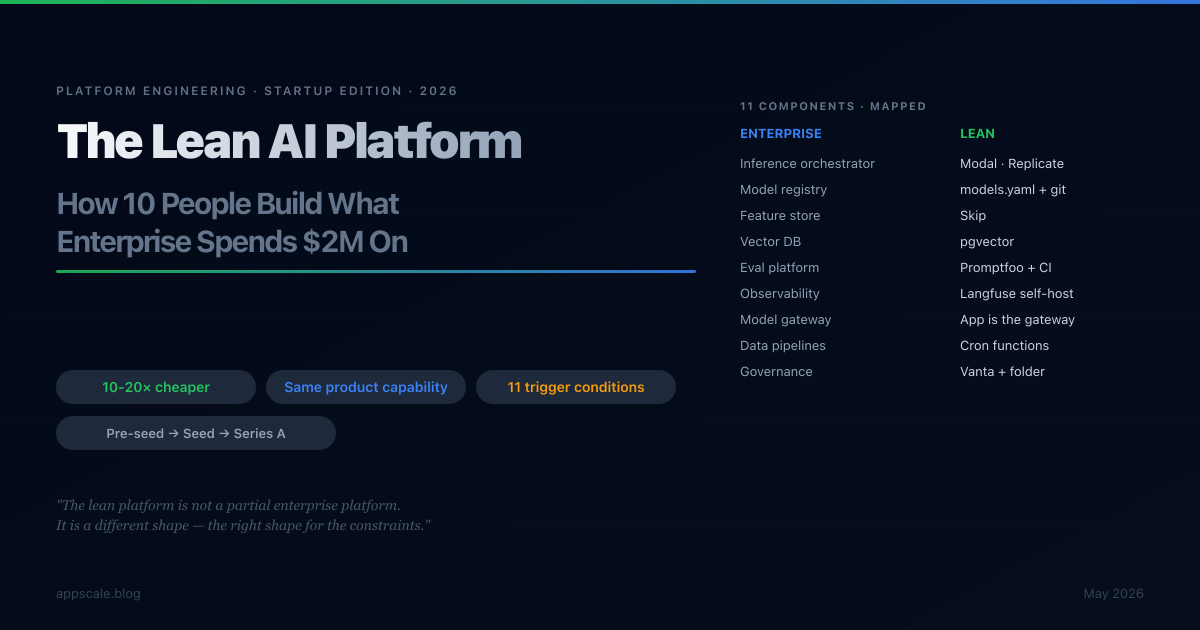

The Lean AI Platform: How a 10-Person Team Builds What Enterprise Spends $2M On

The enterprise AI platform burns $1.5M-$3M annually. The Series A startup ships the same product capability for one-tenth to one-twentieth that cost. This is a component-by-component map of the enterprise AI platform onto startup constraints — what to build, what to skip, what to fake, and the exact trigger conditions that flip each answer. With the cost envelope at pre-seed, seed, and Series A.

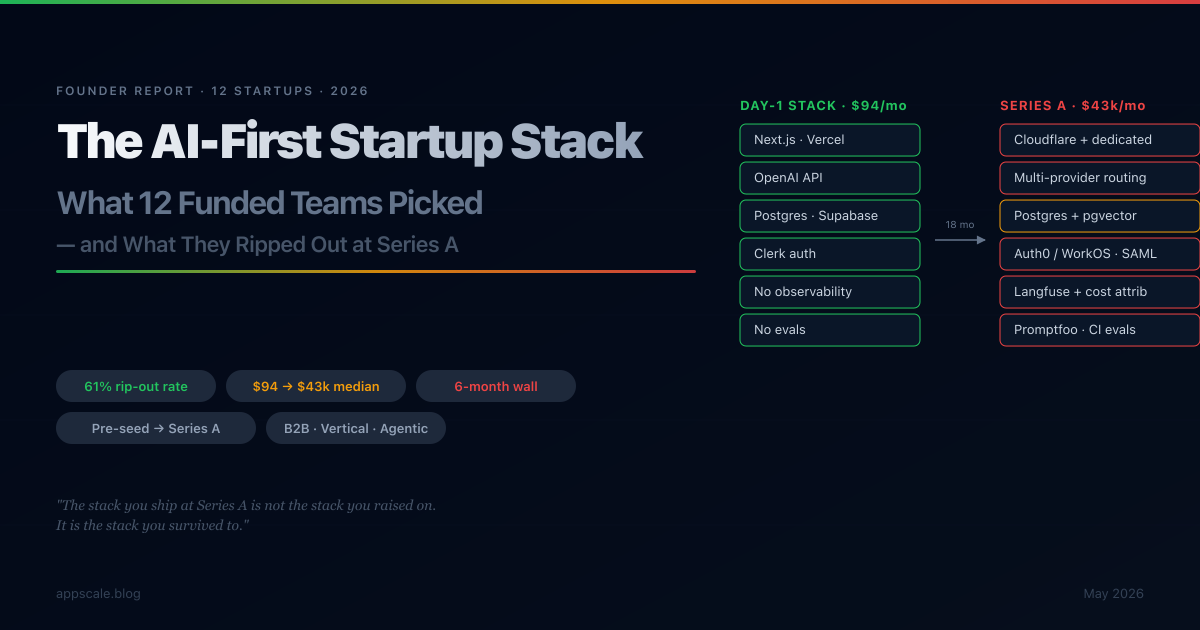

The 2026 AI-First Startup Stack: What 12 Funded Startups Actually Picked (and What They Ripped Out at Series A)

A composite report drawn from 12 funded AI-first startups (pre-seed to Series A) — the Day-1 stack everyone converged on, the six-month walls every team hits, the 61% of the original stack that gets ripped out, and the cost trajectory from $94/month to $43k/month median. With a 2026 reference stack by stage and the trigger conditions for each upgrade.

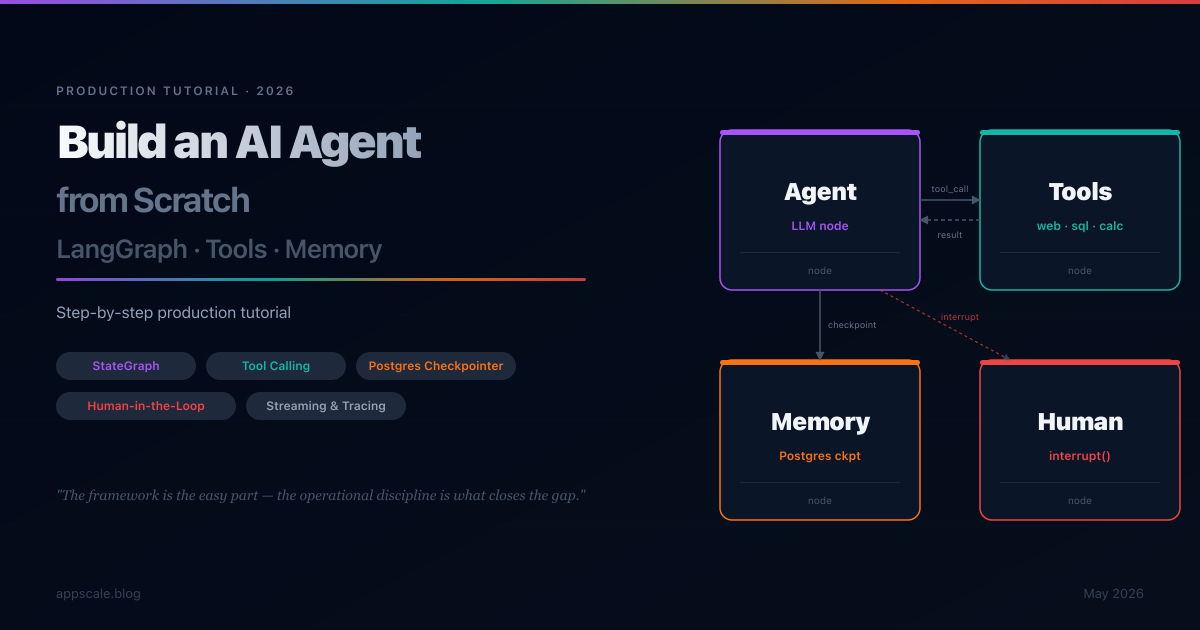

Build an AI Agent from Scratch: LangGraph + Tools + Memory — Step-by-Step Tutorial (2026)

A step-by-step production tutorial for building an AI agent from scratch with LangGraph: state graphs, tool calling, Postgres checkpointing for durable memory, human-in-the-loop interrupts, streaming, observability, and deployment patterns. Builds a research assistant that survives restarts, remembers conversations across sessions, and escalates to humans when uncertain.

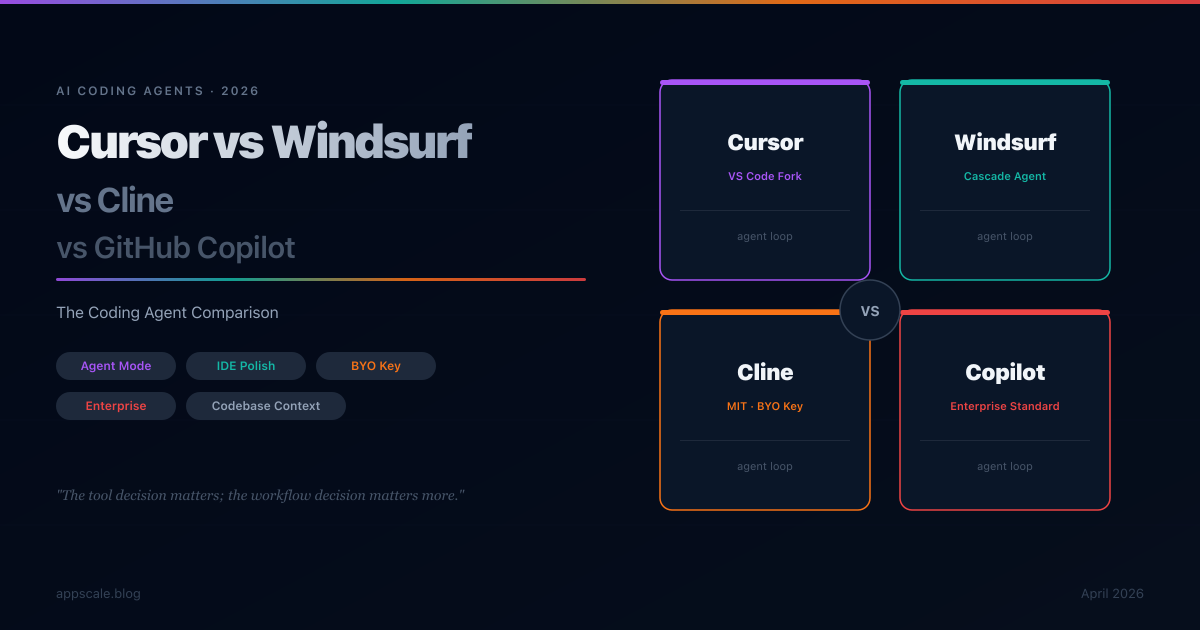

Cursor vs Windsurf vs Cline vs GitHub Copilot: AI Coding Agent Comparison (2026)

Cursor, Windsurf, Cline, and GitHub Copilot are the four dominant AI coding agents in 2026. This comparison covers agent architecture, IDE experience, model flexibility, enterprise readiness, pricing economics, and codebase context handling — with a four-constraint decision framework that maps each tool to specific team scenarios.

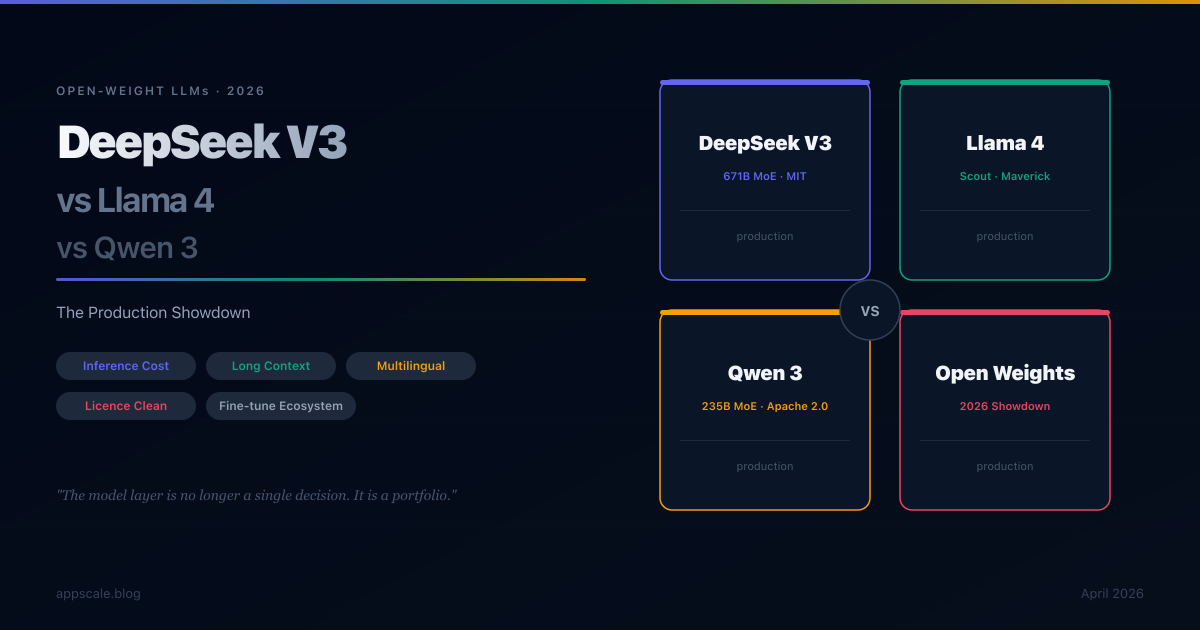

DeepSeek V3 vs Llama 4 vs Qwen 3: Open-Weight Model Showdown for Production (2026)

DeepSeek V3, Llama 4, and Qwen 3 are the three dominant open-weight model families in 2026. This deep comparison covers inference economics, hardware footprint, fine-tuning ergonomics, licence constraints, multilingual quality, and ecosystem maturity — with a four-constraint decision framework that maps each family to specific production scenarios.

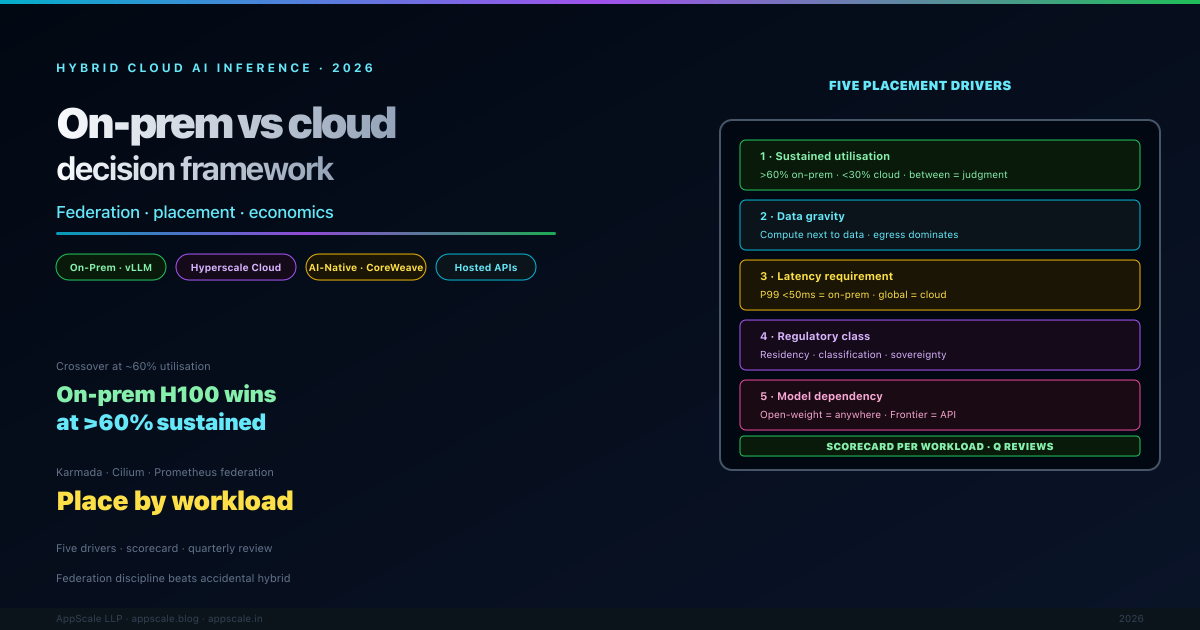

Hybrid Cloud AI Inference: On-Prem vs Cloud Decision Framework (2026)

A working architect's reference for the on-prem vs cloud vs hybrid AI inference decision in 2026. The five placement drivers (utilisation, data gravity, latency, regulatory, model dependency), the GPU cost crossover math (~60% utilisation), the federation control plane (Karmada / Argo CD / Cilium ClusterMesh / Prometheus federation), the model-serving stack across boundaries (vLLM / NIM / Triton / Bedrock / Azure OpenAI), the LLM gateway pattern that makes self-hosted and hosted models indistinguishable, and the failure modes (cluster drift, identity divergence, egress costs, burst surprises) that derail real hybrid deployments.
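One simplified way to read the crossover figure: owned hardware costs money every hour whether or not it is busy, while on-demand cloud GPUs cost money only for the hours used, so the two are equal when utilisation times the cloud rate equals the amortised on-prem rate. The sketch below encodes that model; the hourly rates are hypothetical numbers chosen to land on 60%, and the model ignores egress, staffing, and reserved or spot pricing.

```python
def crossover_utilisation(onprem_hourly: float, cloud_hourly: float) -> float:
    """Utilisation above which owned GPUs are cheaper than on-demand cloud.

    Break-even condition: utilisation * cloud_hourly == onprem_hourly.
    """
    if cloud_hourly <= 0:
        raise ValueError("cloud_hourly must be positive")
    return onprem_hourly / cloud_hourly


def cheaper_placement(utilisation: float, onprem_hourly: float, cloud_hourly: float) -> str:
    """Pick the cheaper placement for a given sustained utilisation (0..1)."""
    threshold = crossover_utilisation(onprem_hourly, cloud_hourly)
    return "on-prem" if utilisation > threshold else "cloud"


# Hypothetical rates: amortised on-prem GPU-hour vs on-demand cloud GPU-hour.
ONPREM_HOURLY = 2.40
CLOUD_HOURLY = 4.00
threshold = crossover_utilisation(ONPREM_HOURLY, CLOUD_HOURLY)
```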

Green AI: Cut Inference Cost 80% with Quantisation, Distillation, Speculative Decoding (2026)

A practical reference for cutting LLM inference cost by 60-90% in 2026. Quantisation (INT4 / INT8 / FP8 / GPTQ / AWQ), distillation, speculative decoding, continuous batching with paged attention, prefix and KV caching, model routing, the inference-server choice between vLLM/TGI/TensorRT-LLM/SGLang, GPU sharing with MIG, spot/preemptible failover, regional cost arbitrage, and carbon-aware scheduling — with the quality, latency, and engineering tradeoffs each technique imposes, and the gateway architecture that keeps the savings from regressing.

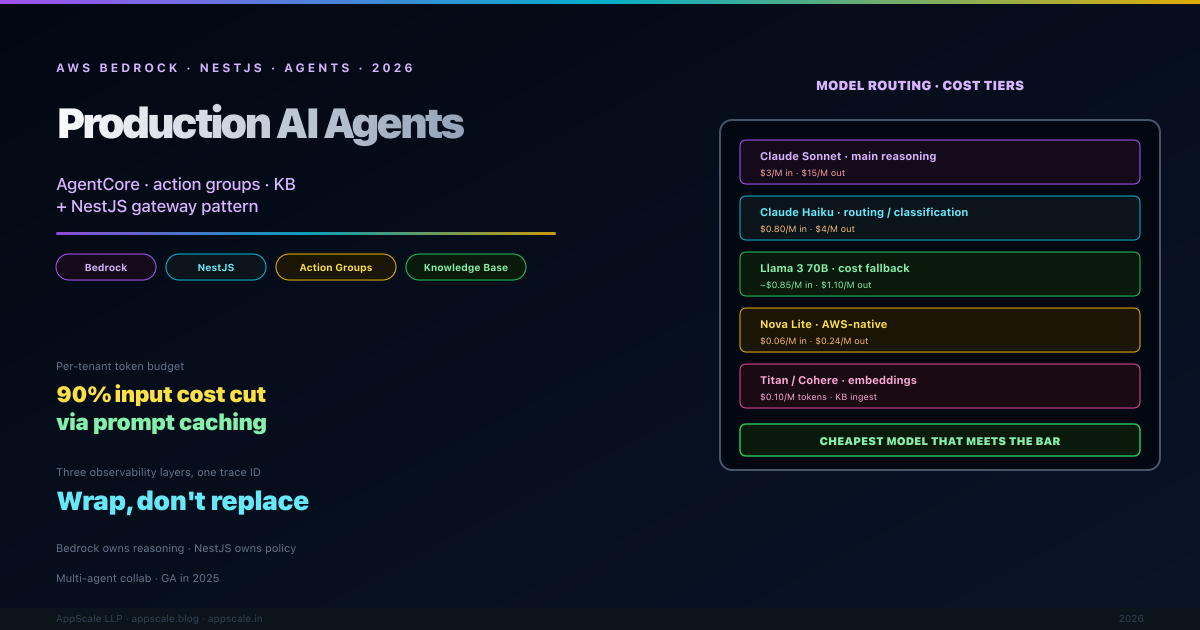

AI Agents on AWS Bedrock + NestJS: Production Architecture (2026)

A production-grade reference for building AI agents on AWS Bedrock with a NestJS gateway in 2026 — Bedrock AgentCore, action groups backed by Lambda, knowledge bases over S3 + OpenSearch, the routing-and-guardrail layer that NestJS must own, the model-selection cost arithmetic, three-layer observability (gateway + agent-internal + per-token cost), Bedrock Guardrails plus app-specific PII redaction, multi-agent collaboration patterns, and the failure modes you only learn about by running this in production.

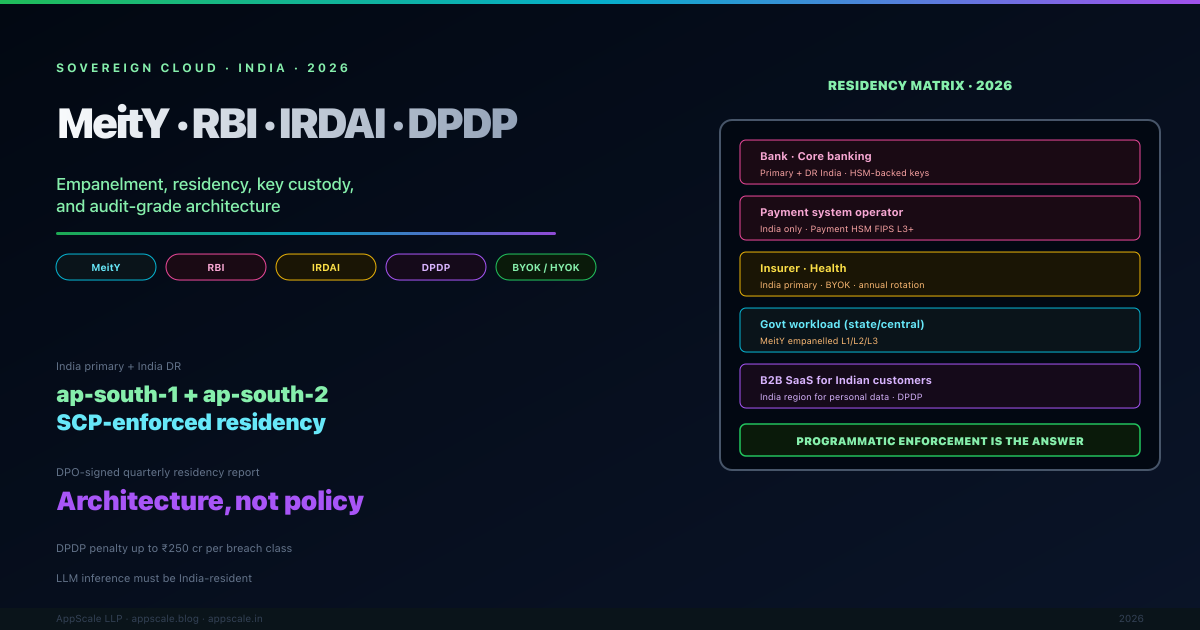

Sovereign Cloud in India: MeitY Empanelment, RBI/IRDAI/DPDP Architecture (2026)

A working architect's reference for Indian sovereign-cloud architecture in 2026: MeitY empanelment levels, RBI cloud guidelines, IRDAI advisory, DPDP Act 2023 obligations, the residency and key-custody patterns that pass audit, BYOK vs HYOK decisions, the AI-inference-residency problem, and the programmatic enforcement (SCPs, mandatory tags, daily inventories, DPO-signed quarterly reports) that turns "we keep data in India" into an audit-grade evidence chain. Includes the regulator-by-workload compliance matrix, the reference India-only topology, and the most common audit findings to avoid.

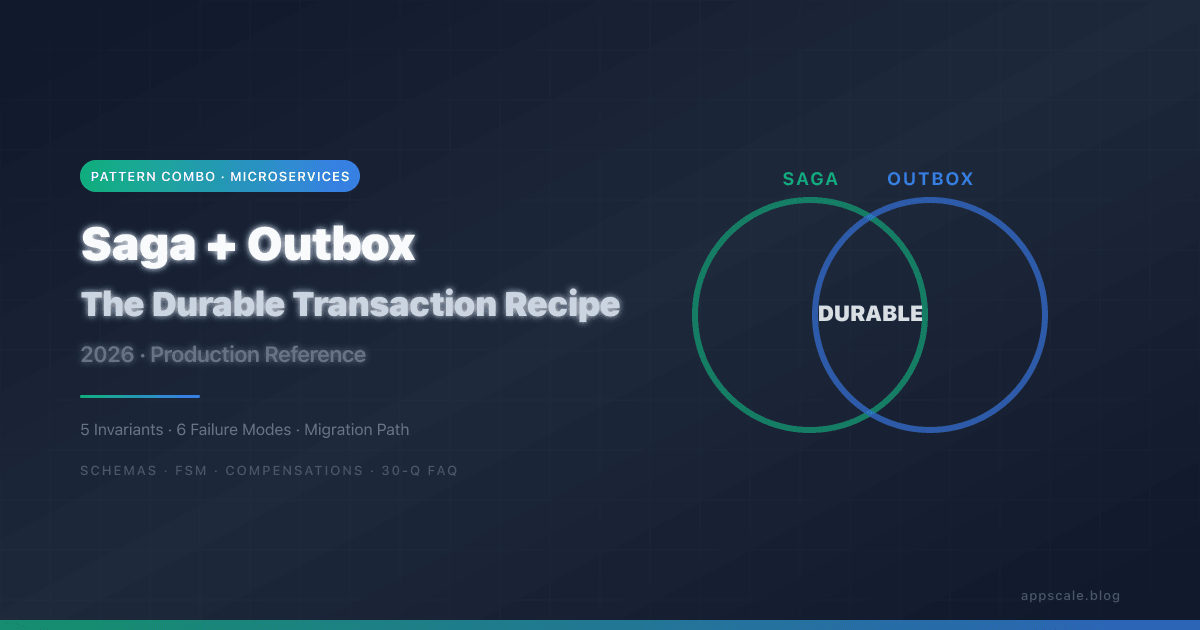

Saga + Outbox: The Durable Transaction Recipe (2026)

The Saga and Outbox patterns are each useful alone, but in production they are most valuable when combined. This article is the production recipe for combining them: the five invariants you must preserve, the schemas, the state machine, six failure modes the combination survives, common implementation mistakes, observability, and an incremental migration path from a synchronous chained-call implementation. With four Mermaid diagrams, full SQL schemas, and a 30-question FAQ.
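A minimal sketch of the Outbox half of the combination, using Python's built-in `sqlite3`: a saga step writes its state change and the event announcing it in one transaction, and a separate relay publishes unsent rows afterwards. The table layout, the `order.placed` topic, and the `publish` callback are hypothetical stand-ins, not the article's schemas.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT NOT NULL);
    CREATE TABLE outbox (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        topic TEXT NOT NULL,
        payload TEXT NOT NULL,
        published INTEGER NOT NULL DEFAULT 0
    );
""")


def place_order(order_id: int) -> None:
    """Saga step: the state change and its event commit atomically."""
    with conn:  # one transaction; commits on success, rolls back on error
        conn.execute(
            "INSERT INTO orders (id, status) VALUES (?, 'PENDING')", (order_id,)
        )
        conn.execute(
            "INSERT INTO outbox (topic, payload) VALUES (?, ?)",
            ("order.placed", json.dumps({"order_id": order_id})),
        )


def relay(publish) -> int:
    """Publish unsent outbox rows in insertion order; return how many were sent."""
    rows = conn.execute(
        "SELECT id, topic, payload FROM outbox WHERE published = 0 ORDER BY id"
    ).fetchall()
    for row_id, topic, payload in rows:
        publish(topic, json.loads(payload))  # at-least-once: may repeat after a crash
        with conn:
            conn.execute("UPDATE outbox SET published = 1 WHERE id = ?", (row_id,))
    return len(rows)
```

Because a row is marked only after `publish` returns, a crash between the two can cause a redelivery, so consumers in this sketch are assumed to be idempotent.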

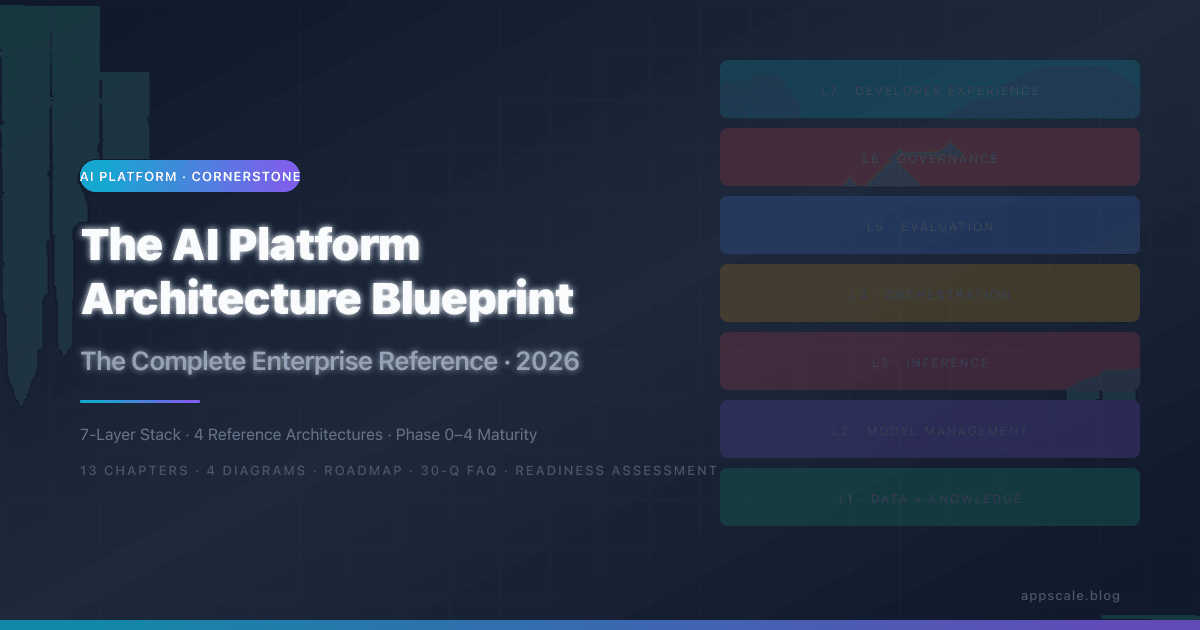

The AI Platform Architecture Blueprint: The Complete Enterprise Reference (2026)

A complete 13-chapter enterprise reference for designing, building, and evolving an AI platform — the shared substrate that lets every team ship AI features without rebuilding the same plumbing. Covers the 7-layer platform model (data, model management, inference, orchestration, evaluation, governance, developer experience), 4 reference architectures (Startup, Growth, Enterprise, Regulated-Industry), the model lifecycle and registry, multi-tenant data isolation, semantic caching and routing, FinOps with attribution and budget guardrails, EU AI Act and HIPAA-aligned governance, and the Phase 0 to Phase 4 maturity roadmap with team sizes and timelines. Includes a 20-question platform readiness assessment and a 30-question FAQ optimized for the decisions enterprise architects actually face.

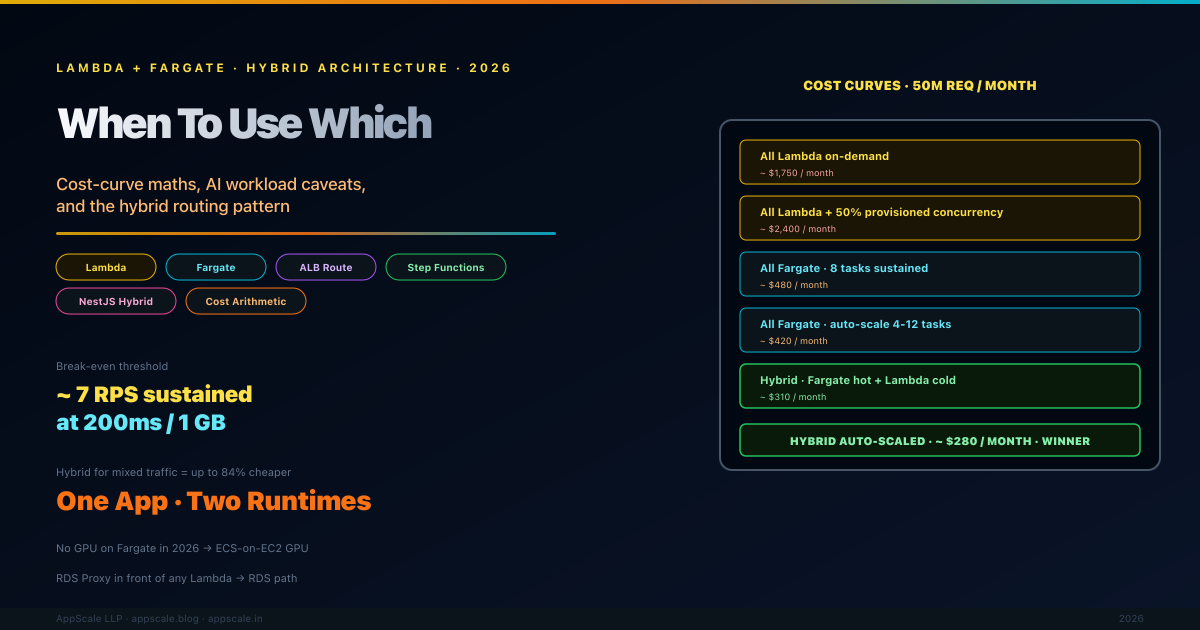

AWS Lambda + Fargate Hybrid Architecture: When to Use Which (2026)

The Lambda-versus-Fargate question stopped being binary and became openly hybrid by 2026. Teams shipping the cleanest production architectures use Lambda for its cold-start economics on sporadic event-driven work, Fargate for its long-running container economics on sustained traffic, and route between the two intelligently. This article covers the cost-curve arithmetic that decides most choices, the AI inference workload caveats including the 2026 GPU-on-Fargate gap, the hybrid routing pattern using ALB / EventBridge / Step Functions, NestJS deployment patterns that target both runtimes from one codebase, a real cost comparison spreadsheet for a 50M-request-per-month API, and the observability discipline required to run both together.

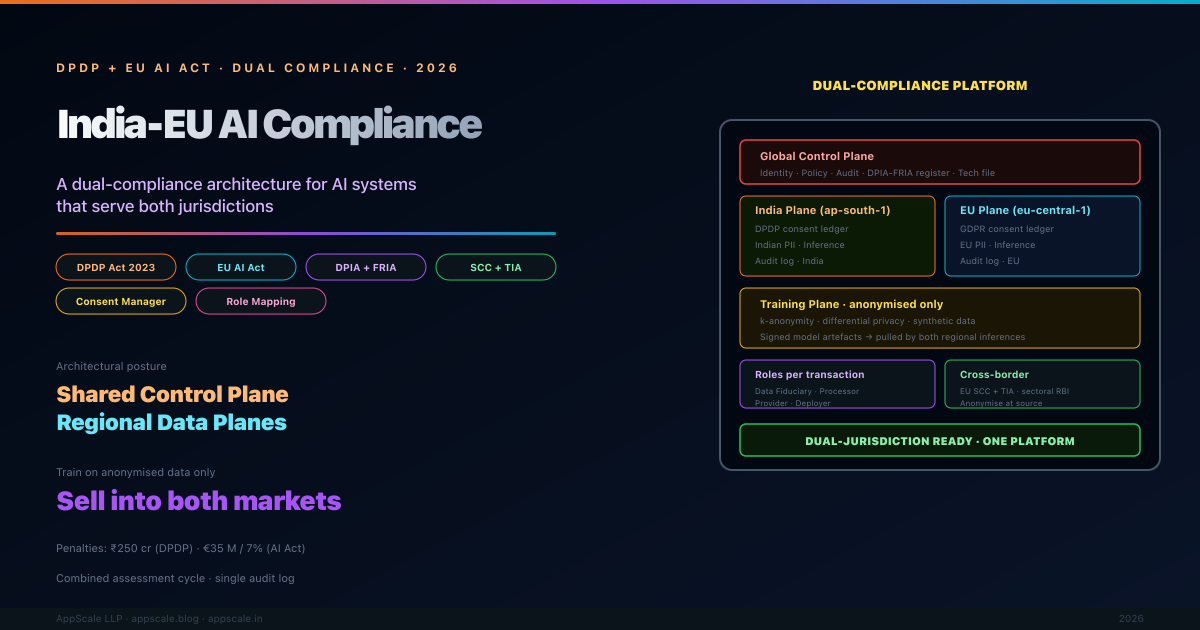

DPDP + EU AI Act: A Dual-Compliance Architecture for India-EU AI Systems (2026)

An AI product built in Bengaluru that serves a German healthcare buyer is governed by two regulators that do not coordinate — DPDP in India and the EU AI Act in Europe. This article is the architect's reference for shipping an India-EU AI platform that satisfies both regimes from one codebase: the DPDP versus AI Act overlap matrix, data residency arithmetic for India localisation alongside GDPR cross-border rules, the dual consent ledger that captures both DPDP notice-acknowledgement and GDPR lawful basis, the combined DPIA-FRIA workflow, the role mapping between Data Fiduciary, Provider, and Deployer, the SCC + TIA mechanics for EU-to-India transfers, and a reference architecture with shared control plane and regional data planes.

Stay at the Cutting Edge

Weekly deep dives on AI systems, cloud architecture, distributed systems, and engineering leadership. Join 5,000+ engineers.